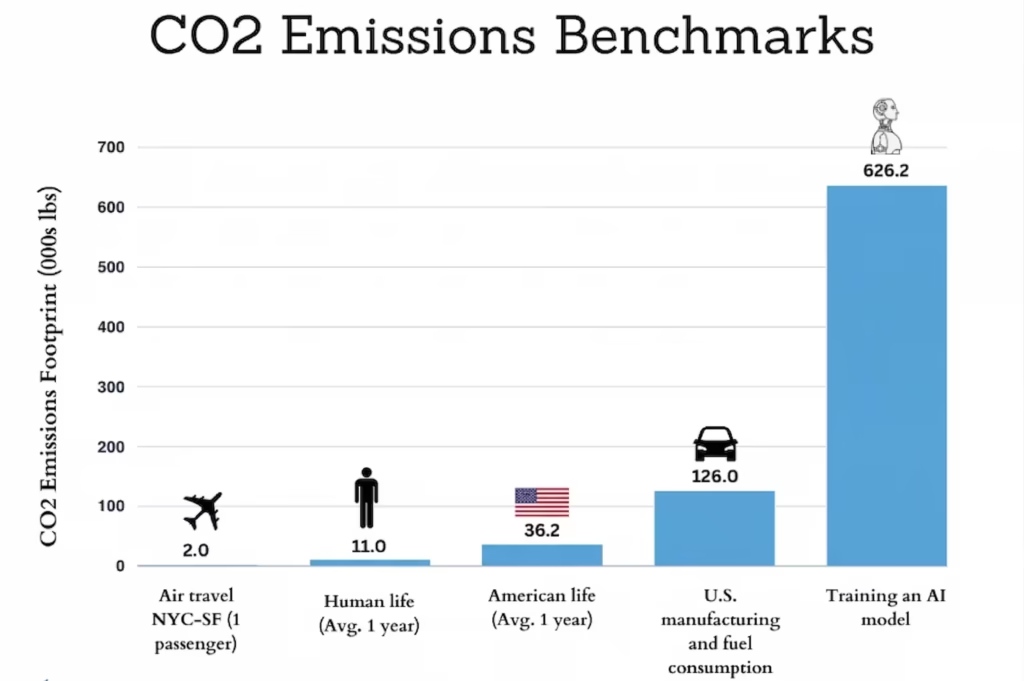

“Training of OpenAI’s GPT-3 released over 552 tonnes of CO₂.” That figure shows up in articles, X (Twitter) threads, and university presentations everywhere. It’s a real number, and it matters.

But here’s what everyone misses: AI model training happens only once. So what happens after that? Every question you ask ChatGPT, every image you generate on Midjourney, every summary Gemini pulls for you: all of that keeps running, billions of times a day, across millions of users.

And we’re just getting started. A recent study found that the way millions of people actually use AI every day is far more intensive than most people assume.

Inference (the technical term for “AI actually answering you”) now accounts for roughly 60–70% of total AI energy consumption globally. The bigger carbon problem isn’t just how AI was built. It’s also how you’re using it right now.

This article helps you understand AI’s carbon footprint, not just through the dramatic origin story, but through what’s actually happening today and what we can do about it.

Training vs. Inference: The Carbon Lifetime

So What is the Difference Between AI Model Training and Inference, and Why Does It Matter?

What is Training:

Training is when an AI model learns, a bit like a child does. It processes enormous amounts of data (text, images, and code) over weeks or even months, using thousands of specialised Nvidia chips running in parallel. It’s expensive, energy-hungry, and carbon-heavy. But it happens only once. Build the model, and training is done.

What is Inference:

Inference is everything that happens after training: the actual use of an AI model. Every prompt, every image, every “explain this email to me.” Each request costs a small amount of energy, but scale that across hundreds of millions of daily users, and small becomes enormous, fast.

What is carbon debt?

When GPT-3 was trained, it produced approximately 552 tonnes of CO₂. That’s a fixed cost, a debt the model carries from day one. Now imagine that model serves 10 billion queries over its lifetime.

Each query “owes” roughly 0.055 grams just to pay back the training debt, before the energy cost of actually answering your question is even counted.
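The arithmetic behind that figure is easy to check. The sketch below spreads the 552-tonne training cost evenly over the 10 billion lifetime queries imagined above. The function name and the even-split assumption are illustrative, not an established accounting method.

```python
TONNE_IN_GRAMS = 1_000_000


def training_debt_per_query(training_tonnes: float, lifetime_queries: float) -> float:
    """Grams of training CO2 attributed to each query, assuming an even split."""
    if lifetime_queries <= 0:
        raise ValueError("lifetime_queries must be positive")
    return training_tonnes * TONNE_IN_GRAMS / lifetime_queries


# Figures from the text: 552 tonnes of CO2, 10 billion lifetime queries (hypothetical).
debt = training_debt_per_query(552, 10e9)
print(f"{debt:.4f} g CO2 per query")  # 0.0552 g CO2 per query
```

The fewer queries a model serves before it is retired, the larger each query’s share of the training debt becomes.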

“It’s fair to be concerned about the energy consumption, not per query, but in total, because the world is now using so much AI… we need to move towards nuclear or wind and solar very quickly.” – Sam Altman, OpenAI CEO (at the India AI Impact Summit, Feb 2026)

Training is the headline. Inference is the bill that never stops arriving. And as AI gets embedded into search engines, office tools, and smartphones, that bill is getting bigger every month.

Which AI Model Has the Smallest AI Carbon Footprint?

This is a question people ask a lot, but no one has answered it clearly. When we talk about AI’s carbon footprint, we tend to talk as if AI is one thing. It isn’t.

ChatGPT, Gemini, Claude, Midjourney, and DeepSeek differ fundamentally in architecture, hosting infrastructure, and energy sourcing. That means their carbon costs per query differ too.

Here’s the closest thing to a model-by-model comparison of generative AI carbon footprint you’ll find anywhere right now:

| AI Model | CO₂ per Text Query | CO₂ per Image | Notes |
|---|---|---|---|
| ChatGPT (GPT-4o) | 0.3–1.0g CO₂e | N/A | Based on third-party energy estimates |
| Google Gemini | 0.03–0.3g CO₂e | Varies | Lower end due to Google’s renewable sourcing |
| Claude (Anthropic) | 0.2–0.8g CO₂e | N/A | Anthropic doesn’t disclose per-query data |
| Midjourney | Minimal text overhead | 2–10g CO₂e | Image generation is far heavier than text |
| Stable Diffusion (local) | Depends on your grid | Depends on your grid | Self-hosted = your electricity = your carbon |
| OpenAI o1 / o3 (reasoning) | 15–100x more than GPT-4o | N/A | Reasoning chains = far more compute per answer |
| DeepSeek R1 (reasoning) | Lower than OpenAI o1 per token | N/A | More efficient, but still reasoning-heavy |
| Llama 3 (self-hosted) | Entirely depends on host grid | N/A | Open source, your infrastructure = your carbon |

One important caveat: these are estimates built from third-party research, disclosed energy data, and independent modelling. AI companies, with very few exceptions, do not publish official per-query emissions figures.

What this table tells us, even with its imprecision, is that model choice actually matters. DeepSeek R1, for example, made headlines for matching OpenAI o1 performance at a fraction of the compute, which directly translates to a lower per-query carbon cost than OpenAI’s reasoning models.

A standard Gemini text query can produce less than a tenth of the carbon of a ChatGPT reasoning session.

Carbon Cost by Task

It’s not just about which model you use; it’s also about what you ask it to do.

Here’s something the model comparison table above doesn’t fully capture: the task you give an AI matters more than the brand you use.

Think of it like driving. A fuel-efficient hybrid still burns more fuel at 140 km/h than a regular car at 60 km/h. The machine matters, but so does how you use it.

The same logic applies to generative AI’s carbon footprint. Ranked from lowest to highest carbon cost, here’s roughly how different tasks compare:

- Simple text: summarising, answering questions, translating. The lightest workload. Energy equivalent to a few Google searches per session.

- Standard conversation: back-and-forth chatbot use. Slightly heavier, but still relatively modest.

- Code generation: More computing per output than plain text. Not dramatic, but measurably higher.

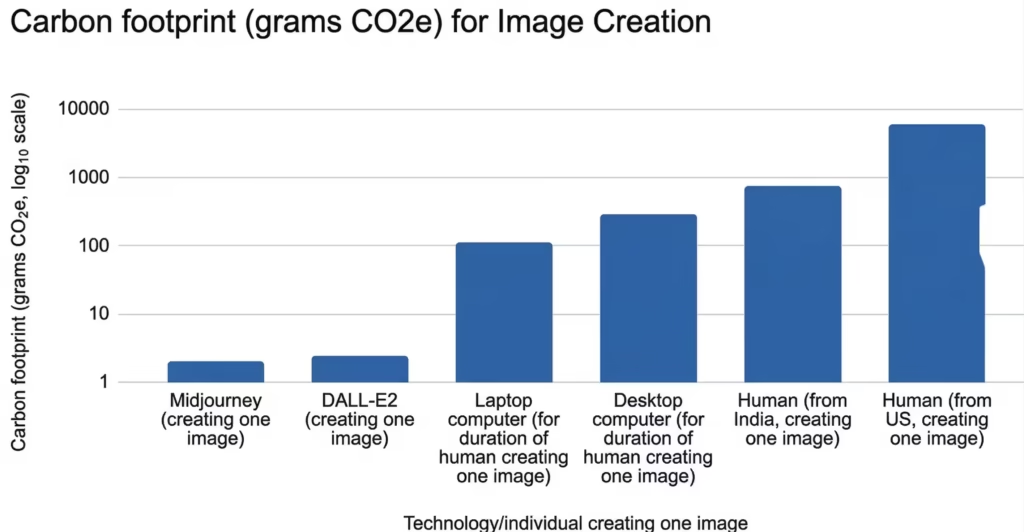

- AI image generation: Midjourney, DALL·E, Stable Diffusion. This is where things jump. Generating one image uses roughly 10–30 times more energy than a text query.

- Reasoning models: OpenAI o1, o3, DeepSeek R1. When a model “thinks through” a problem step by step before answering, it runs dramatically more computation. Estimates put reasoning queries at 50–100 times the energy of a standard text response.

- AI video generation: Sora, Runway, Kling. The heaviest category by far. One short AI-generated video can consume approximately 1 kWh of electricity and emit around 400–500 grams of CO₂, comparable to charging your phone 40 times over.
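The video figures above can be cross-checked with two divisions. This sketch derives the grid carbon intensity and the per-charge phone energy that those numbers imply. Both helper names are hypothetical, and the results are implied values for a sanity check, not independently measured ones.

```python
# Figures quoted in the text for one short AI-generated video.
VIDEO_ENERGY_KWH = 1.0
VIDEO_EMISSIONS_G = (400.0, 500.0)  # low and high estimate, grams of CO2
PHONE_CHARGES = 40


def implied_grid_intensity(emissions_g: float, energy_kwh: float) -> float:
    """Grid carbon intensity (g CO2 per kWh) implied by an emissions/energy pair."""
    return emissions_g / energy_kwh


def implied_wh_per_charge(energy_kwh: float, charges: int) -> float:
    """Energy per phone charge (Wh) implied by the '40 charges' comparison."""
    return energy_kwh * 1000 / charges


low, high = (implied_grid_intensity(g, VIDEO_ENERGY_KWH) for g in VIDEO_EMISSIONS_G)
print(f"Implied grid intensity: {low:.0f}-{high:.0f} g CO2/kWh")
print(f"Implied energy per phone charge: {implied_wh_per_charge(VIDEO_ENERGY_KWH, PHONE_CHARGES):.0f} Wh")
```

In other words, the 400–500 gram figure assumes a grid emitting 400–500 g of CO₂ per kWh; on a cleaner or dirtier grid, the same video would emit proportionally less or more.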

Where the Server Lives Changes Everything

Same Query, Different Carbon, Depending on Where the Server Is

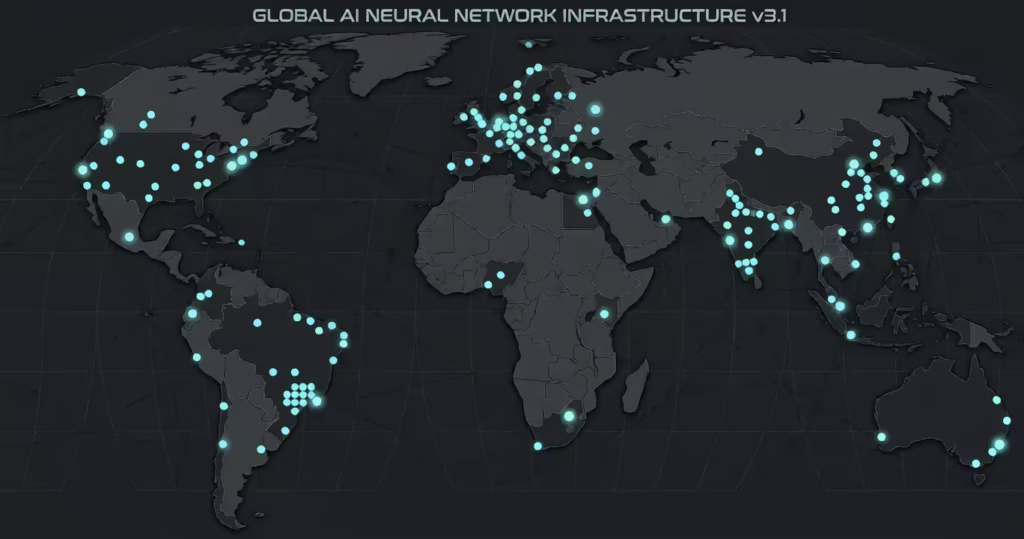

The carbon cost of your ChatGPT query has almost nothing to do with you. It has everything to do with where the data center processing it is located and where its electricity comes from.

The AI model is the same. The computation is the same. But if that server runs on Icelandic geothermal power versus Estonian oil shale, the carbon output of the same query differs by roughly 30 times. Same prompt, thirty times the emissions. That’s not a minor variable; it’s a huge difference.

| Country / Region | Grid Carbon Intensity | What It Means for AI Queries |
|---|---|---|
| Iceland | Very Low (geothermal) | One of the cleanest places on Earth to run AI workloads |
| France | Low (nuclear dominant) | Among Europe’s lowest-carbon AI query destinations |
| Canada (Quebec) | Very Low (hydroelectric) | 30x cleaner than Estonia for identical AI workloads |
| USA (national average) | Moderate | Varies enormously, e.g. Virginia data centers vs. the Pacific Northwest |
| UK / Germany | Low–Moderate | Improving steadily as renewables grow |
| India | High (coal-dominant) | Among the highest per-query carbon for AI users globally |
| Estonia | Very High (oil shale) | Up to 30x more carbon than Quebec for the same AI task |
| China | Mixed | Large AI compute base; coal-heavy but solar growing fast |
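The relationship behind this table is a single multiplication: emissions equal the energy a query uses times the carbon intensity of the grid supplying it. The sketch below illustrates it. The two intensity values and the per-query energy are placeholders chosen only to reproduce the roughly 30x gap described above; they are not measured figures for any country or model.

```python
def query_emissions_g(energy_kwh: float, grid_g_per_kwh: float) -> float:
    """Emissions in grams of CO2: energy used (kWh) x grid intensity (g CO2/kWh)."""
    return energy_kwh * grid_g_per_kwh


# Placeholder values for illustration only, NOT real measurements.
CLEAN_GRID_G_PER_KWH = 20.0    # hypothetical hydro- or geothermal-heavy grid
DIRTY_GRID_G_PER_KWH = 600.0   # hypothetical fossil-heavy grid
ENERGY_PER_QUERY_KWH = 0.001   # hypothetical 1 Wh per query

clean = query_emissions_g(ENERGY_PER_QUERY_KWH, CLEAN_GRID_G_PER_KWH)
dirty = query_emissions_g(ENERGY_PER_QUERY_KWH, DIRTY_GRID_G_PER_KWH)
print(f"clean grid: {clean:.3f} g, dirty grid: {dirty:.3f} g, ratio: {dirty / clean:.0f}x")
```

Because the energy term is identical in both cases, the ratio depends only on the grids, which is why siting decisions matter so much.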

Now, let’s talk about India specifically, because this angle is almost absent from Western AI coverage, and it matters.

India has one of the fastest-growing AI user bases in the world, helped by the sheer size of its population. Hundreds of millions of people use ChatGPT, Gemini, and image generation tools daily.

But India’s electricity grid still runs heavily on coal, roughly 70% thermal generation as of recent figures. That means every AI query processed through infrastructure drawing from Indian power networks carries a meaningfully higher carbon cost.

This isn’t an argument to stop using AI. That would be both impractical and, honestly, a bit patronising. It is context that should shape where companies like Google, Microsoft, and emerging Indian AI firms like Sarvam AI choose to build their data centers, and how aggressively they commit to renewable sourcing when they do.

The scale of this infrastructure push is hard to overstate. OpenAI’s Stargate data center project alone represents one of the largest single commitments to AI compute in history, and where those facilities are built largely determines their carbon profile.

India is simultaneously one of the countries most exposed to climate risk (extreme heat, monsoon disruption, and water stress) and one of the largest contributors to AI’s carbon footprint through its grid mix. This problem deserves more attention than it gets.

The Transparency Problem

Why Real Numbers on AI’s Carbon Footprint Are So Hard to Find, and Who’s Responsible for That?

You might have noticed something while reading this article: a lot of the figures above come with qualifiers like “estimates,” “based on third-party research,” and “companies don’t disclose this.”

That’s not sloppy journalism. That’s the reality of this topic.

In a 2024 assessment of 13 major AI companies, 10 disclosed none of the three key environmental metrics that researchers asked for: energy usage, carbon emissions, and water consumption.

Zero out of three. Only IBM, Writer, and Meta provided meaningful environmental data at all.

These are some of the most powerful, well-resourced companies in the world. They have the data; they choose not to share it.

And the problem runs deeper than just silence. When companies do talk about their environmental commitments, such as “net zero by 2030” or “100% renewable energy,” the language is often carefully constructed to sound better than it is.

Here are a few things worth understanding:

- “Net zero” pledges typically cover Scope 1 and Scope 2 emissions: direct emissions and purchased electricity. The compute powering your actual queries (Scope 3) is rarely included in these commitments.

- “100% renewable energy” often means Renewable Energy Certificates, essentially paying for renewable energy produced somewhere else on the grid, not literally clean electrons flowing into the server processing your prompt.

- Standardised measurement doesn’t exist yet. There is no agreed industry methodology for calculating AI-related emissions. Researchers use different approaches, which is a big part of why estimates vary so much, and why companies can pick whichever number looks most flattering.

This isn’t unique to AI. The tech industry has a long history of sustainability commitments that look impressive in press releases and dissolve under scrutiny.

But AI is scaling so fast, and its energy demands are growing so rapidly, that the lack of transparency is becoming genuinely consequential. Not just for researchers, but for regulators, investors, and anyone trying to make informed choices about the tools they use.

How Can We Reduce AI Carbon Footprint?

Practical Advice That Holds Up, No Guilt Included

Let’s be straight about one thing before getting into tips: individual actions have limited systemic impact. The real levers are infrastructure decisions by AI companies, regulatory pressure for transparency on AI emissions, and smarter data center siting policy.

If you use AI a little less, but Microsoft builds three more coal-powered data centers in Virginia, the net result is not in your favour.

But there are genuine choices available to you that make a small but real difference, especially if you use AI heavily. Here’s what actually works:

- Use standard models for simple tasks. If you’re asking ChatGPT to fix a sentence or summarise a paragraph, you don’t need reasoning mode. Default GPT-4o costs roughly 50–100 times less per query than a full reasoning session. Most people never need the reasoning mode for everyday use.

- Batch your prompts. Five well-constructed prompts will almost always outperform twenty short, iterative ones, both in output quality and energy cost. Take thirty seconds to think before you type. It’s a better habit regardless of carbon.

- Think twice before generating images or videos. If a text explanation does the job, use text. Image generation costs 10–30x more energy than a text query. Video generation is heavier still. This isn’t about never using these tools; it’s about using them when they genuinely add value, not reflexively.

- If you self-host models, your electricity is your carbon. Running Llama 3 or Mistral locally through something like Ollama or LM Studio? Your footprint is determined entirely by your power source. Switching to renewable electricity (like solar) at home drops your AI carbon cost to near-zero, and it’s the single highest-impact individual action available to anyone running local models.
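To see how much the first and third tips can matter, here is a rough estimator built only from the ranges quoted in this article (0.3–1.0 g per text query, 2–10 g per image, reasoning at 50–100 times a text query). The function and the two example days are hypothetical, and the output inherits all the uncertainty of those third-party estimates.

```python
# Per-query ranges in grams of CO2e, taken from the estimates quoted in this article.
TEXT_G = (0.3, 1.0)               # standard text query (low, high)
IMAGE_G = (2.0, 10.0)             # one generated image (low, high)
REASONING_MULTIPLIER = (50, 100)  # reasoning session vs. standard text query


def daily_range_g(text: int, images: int, reasoning: int) -> tuple[float, float]:
    """Return a (low, high) estimate of daily emissions in grams of CO2e."""
    low = (text * TEXT_G[0] + images * IMAGE_G[0]
           + reasoning * TEXT_G[0] * REASONING_MULTIPLIER[0])
    high = (text * TEXT_G[1] + images * IMAGE_G[1]
            + reasoning * TEXT_G[1] * REASONING_MULTIPLIER[1])
    return low, high


# Hypothetical day A: 20 text queries, 2 images, 5 reasoning sessions.
# Hypothetical day B: the same 25 requests, all sent to a standard model.
for label, usage in (("A", (20, 2, 5)), ("B", (25, 2, 0))):
    low, high = daily_range_g(*usage)
    print(f"Day {label}: {low:.1f} to {high:.1f} g CO2e")
```

Under these assumptions, moving five everyday requests out of reasoning mode changes the daily total far more than trimming a handful of text queries would.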

Here’s the summary: you’re not going to fix AI’s carbon footprint by changing your prompting habits.

But you can make meaningfully lower-impact choices without sacrificing much. And the more people understand the difference between a text query and a reasoning session, the harder it becomes for companies to keep scaling recklessly without scrutiny.

Conclusion

The Honest Takeaway is Complicated, But It’s Not Unknowable

AI’s carbon footprint is a range, not a single number, shaped by which model you use, what you ask it to do, where the servers live, and whether the company powering it is being straight with you about any of that.

The GPT-3 training stat that launched a thousand headlines is real, but it’s also the least relevant number for understanding what AI’s environmental cost looks like today.

The more useful frame has three parts: inference is now the dominant cost; reasoning models are a quiet multiplier that most users don’t know they’re activating; and location determines more about your query’s carbon footprint than almost anything else, including which brand you chose.

None of this makes AI a unique villain. Other industries scale recklessly and hide their numbers, too. But AI is moving faster than almost anything we’ve built before, and that speed makes transparency more urgent, not less.

The other side of this story, where AI is being used to actually cut emissions through wildfire detection, methane tracking, and smarter energy grids, is covered in our guide to green AI and how artificial intelligence is fighting climate change.

Google’s response to the energy problem is telling. Sundar Pichai has floated the idea of space-based solar data centers as a long-term fix. The fact that one of the world’s most powerful companies is looking to orbit for a solution says a lot about how serious the ground-level problem actually is.

If you want to understand the full picture, water, land, hardware manufacturing, and how AI’s environmental footprint fits together as a system, we covered all of it in our guide to the environmental impact of AI.

FAQs

1. What is the carbon footprint of AI?

AI’s carbon footprint varies by model, task, and location. Research estimates that at current growth rates, AI could emit 24 to 44 million metric tons of CO₂ annually by 2030, equivalent to adding 5 to 10 million extra cars to US roads. Training GPT-3 alone produced over 552 tonnes of CO₂, and inference now accounts for 60–70% of total AI energy use globally.

2. How much CO₂ does ChatGPT produce per query?

A standard ChatGPT query produces approximately 0.3 to 1.0 grams of CO₂ equivalent. Using reasoning modes like o1 or o3 can push that figure 50 to 100 times higher. These are third-party estimates; OpenAI does not publish official per-query emissions data.

3. How much CO₂ does an AI image produce?

Generating one AI image produces roughly 2 to 10 grams of CO₂, about 10 to 30 times more than a text query. AI video generation is heavier still, with one short generated clip consuming approximately 1 kWh of electricity and emitting around 400 to 500 grams of CO₂.

4. Is AI damaging the environment?

Yes, though it’s not straightforward. AI data centers consume large amounts of electricity, much still sourced from fossil fuels, and require significant water for cooling.

5. Does AI use a lot of electricity?

Yes. AI-specific servers in US data centers are estimated to have consumed between 53 and 76 terawatt-hours in 2024, enough at the high end to power over 7 million US homes for a year. A single ChatGPT query uses roughly 3 to 10 times more electricity than a Google search, and global data center electricity consumption is expected to double by 2030.

6. Is AI actually bad for the environment?

Yes, but it depends. AI on renewable energy, using efficient models, for purposeful tasks, carries a small footprint. AI on coal-heavy grids, using reasoning models for simple tasks, at massive scale, is a real problem. The bigger concern is that AI is growing faster than clean energy infrastructure, and in a 2024 assessment, 10 of 13 major AI companies disclosed none of their key environmental metrics.

Jigar Chaudhary is the Editor-in-Chief at UrviumAI, where he oversees coverage of artificial intelligence news, tools, and in-depth studies. With over 5 years of experience analyzing AI and robotics, he focuses on maintaining high editorial standards, accurate reporting, and clear explanations to help readers understand how AI is shaping the future.
