Anthropic Accidentally Leaks ‘Claude Mythos’ 🕵️‍♂️

The AI industry just experienced a massive, accidental reveal. Anthropic reportedly left draft blog posts detailing a brand-new, unreleased AI model tier called “Claude Mythos” (also referred to by the codename Capybara) in a publicly accessible cache, where the documents were discovered.

Here is what the leaked documents revealed about the new frontier model:

- A New Tier: Mythos/Capybara is positioned as an entirely new tier above the current flagship Opus models, with the company internally describing it as “the most capable we’ve built to date.”

- Massive Performance: The model reportedly achieves dramatically higher scores than Opus 4.6 on tests of software coding, academic reasoning, and cybersecurity.

- The Danger: Anthropic’s own drafts warn that Mythos carries “unprecedented cybersecurity risks,” noting that its ability to find and exploit software weaknesses is far ahead of any other model.

- The Rollout: Because of these safety concerns and the very high compute cost of running it, Anthropic is expected to restrict the initial release to a small group of cybersecurity experts for defensive testing.

Why it matters: This leak signals that frontier labs are building models they consider too dangerous to release to the general public. Anthropic’s own admission that Mythos could spark a wave of exploits that outpace human defenders suggests that the era of simply dropping the most powerful model onto a public web interface is ending; the most advanced AI is now being treated like classified weaponry.

UrviumAI Take: The gap between public AI and private AI is widening. Stop assuming that the AI models available on consumer web interfaces (like ChatGPT or Claude.ai) represent the absolute bleeding edge of the technology. As the capabilities of models like Mythos cross the threshold into active cybersecurity threats, labs will increasingly hoard their best models behind closed doors, releasing them only to governments and elite enterprise partners.

Meta’s TRIBE v2 Simulates Brain Scans with AI 🧠

One of neuroscience research’s most expensive, time-consuming bottlenecks is being digitized. Meta has open-sourced TRIBE v2, an AI foundation model that simulates human brain activity and often predicts population-level responses better than real-world fMRI scans.

Here is how Meta is revolutionizing “in-silico” brain research:

- Massive Scale: Unlike its predecessor, which used data from just 4 volunteers, TRIBE v2 was trained on over 1,000 hours of fMRI data from 700+ subjects, expanding its mapping from 1,000 to 70,000 distinct brain regions.

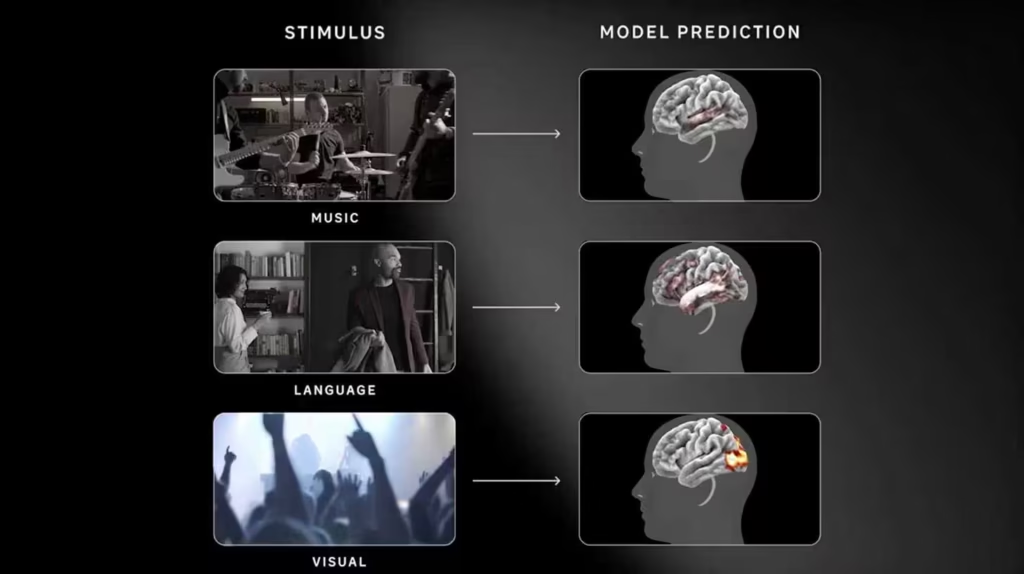

- Tri-Modal Prediction: The model predicts high-resolution neural responses to three types of stimuli: vision (video), hearing (audio), and language (text).

- Outperforming Reality: TRIBE v2’s synthetic predictions matched population-level brain activity better than many actual human scans, because they are free of the physiological noise (heartbeats, head movement) that usually clouds real fMRI recordings.

- Open Source: Meta released the code, weights, and a live demo, allowing global researchers to run virtual brain experiments in seconds without needing a physical scanner.

Why it matters: For decades, testing a neurological hypothesis required recruiting volunteers, paying for expensive scanner time, and dealing with messy biological data. TRIBE v2 effectively acts as a digital twin of the human brain. By letting researchers run virtual neuroscience experiments in software, Meta could dramatically accelerate our understanding of human cognition and lay the groundwork for advanced brain-computer interfaces.

UrviumAI Take: Digital twins are moving from machines to biology. The open-sourcing of models like TRIBE v2 means that deep biological research is no longer restricted to massively funded university labs. If you work in health tech, software design, or accessibility, you can now use these models to simulate how a human brain is likely to react to your visual or audio stimuli, opening the door to unprecedented personalization in product development.

Anthropic Wins Injunction Against Pentagon Blacklisting ⚖️

The legal war between a safety-focused Silicon Valley AI lab and the U.S. military just saw its first major judicial intervention. A federal judge in California has granted Anthropic a preliminary injunction, temporarily barring the Pentagon from officially designating the AI startup a national security threat.

Here is the breakdown of the court’s decisive ruling:

- The Injunction: U.S. District Judge Rita Lin blocked the implementation of the Pentagon’s March 3 letter that officially branded Anthropic a “supply chain risk” and halted the enforcement of President Trump’s February 27 directive to phase out the company’s technology.

- The Judge’s Rebuke: In a scathing 43-page ruling, Judge Lin called the Pentagon’s actions “Orwellian” and stated that the supply chain risk designation was “likely both contrary to law and arbitrary and capricious.”

- First Amendment Retaliation: The judge noted that the Department of Defense’s own records showed it had punished Anthropic primarily because of the company’s “hostile manner through the press,” which she categorized as “classic illegal First Amendment retaliation.”

- The Impact: Although the government has a week to appeal, the injunction temporarily restores Anthropic’s ability to contract with federal agencies and shields the company from the devastating reputational damage of being labeled a saboteur.

Why it matters: This is a monumental early victory for Anthropic. The Pentagon attempted to use its ultimate trump card, the “national security risk” label usually reserved for foreign adversaries like Huawei, to punish an American company over a contract dispute and public disagreements about AI safety. The judge’s ruling firmly rejects the government’s attempt to weaponize national security statutes to crush domestic corporate dissent.

UrviumAI Take: The government cannot use national security as a blank check for retaliation. If you are an enterprise tech leader, this ruling is a massive relief. Although it is a preliminary ruling rather than binding precedent, it signals that courts may not allow federal agencies to arbitrarily blacklist your company and destroy your business simply because you refuse to hand over unconditional access to your proprietary technology or speak out against aggressive government contracting demands.

Other AI News Today:

- English Wikipedia has officially banned the use of AI large language models to write or rewrite article content, allowing only narrow exceptions for copyediting and translation.

- Google launched a large wave of AI updates, including Gemini 3.1 Flash Live, memory import tools, and the TurboQuant compression algorithm.

- OpenAI has surpassed a $100 million annualized revenue run rate from its ChatGPT ads pilot just six weeks after launch, signaling a massive pivot in monetization.

- OpenAI has indefinitely shelved plans to introduce an “adult mode” into ChatGPT, shifting focus away from side projects to prioritize core enterprise features.

- Apple plans to open the Siri interface in iOS 27 to allow users to route voice queries to rival AI models, ending OpenAI’s exclusive integration deal.

Jigar Chaudhary is the Editor-in-Chief at UrviumAI, where he oversees coverage of artificial intelligence news, tools, and in-depth studies. With over 5 years of experience analyzing AI and robotics, he focuses on maintaining high editorial standards, accurate reporting, and clear explanations to help readers understand how AI is shaping the future.