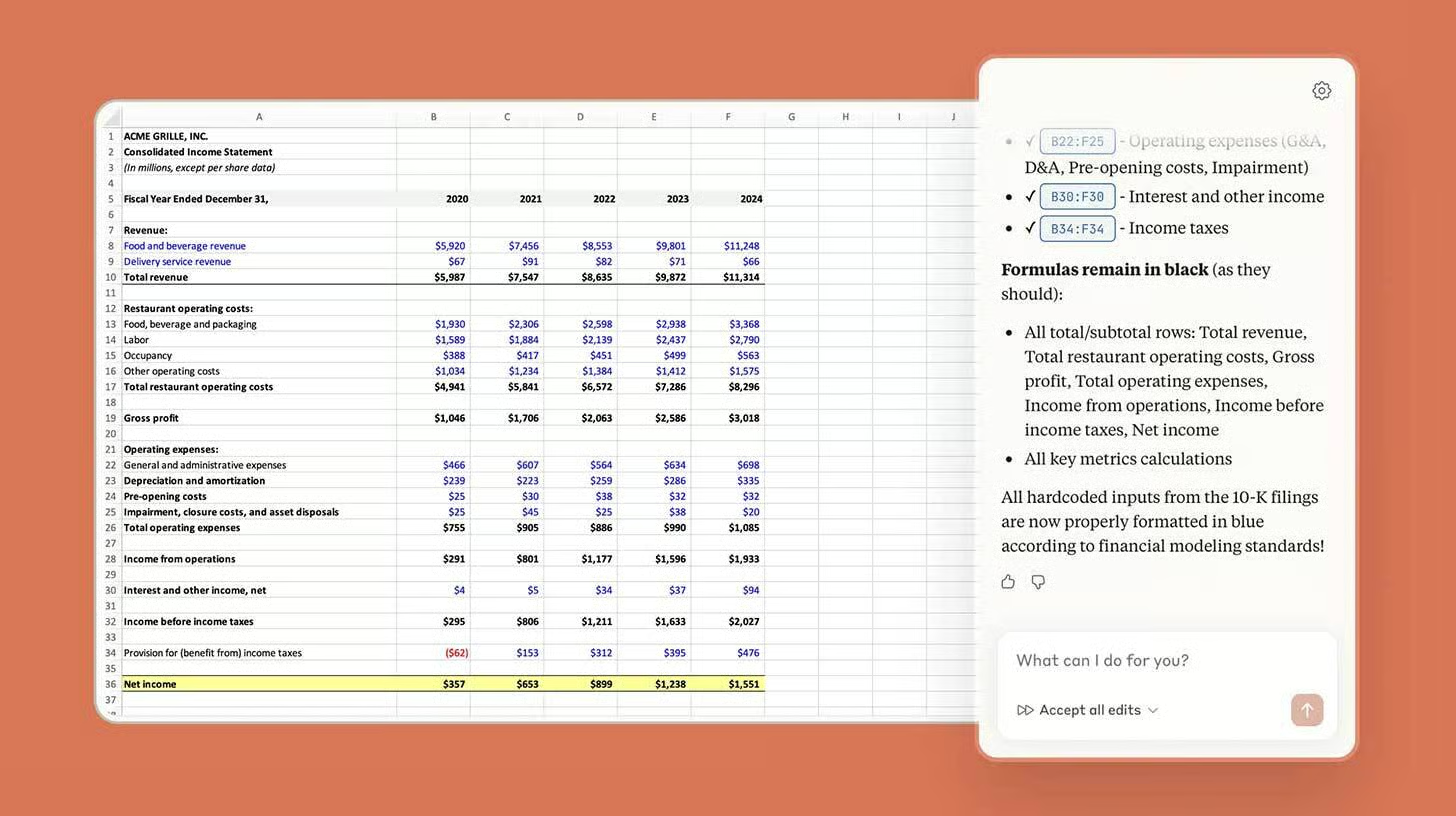

Anthropic Brings Claude Directly into Excel

Anthropic just released Claude for Excel in beta, letting users interact with the AI assistant through a sidebar that can read, analyze, and modify spreadsheets. The company is also bringing serious firepower to the financial industry.

The details:

- The integration allows Claude to explain spreadsheets, fix formulas, populate templates with new data, or build new workbooks from scratch

- Seven new connectors link Claude to financial platforms like Aiera for earnings call transcripts, LSEG for live market data, and Moody’s for credit ratings

- Anthropic also debuted several new finance-specific Agent Skills, including Skills for cash flow modelling, company analysis, coverage reports, and more

- Claude for Excel and the new Skills are rolling out as a research preview to Max, Enterprise, and Team subscribers before a broader release

Why it matters: AI for spreadsheets has been a hot topic over the last few months, with ChatGPT, Copilot, and now Claude all pushing solutions. While the others have been more agent-focused, Claude’s native Excel access and financial connectors may bring new reliability to an area still waiting for a true AI breakthrough.

The finance-specific Skills give Claude an immediate edge for Wall Street analysts, investment bankers, and financial professionals who live in spreadsheets. If Claude can genuinely automate complex financial modelling and pull live market data seamlessly, it could become indispensable for the finance industry. However, the research preview phase will be critical—Excel users are notoriously particular about reliability, and any errors could quickly undermine adoption.

OpenAI Offers Free ChatGPT Go to All Indians

OpenAI is giving users in India a big Diwali-style gift: one year of free access to ChatGPT Go, starting November 4. The offer comes just before the company’s first-ever DevDay Exchange event in Bengaluru, showing how serious OpenAI is about its growing Indian user base.

The details:

- From November 4, users in India, both new users and existing ChatGPT Go subscribers, can get a free one-year ChatGPT Go subscription

- Existing subscribers will automatically get an extra 12 months added to their plan

- The company announced the offer on October 28 in response to free plans from Google and Perplexity

- ChatGPT Go includes higher message limits, image generation, and file uploads, and currently costs ₹399/month (₹4,788 per year)

- New users can sign up at chat.openai.com or via the mobile app; existing users get automatic extensions

Why it matters: OpenAI just declared India its second-largest ChatGPT market globally, and this free offer proves they’re willing to invest heavily to maintain that position. The timing is strategic: Google made its AI Pro membership (₹19,500 annually) free for students, while Perplexity partnered with Airtel for free premium access. OpenAI’s response? Give it to EVERYONE for free. This is an AI land grab.

With millions of Indian students, developers, and businesses getting premium AI access for free, OpenAI is building long-term loyalty before competitors can establish footholds. The DevDay Exchange event in Bengaluru signals that India isn’t just a market; it’s becoming central to OpenAI’s global strategy. Free access also democratizes AI across tier-2 and tier-3 cities, potentially sparking innovation in smaller towns. But the economics are interesting: OpenAI is essentially giving away ₹4,788 per user to compete. That’s expensive customer acquisition, a bet on future monetization through upgrades, enterprise sales, and ecosystem lock-in.

Qualcomm Shares Soar 20% on New AI Chip Launch

Shares in US chipmaker Qualcomm skyrocketed on Monday after the company unveiled two new artificial intelligence processors designed for data centres, marking a major push into a market dominated by rivals Nvidia and AMD.

The details:

- Qualcomm announced the AI200 and AI250 chips optimized for AI inference—the process of running already-trained AI models to generate responses

- The AI200 (commercial release 2026) offers 768GB of memory per card and uses direct liquid cooling to manage heat in densely packed server racks, with a full rack consuming up to 160 kilowatts

- The AI250 (expected 2027) promises 10x more effective memory bandwidth than current products while consuming less power

- Shares in Qualcomm were up as much as 20% in Monday trading on Wall Street

- The San Diego-based company, best known for smartphone and PC processors, is venturing into new data centre territory

Why it matters: Qualcomm just crashed Nvidia’s AI chip party, and Wall Street loved it. The 20% stock surge shows investors are hungry for ANY credible alternative to Nvidia’s near-monopoly on AI chips. But here’s the catch: Qualcomm is focusing on inference (running AI models) rather than training (building them), which is smart positioning against Nvidia’s training dominance but also a narrower market.

The AI200’s 768GB of memory per card and liquid cooling for 160-kilowatt racks show Qualcomm understands data center requirements. The AI250’s claimed 10x memory bandwidth improvement could be game-changing for large language model deployments, if delivered. Timing matters: with releases slated for 2026 and 2027, Qualcomm is playing catch-up while Nvidia and AMD continue iterating. There’s also the AI bubble question: AMD’s shares rose 35% on an OpenAI partnership announcement alone, a move reminiscent of dot-com-era hype.

But the massive infrastructure buildout for AI isn’t slowing down, and data centers need alternatives to avoid single-vendor lock-in. Qualcomm’s smartphone and PC chip expertise gives it credibility, but succeeding in data centers requires different go-to-market strategies, customer relationships, and support infrastructure. If it executes well, Qualcomm could capture meaningful share of the inference market, especially if it undercuts Nvidia and AMD on price while delivering comparable performance.


Jigar Chaudhary is the Editor-in-Chief at UrviumAI, where he oversees coverage of artificial intelligence news, tools, and in-depth studies. With over 5 years of experience analyzing AI and robotics, he focuses on maintaining high editorial standards, accurate reporting, and clear explanations to help readers understand how AI is shaping the future.