When we talk about the environmental impact of AI, we usually point to one thing: energy. Sometimes carbon. Occasionally water. Almost never all of them together.

AI doesn’t affect the environment in one straight line. It behaves more like a system: messy, layered, and interconnected. Pull one thread, and a few others move with it.

AI’s Environmental Footprint as a System

When we type a prompt into ChatGPT or ask an image tool like Nano Banana to generate something flashy, it feels weightless, just text on a screen.

But behind that simple action sits a long physical chain: electricity flowing through servers, cooling systems pulling heat away, water evaporating or circulating, hardware wearing down, and minerals that were mined years ago to make the chips even possible.

This growing physical footprint is closely tied to projects like OpenAI’s Stargate infrastructure push, which shows how large-scale AI systems now depend on massive real-world facilities.

I think instead of asking, “Is AI bad for the environment?” the better question is:

Where does AI impact the environment, and how often does it do so?

We can break AI’s environmental impact into a few overlapping layers:

- Energy: the electricity needed to train and run models

- Air: emissions created when that electricity comes from fossil fuels

- Water: used to cool data centers and produce electricity

- Land: taken up by data centers and supporting infrastructure

- Materials: mined and processed to build AI hardware

None of these operates alone. For example, a data center powered by renewable energy may cut emissions, but still strain local water supplies. Another may use little water, but rely heavily on coal-powered grids. The trade-offs are real, and they vary by region.

Energy: AI Needs So Much Power

If AI had a heartbeat, it would be electricity.

Everything AI does, from learning to answering to generating, depends on power. A lot of it. And this isn’t a side effect or a poor design choice. It’s baked into how modern AI works.

Here’s the thing we miss: AI doesn’t just run once and stop. It keeps running. All the time.

AI Energy Consumption

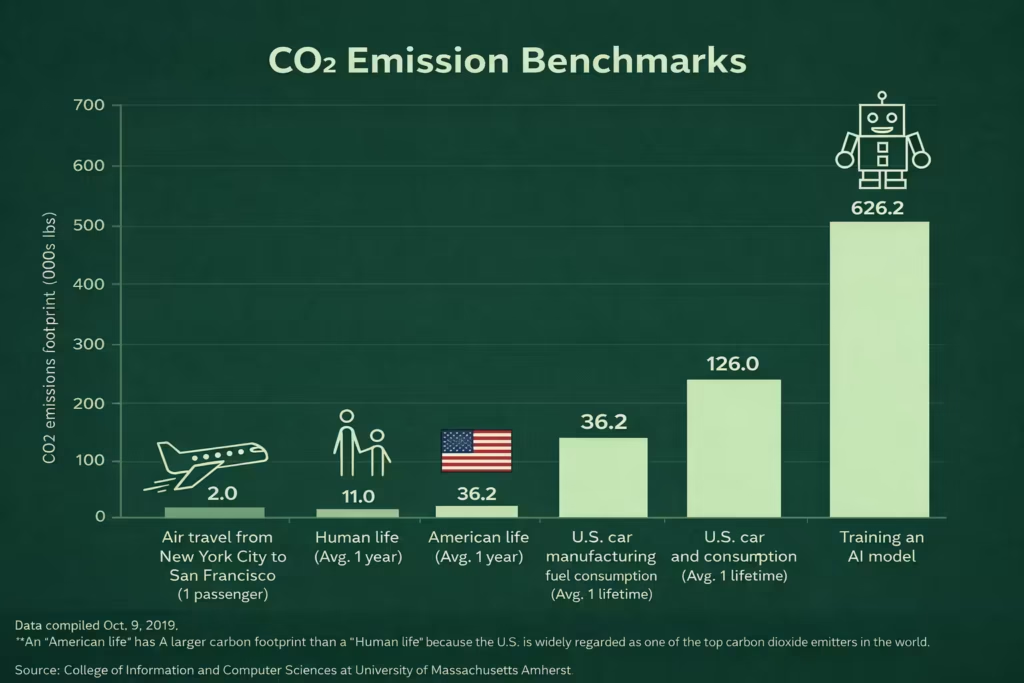

Modern AI models, especially large ones, learn by processing enormous amounts of data over and over again. This phase is called training. During training, thousands of specialized chips (mostly Nvidia GPUs) work in parallel, performing trillions of calculations every second.

That dependence on high-performance chips has strengthened companies like Nvidia, whose central role in powering modern AI systems shows in its massive AI chip deals.

To put this into perspective:

- Training a single large language model can consume millions of kilowatt-hours of electricity

- Training GPT-3 is estimated to have used over 1,200 megawatt-hours of electricity, roughly the annual electricity use of more than a hundred average U.S. homes
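The household comparison above can be checked with simple arithmetic. The sketch below is only a rough check: it takes the 1,200 MWh training figure quoted above and assumes an average U.S. home uses about 10,500 kWh per year. That household figure is an illustrative round number, not something stated in this article, and the function name is made up for the example.

```python
# Rough check of the "homes per year" comparison.
# ASSUMPTION: an average U.S. home uses about 10,500 kWh of electricity per year
# (an illustrative round figure, not taken from this article).
AVG_US_HOME_KWH_PER_YEAR = 10_500


def homes_equivalent(training_mwh: float,
                     home_kwh_per_year: float = AVG_US_HOME_KWH_PER_YEAR) -> float:
    """Return how many homes' annual electricity use equals the training energy."""
    training_kwh = training_mwh * 1_000  # 1 MWh = 1,000 kWh
    return training_kwh / home_kwh_per_year


if __name__ == "__main__":
    homes = homes_equivalent(1_200)
    print(f"1,200 MWh is about {homes:.0f} home-years of electricity")
```

Under that assumption the result lands a little above one hundred homes. A different household average would shift the number, which is why comparisons like this are best read as orders of magnitude.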

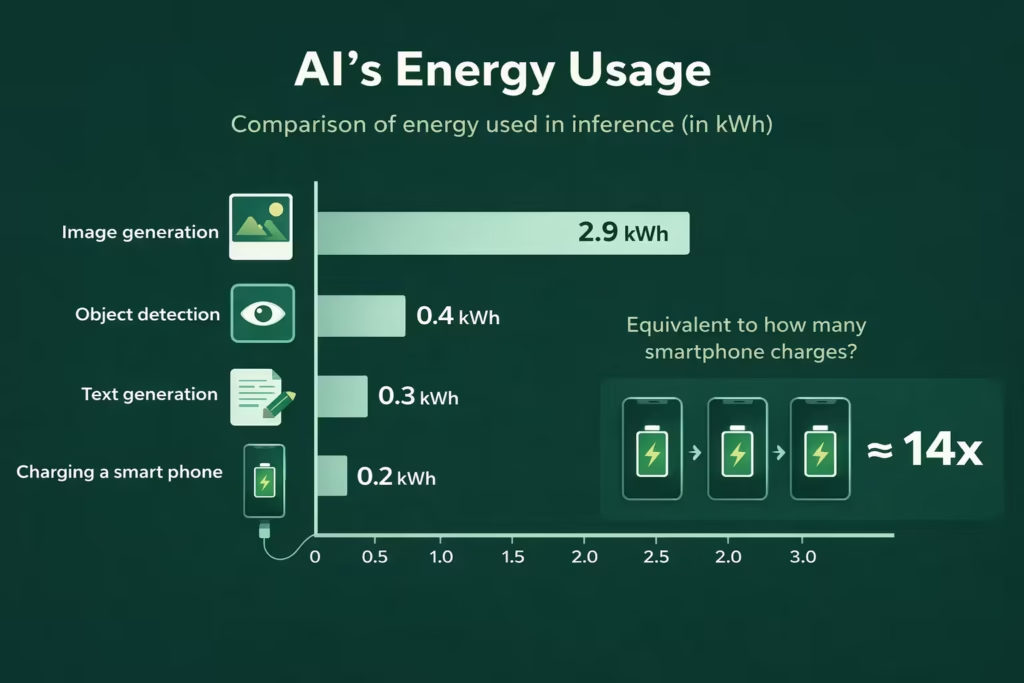

And that’s just training. Once a model is deployed, it enters the inference phase: answering questions, generating images, and powering search results. Each request uses a small amount of energy. But scale changes the picture.

When millions, sometimes billions, of people use AI tools daily, those “tiny” energy costs stack up fast.
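To see how quickly small per-request costs add up, here is a minimal sketch. The per-request energy value (0.3 Wh) and the request volumes are hypothetical placeholders chosen for illustration, not measurements of any real service.

```python
# How tiny per-request energy costs scale with usage.
# ASSUMPTION: 0.3 Wh per request and the request volumes below are
# hypothetical placeholders, not measured values.
def daily_inference_energy_kwh(requests_per_day: int, wh_per_request: float) -> float:
    """Total daily inference energy in kWh for a given request volume."""
    return requests_per_day * wh_per_request / 1_000  # Wh -> kWh


if __name__ == "__main__":
    for requests in (1_000_000, 100_000_000, 1_000_000_000):
        kwh = daily_inference_energy_kwh(requests, wh_per_request=0.3)
        print(f"{requests:>13,} requests/day -> {kwh:>10,.0f} kWh/day")
```

The relationship is linear, so a thousand times more requests means a thousand times more energy. Nothing about a single request changes; only the count does.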

Always On, Always Running

Traditional software waits for you. AI doesn’t.

Many AI systems are designed to be:

- Always available

- Low-latency (responses in milliseconds)

- Globally accessible

That means data centers running 24/7, even when demand dips. Servers don’t nap. Cooling systems don’t slow down just because it’s midnight somewhere.

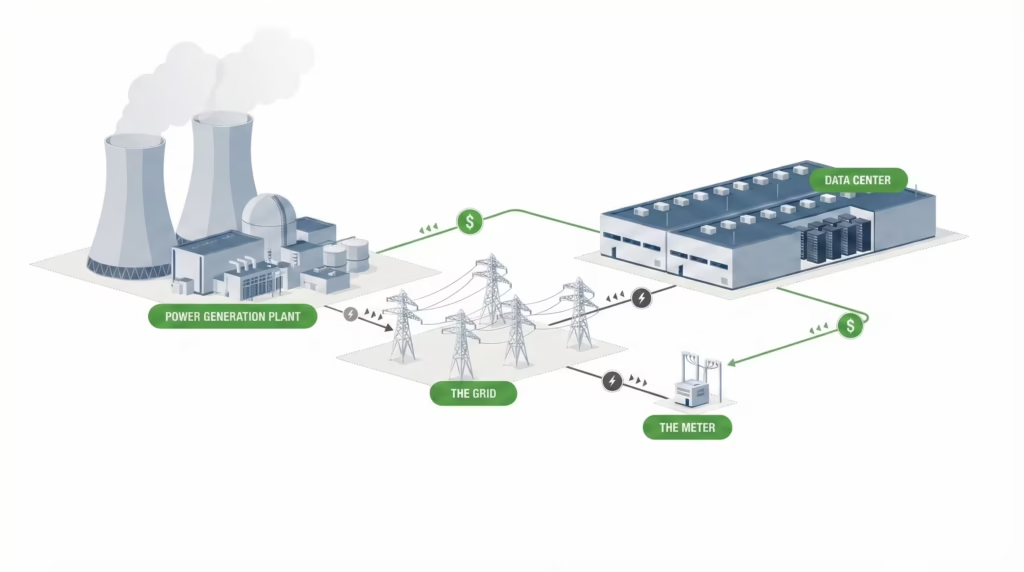

From Power Demand to Grid Pressure

In the United States, data centers already account for about 2–3% of total electricity consumption. As AI use grows, some projections suggest this share could double by the end of the decade if current trends continue.

The International Energy Agency reports that global data center electricity demand is now comparable to that of entire mid-sized countries.

Utilities in regions with heavy data center construction, like Virginia, Texas, and parts of the Midwest, are now planning grid upgrades largely because of AI-driven demand. Similar pressures are emerging in Ireland, the Netherlands, and parts of Asia.

This creates a quiet competition:

- Homes need electricity

- Factories need electricity

- Cities need electricity

- And now, AI needs a lot of electricity too

When supply doesn’t meet demand, grids lean on whatever sources are available. Often, that means fossil fuels.


Air: AI Turns Electricity Into Emissions

AI systems don’t care whether electricity comes from wind, solar, gas, or coal. They just need it to be reliable and constant.

Globally, around 60% of electricity still comes from fossil fuels, according to international energy agencies. That means a large share of the power feeding AI data centers still produces carbon dioxide and other greenhouse gases.

So when an AI model runs, whether it’s answering a question or generating an image, it is indirectly responsible for:

- Carbon dioxide (CO₂)

- Nitrogen oxides

- Particulate pollution

AI Carbon Footprint: It Depends Where the Server Lives

The carbon footprint of AI varies by location.

- An AI query processed in a data center powered mostly by renewables can have a relatively low emissions impact.

- The same query, processed in a region dependent on coal or natural gas, can produce several times more emissions.

This is why researchers often avoid giving a single number for “AI’s carbon footprint.” There isn’t one. It changes by geography, time of day, and grid mix.
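The location effect comes down to one multiplication: energy used times the carbon intensity of the grid supplying it. The sketch below illustrates that with three made-up grid profiles. The intensity values are illustrative round numbers, not measurements of any specific grid, and the workload size is hypothetical.

```python
# Same workload, different grids: emissions = energy x grid carbon intensity.
# ASSUMPTION: the intensities below (grams of CO2 per kWh) are illustrative
# round numbers, not measurements of any specific grid.
GRID_INTENSITY_G_PER_KWH = {
    "mostly_renewable": 50,
    "gas_heavy": 400,
    "coal_heavy": 800,
}


def emissions_kg(energy_kwh: float, grid: str) -> float:
    """CO2 emissions in kilograms for a workload run on the named grid."""
    return energy_kwh * GRID_INTENSITY_G_PER_KWH[grid] / 1_000  # g -> kg


if __name__ == "__main__":
    workload_kwh = 1_000  # hypothetical workload
    for grid in GRID_INTENSITY_G_PER_KWH:
        print(f"{grid:>17}: {emissions_kg(workload_kwh, grid):,.0f} kg CO2")
```

In practice grid intensity also changes hour by hour, so a fuller model would look up the intensity at the time the workload actually ran rather than use one fixed number per region.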

Training large AI models can produce hundreds of tons of CO₂ emissions, depending on energy sources. That’s comparable to the lifetime emissions of dozens of cars, sometimes more. Again, that’s just training, not years of everyday use.

Inference: The Quiet Emissions Multiplier

Every AI response triggers:

- Compute operations

- Power draw

- Heat generation

- Cooling activity

One request doesn’t matter much. Ten million per day does.

As AI tools get integrated into search engines, office software, customer support, and devices, the number of daily AI-powered interactions keeps climbing. That’s why some researchers warn that AI-related emissions could grow faster than efficiency gains reduce them, a classic rebound effect.

Backup Power and the “Hidden Emissions” Problem

Large data centers rely on diesel generators as backup power. They don’t run often, but when they do, during outages or grid instability, they emit concentrated bursts of pollution.

Local communities near data centers have raised concerns about:

- Air quality during testing and emergency use

- Increased exposure to diesel exhaust

- Lack of transparency around emissions reporting

These emissions don’t always show up in neat global carbon statistics, but they matter locally.

How is AI bad for the environment?

AI can increase emissions. But it can also shift emissions, depending on how it’s deployed. A well-placed, efficiently powered data center can be cleaner than thousands of smaller ones.

The real issue isn’t that AI exists. It’s that AI is scaling faster than clean energy infrastructure in many regions.

AI Water Consumption: The Hidden Cost Behind AI Cooling

AI runs on servers. Servers generate heat. A lot of it.

If that heat isn’t removed quickly, systems fail. Chips degrade. Entire data centers can shut down. So cooling isn’t optional. It’s survival.

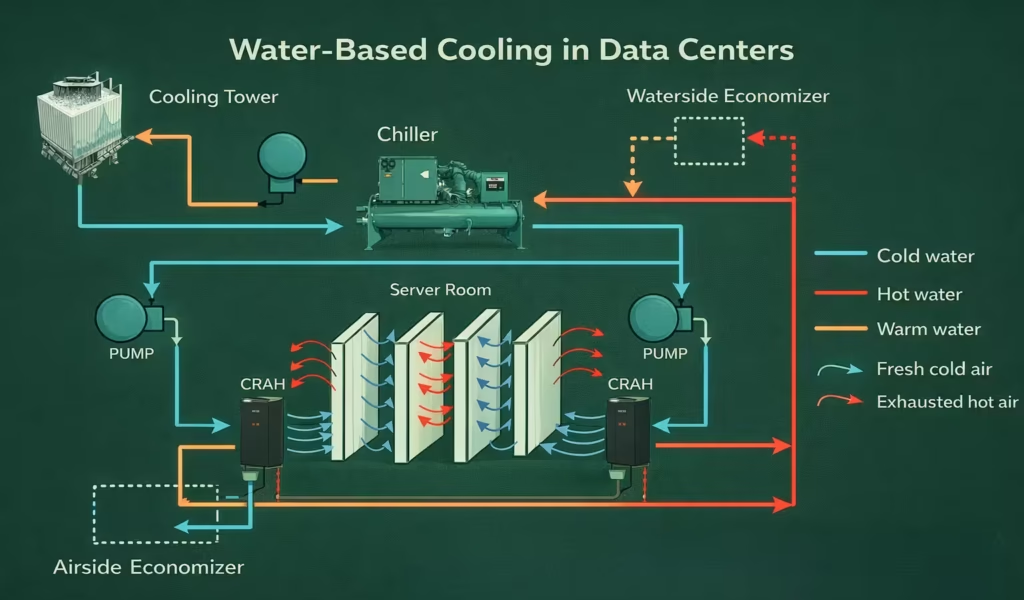

There are two main ways data centers stay cool:

- Air-based cooling, which still uses electricity (ACs, fans)

- Water-based cooling, which is far more efficient for dense AI workloads

How Much Water Are We Talking About?

Exact numbers are hard to know because companies rarely disclose them fully. But independent researchers have provided estimates that help us understand scale.

Some studies suggest that:

- Training a large AI model can consume millions of liters of water, depending on location and cooling method

- A single AI-generated response can indirectly use a few milliliters of water, which sounds tiny until you multiply it by millions of daily users

A data center in a cool, wet region may use far less water than one in a hot, dry area. Yet many data centers are built where land and electricity are cheap, not where water is abundant.

The Local Water Problem

In several regions, especially parts of the United States, Europe, and Asia, new AI-focused data centers are being built at a rapid pace in water-stressed areas. Local governments often approve them because they bring jobs and investment. But residents pay a price.

Communities have raised concerns about:

- Strain on local water supplies

- Increased competition between data centers and agriculture

- Lack of transparency about water usage

In drought-prone regions, even modest increases in industrial water demand can matter. And unlike energy, water can’t be moved easily across long distances.

Indirect Water Use: The Invisible Part

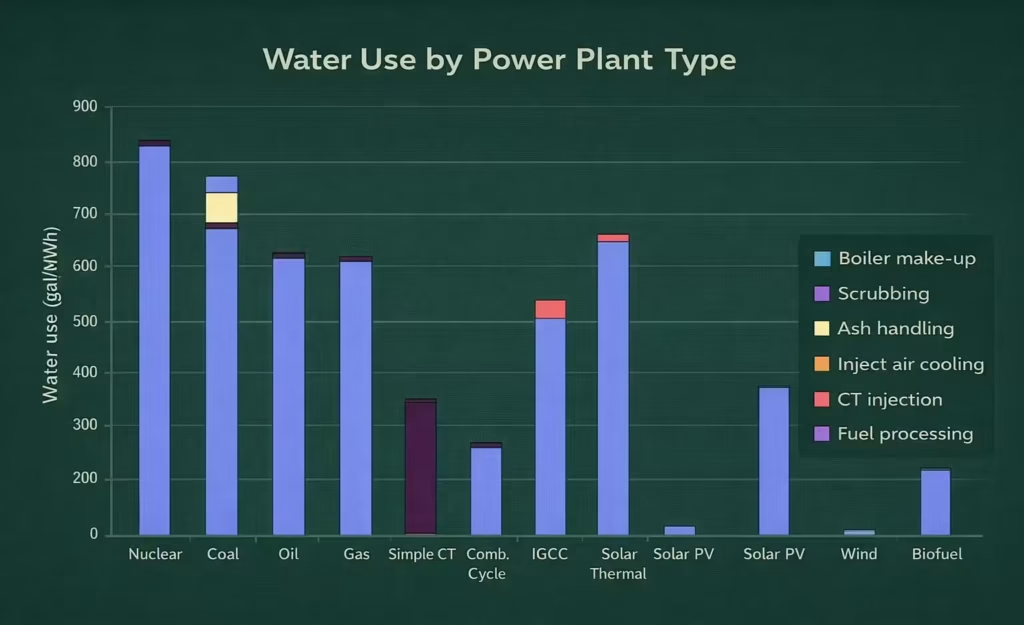

Even if a data center claims it uses “minimal water,” it may still rely on electricity from power plants that consume large amounts of water for cooling, especially coal, gas, and nuclear plants.

This is why researchers talk about “water footprint”, not just water consumption on-site. It includes everything required to keep the system running, end to end.
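One way to picture the difference between on-site consumption and a full water footprint is to add the two parts explicitly. The sketch below is a simplified illustration: the liters-per-kWh rates are hypothetical numbers picked to make the point, and the class and function names are invented for the example.

```python
from dataclasses import dataclass


@dataclass
class WaterFootprint:
    onsite_liters: float   # water consumed by the data center's own cooling
    offsite_liters: float  # water consumed generating the electricity it buys

    @property
    def total_liters(self) -> float:
        """End-to-end footprint: on-site plus off-site water."""
        return self.onsite_liters + self.offsite_liters


def water_footprint(energy_kwh: float,
                    onsite_l_per_kwh: float,
                    offsite_l_per_kwh: float) -> WaterFootprint:
    """Split a workload's water use into on-site and off-site parts."""
    return WaterFootprint(
        onsite_liters=energy_kwh * onsite_l_per_kwh,
        offsite_liters=energy_kwh * offsite_l_per_kwh,
    )


if __name__ == "__main__":
    # ASSUMPTION: hypothetical rates for a site with "minimal" on-site water use
    # that buys power from water-cooled plants.
    fp = water_footprint(energy_kwh=1_000, onsite_l_per_kwh=0.2, offsite_l_per_kwh=2.0)
    print(f"on-site: {fp.onsite_liters:,.0f} L, off-site: {fp.offsite_liters:,.0f} L, "
          f"total: {fp.total_liters:,.0f} L")
```

With these made-up rates, the off-site share is far larger than the on-site share, which is the situation a "minimal water" claim can hide.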

Efficiency Helps But Doesn’t Solve Everything

To be fair, the industry isn’t ignoring this.

Many operators are:

- Improving cooling efficiency

- Recycling water

- Using non-potable or reclaimed water

These steps help. They really do. But they don’t eliminate the core issue: AI demand is growing faster than water-saving improvements.

Land: Data Centers, Space, and Local Ecosystems

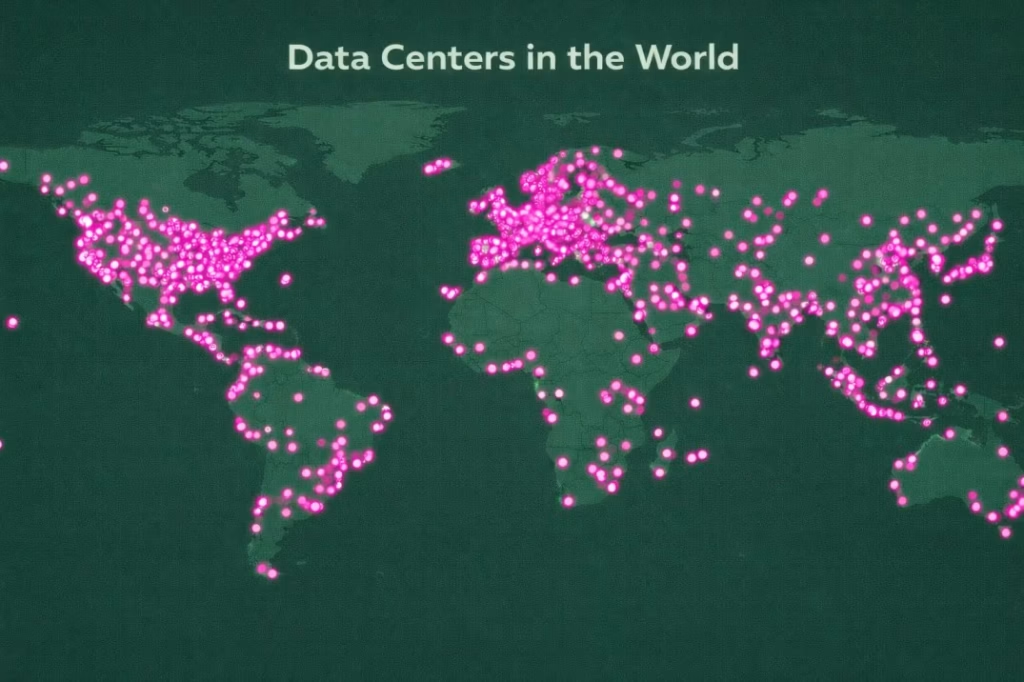

Behind every AI system sits a growing network of data centers, massive physical facilities that need land, access roads, power lines, water connections, and security buffers.

Modern AI-focused data centers are often described as “warehouses,” but that undersells it. These facilities can span dozens of acres, with multiple buildings packed with servers, cooling equipment, backup generators, and electrical infrastructure.

As demand rises, companies don’t just upgrade existing sites. They build new ones. Fast.

This expansion changes how land is used:

- Farmland becomes industrial space

- Semi-rural areas turn into infrastructure hubs

- Natural habitats get fragmented or removed

The land itself isn’t always “destroyed,” but its function changes permanently.

Local Environmental Effects Add Up

Communities near large data centers often report:

- Increased noise from cooling systems and generators

- Heat release into surrounding areas

- More traffic from construction and maintenance

There’s also something called the urban heat island effect. Large clusters of buildings, servers, and paved surfaces can raise local temperatures slightly. It’s subtle, but in hot climates, every degree matters.

And then there’s biodiversity.

When land is cleared for construction, local ecosystems lose continuity. Plants, insects, and small animals don’t adapt as quickly as digital infrastructure does.

Why Location Choices Matter

Here’s where things get complicated. Data centers are often built where:

- Land is cheap

- Electricity is abundant

- Regulations are favorable

Environmental sensitivity isn’t always the top factor. That’s why you sometimes see large AI facilities placed near:

- Agricultural regions

- Water-scarce zones

- Previously undeveloped land

From a business perspective, this makes sense. From an environmental one, it creates trade-offs that don’t show up in energy or carbon charts.

Land use changes tend to be quiet. There’s no sudden spike in emissions or water usage that grabs headlines. But once land is repurposed, it rarely goes back.

This is why environmental researchers increasingly argue that AI’s land footprint should be evaluated alongside energy and water, not as an afterthought.

Data Centers: The Physical Backbone of Artificial Intelligence

Data centers are where large language models live, where calculations happen, and where every prompt, no matter how casual, eventually ends up. And as AI grows, data centers are changing in ways that make their environmental footprint harder to ignore.

Why AI Data Centers Are Different From Traditional Ones

Older data centers were designed mainly for storage and basic computing: email servers, websites, and databases. AI changes that equation completely.

AI-focused data centers are:

- Compute-dense, packed with high-performance GPUs

- Heat-intensive, generating far more heat per square meter

- Always active, with fewer idle periods

A single rack of AI servers can draw several times more power than a traditional server rack. Multiply that by thousands of racks, and you start to see why AI data centers feel less like digital infrastructure and more like heavy industry.

Cooling, Redundancy, and the Cost of Reliability

AI systems are expected to work instantly and continuously. That expectation shapes how data centers are built.

To avoid downtime, operators add layers of redundancy:

- Multiple power feeds

- Backup generators

- Extra cooling systems

Cooling alone can account for 30–40% of a data center’s total energy use, depending on design and climate. Backup systems, especially diesel generators, sit idle most of the time but still require maintenance, testing, and fuel storage.

Where Data Centers Are Built, and Why That Matters

They cluster where electricity is cheap, land is available, and network connections are strong. That’s why you see dense concentrations in certain regions like Northern Virginia, parts of Texas, Ireland, and Singapore.

When many data centers draw power from the same grid, local infrastructure gets strained. Utilities may build new power plants or delay the retirement of older, dirtier ones to keep up with demand.

So while a single data center might seem manageable, dozens in the same area can reshape local energy planning.

The Transparency Problem

Here’s a quiet frustration among researchers and environmental groups: we don’t see the full picture.

Most companies report high-level sustainability goals. Few release detailed, site-specific data on:

- Energy use

- Water consumption

- Emissions

- Local environmental impacts

Without that data, it’s hard to compare claims with reality or to understand which design choices actually reduce harm.

Efficiency Gains vs. Growth

To be fair, data centers are becoming more efficient.

Metrics like Power Usage Effectiveness (PUE) have improved steadily over the years. Newer facilities use less overhead energy per unit of computing than older ones.
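PUE is defined as total facility energy divided by the energy that reaches the IT equipment, so a value of 1.0 would mean zero overhead. Here is a small sketch of that calculation. The two example facilities and their energy figures are hypothetical.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def overhead_share(pue_value: float) -> float:
    """Fraction of total facility energy spent on overhead (cooling, power losses, etc.)."""
    return 1 - 1 / pue_value


if __name__ == "__main__":
    # ASSUMPTION: hypothetical facilities, both delivering 1,000 kWh to IT equipment.
    for name, total_kwh in (("older facility", 2_000), ("newer facility", 1_200)):
        value = pue(total_kwh, 1_000)
        print(f"{name}: PUE {value:.2f}, overhead {overhead_share(value):.0%} of total energy")
```

Note what PUE leaves out: it says nothing about how much computing is being done or where the electricity comes from. A facility can have an excellent PUE and still draw an enormous amount of power.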

But efficiency gains are being outpaced by demand growth. As AI spreads into search, productivity tools, entertainment, and devices, total computing rises faster than per-unit efficiency improves.

So even “greener” data centers can still lead to a larger overall footprint.
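The tug-of-war between efficiency and growth can be sketched as two compounding rates. In the example below, the yearly growth and efficiency percentages are hypothetical values chosen for illustration, not forecasts.

```python
def total_energy_index(years: int, demand_growth: float, efficiency_gain: float) -> float:
    """Relative total energy use after `years`, starting from 1.0.

    demand_growth:   yearly growth in computing demand (0.30 means +30% per year)
    efficiency_gain: yearly cut in energy per unit of computing (0.15 means -15% per year)
    """
    compute = (1 + demand_growth) ** years
    energy_per_unit = (1 - efficiency_gain) ** years
    return compute * energy_per_unit


if __name__ == "__main__":
    # ASSUMPTION: hypothetical rates, chosen only to show both outcomes.
    fast = total_energy_index(5, demand_growth=0.30, efficiency_gain=0.15)
    slow = total_energy_index(5, demand_growth=0.10, efficiency_gain=0.15)
    print(f"demand +30%/yr, efficiency -15%/yr: {fast:.2f}x energy after 5 years")
    print(f"demand +10%/yr, efficiency -15%/yr: {slow:.2f}x energy after 5 years")
```

Total energy only falls when the yearly efficiency gain outweighs the yearly demand growth. When demand compounds faster, the footprint grows even though every unit of computing is cheaper than before.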

Hardware & Manufacturing: The Impact Before AI Even Runs

Chips were designed. Minerals were mined. Factories ran day and night. Ships crossed oceans. Power was burned long before any server was switched on. Semiconductor production is already recognized as one of the most resource-intensive manufacturing processes, according to the World Economic Forum.

This upstream impact is easy to forget because it’s out of sight. But environmentally, it matters just as much as what happens inside data centers.

AI Chips and GPUs

Modern AI depends on specialized hardware, mostly GPUs and custom accelerators. These chips are incredibly powerful, but they’re also incredibly resource-intensive to make.

Manufacturing advanced semiconductors requires:

- Large amounts of electricity

- Ultra-pure water

- Complex chemical processes

Some estimates suggest that producing a single high-end chip can require thousands of liters of water and significant energy input. Multiply that by the millions of chips needed for global AI infrastructure, and the footprint adds up fast.

Mining the Materials Behind AI

AI hardware relies on materials that don’t come without cost:

- Copper for wiring

- Lithium for batteries

- Rare earth elements for components and magnets

Mining these materials can lead to:

- Land degradation

- Water pollution

- High carbon emissions from extraction and transport

These impacts often fall on regions far removed from the shiny data centers that eventually use the hardware. The environmental cost is outsourced geographically and politically.

Short Lifecycles, Long-Term Waste

Here’s another uncomfortable truth: AI hardware ages quickly.

As models grow larger and more demanding, older chips become inefficient or obsolete. So companies replace servers every few years to stay competitive.

That creates a growing stream of electronic waste.

E-waste is difficult to recycle safely. Some components contain hazardous materials. Others are technically recyclable but rarely processed properly due to cost or lack of infrastructure.

Globally, only a fraction of electronic waste is formally recycled. The rest often ends up in landfills or informal recycling operations, where environmental and health risks are high.

Efficiency vs. Consumption

There’s a pattern here.

AI hardware becomes more efficient with each generation. New chips can do more work per watt. That’s real progress.

But at the same time, demand grows even faster. More models. Bigger models. More applications.

So total resource use keeps rising, even as efficiency improves. It’s the same rebound effect we saw with energy, just earlier in the lifecycle.

Why Hardware Manufacturing Matters So Much

Hardware manufacturing is easy to ignore because it doesn’t happen where AI is used. But environmentally, it’s one of the most impactful stages.

Once land is mined, water is polluted, or emissions are released during manufacturing, those effects can’t be undone by cleaner electricity later.

This is why researchers argue that AI’s true environmental impact begins years before deployment, and that’s why focusing only on operational emissions tells an incomplete story.

Is AI Worse Than Other Technologies?

Here’s a question that comes up a lot, usually halfway through conversations like this:

Is AI really worse than everything else we already use? Or are we just noticing it more now?

Honestly, the answer sits somewhere in the middle.

AI vs. Traditional Cloud Computing

At first glance, AI looks like just another cloud workload. After all, cloud services have been around for years. Email, streaming, and online storage all rely on data centers too.

The difference is intensity.

Traditional cloud tasks often involve:

- Storing data

- Moving data

- Serving cached content

AI tasks, especially generative ones, involve constant computation. Every response is freshly calculated. There’s no shortcut.

So while cloud computing uses a lot of energy overall, AI pushes more power through the system per action.

AI vs. Streaming and Social Media

Streaming video at scale uses enormous bandwidth and electricity. No question there. But once a video is encoded and cached, serving it again is relatively efficient.

AI doesn’t benefit from caching in the same way. A new prompt means new math. Even if two people ask similar questions, the system still has to process each one.

That makes AI more like a live service than a broadcast one, and live services always cost more to run.

AI vs. Cryptocurrency

This comparison comes up often, and for good reason.

Cryptocurrency mining, especially proof-of-work systems, became infamous for energy use because the computation itself served no purpose beyond securing the network.

AI is different. Its computation does real work: translation, analysis, prediction, and design. That doesn’t erase the environmental cost, but it changes the value equation.

In other words, AI’s footprint isn’t meaningless. The debate isn’t whether AI does something useful. It’s whether we’re using it carefully enough.

So What Makes AI Feel Different?

Two things stand out:

- Speed of adoption: AI went from niche to mainstream incredibly fast. Infrastructure didn’t have time to adjust gradually.

- Depth of integration: AI isn’t one app. It’s becoming a layer across many tools: search, work software, customer support, and creative tools.

That combination makes AI’s environmental impact feel sudden and expansive, even if similar technologies have caused harm in quieter ways before. Usage data from studies of how millions of people really use AI shows why this expansion feels faster than earlier tech waves.

So no, AI isn’t uniquely destructive. But it is uniquely fast-growing, and that makes its footprint harder to manage.

Can AI Actually Help the Environment?

AI hasn’t sounded very eco-friendly so far, but the picture is more complicated than that. Artificial intelligence is good at spotting patterns in data that humans struggle with, which makes it useful for balancing energy grids with renewables, improving climate models, boosting industrial efficiency, and optimizing logistics to reduce fuel use.

The catch is the rebound effect: when things become cheaper or easier, we tend to use them more, so total impact can still rise even as efficiency improves. That’s why purpose matters: using AI to optimize power systems is very different from using it to generate endless content, even if the infrastructure looks similar.

Final Thoughts – Environmental impact of AI

AI’s environmental impact isn’t a single problem with a single fix. It’s shaped by design choices, business incentives, infrastructure limits, and how people use the technology. What needs to change is clearer transparency from companies about real energy, water, and emissions data, smarter choices about where and how data centers are built, longer and more responsible hardware lifecycles, and greater awareness from users that convenience comes with a cost.

At the same time, AI can reduce emissions and improve efficiency when used deliberately. The truth sits in that tension: AI is neither villain nor savior, but a powerful tool tied to physical limits, and its impact will depend on whether we choose to measure it honestly, design it carefully, and use it with restraint where restraint actually matters.

Jigar Chaudhary is the Editor-in-Chief at UrviumAI, where he oversees coverage of artificial intelligence news, tools, and in-depth studies. With over 5 years of experience analyzing AI and robotics, he focuses on maintaining high editorial standards, accurate reporting, and clear explanations to help readers understand how AI is shaping the future.