OpenAI Launches GPT-5.3-Codex-Spark on Cerebras Chips ⚡

OpenAI just cheated on Nvidia. In a major hardware milestone, the company has released GPT-5.3-Codex-Spark, a new coding model that runs not on Nvidia GPUs but on Cerebras wafer-scale chips.

Here are the specs of the speed demon:

- Blazing Speed: Spark generates over 1,000 tokens per second, allowing for near-instant coding completions. It is designed for interactive “tab-complete” tasks rather than long-form reasoning.

- The Trade-off: While faster, Spark trails the full Codex model on complex benchmarks like SWE-Bench. It is a specialist tool for speed, not a generalist for deep thought.

- Hardware Shift: This is OpenAI’s first commercial product powered by non-Nvidia hardware, validating its multi-billion-dollar deals with Cerebras, AMD, and Broadcom.

- Availability: The model is rolling out as a research preview to ChatGPT Pro subscribers and select enterprise partners.

Why it matters: This is the beginning of the “Hardware Wars.” By proving it can deploy a production model on alternative chips, OpenAI is signaling to Nvidia that it has options. For developers, it means the lag between “thinking” and “coding” has all but disappeared.

UrviumAI Take: 1,000 tokens/second changes the UX of coding. If you use this model, treat it like a spellchecker, not a contractor. It’s fast enough to fix your syntax as you type, creating a flow state that slower models break.

Google’s “Deep Think” Crushes Reasoning Benchmarks 🧠

Google just reminded everyone who invented the Transformer. While OpenAI and Anthropic battle over coding, Google has quietly released a massive update to Gemini 3 Deep Think, reclaiming the crown for pure reasoning capabilities.

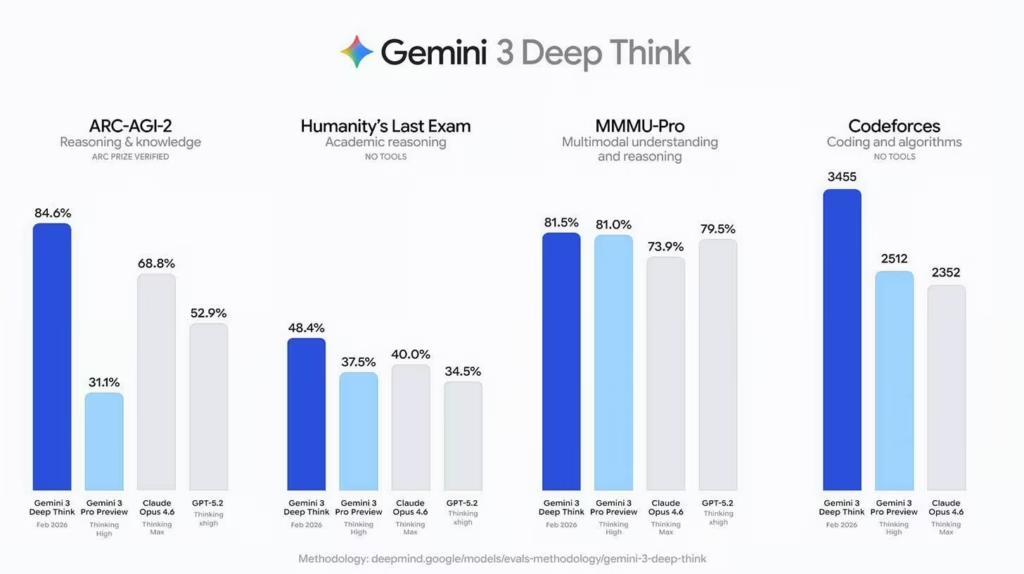

Here is the scoreboard:

- Benchmark Domination: Deep Think scored 84.6% on ARC-AGI-2, completely obliterating Claude Opus 4.6 (68.8%) and GPT-5.2 (52.9%). It also set a new record on the “Humanity’s Last Exam” benchmark.

- Science and Coding Wizardry: The model reached gold-medal-level performance on the 2025 Physics and Chemistry Olympiads and posted a Codeforces Elo of 3,455.

- Aletheia: Google also unveiled Aletheia, a specialized math agent powered by the new model that can autonomously solve open research problems and verify proofs.

- Access: The update is live now for Google AI Ultra subscribers.

Why it matters: Google is proving that “reasoning” is its moat. While others focus on speed or creative writing, Deep Think is pushing the boundaries of what AI can solve. For scientists and mathematicians, this is the new state-of-the-art.

UrviumAI Take: The ARC-AGI-2 score is the shocker. If you work in complex logic or scientific research, switch your default model to Gemini Deep Think immediately. A lead of nearly 16 points over Claude Opus 4.6 (84.6% vs. 68.8%) suggests it “understands” novel patterns better than any other publicly benchmarked model right now.

Elon Musk Says xAI Exits Were “Forced” 🚪

Elon Musk says he didn’t lose his team; he reorganized it. Addressing the sudden exodus of more than 10 engineers and co-founders from xAI, Musk has said the departures were part of an involuntary restructuring designed to speed up the company.

Here is Musk’s explanation for the shakeup:

- Push, Not Pull: Musk stated on X that “xAI was reorganized… to improve speed of execution,” implying that co-founders like Tony Wu and Jimmy Ba were let go rather than quitting on their own.

- Scaling Pains: He argued that people suited for the early stages of a startup are often “less suited for the later stages,” comparing the company to a living organism that must evolve.

- The Narrative: This contradicts the polite resignation tweets from departing staff, who hinted at starting new ventures. Musk’s comments frame the loss of talent as a necessary trimming of “dead weight” to prepare for xAI’s massive scaling phase.

- Controversy: The exits come amid delays to Grok updates and regulatory scrutiny over deepfakes, raising questions about internal stability despite the aggressive spin.

Why it matters: Musk is rewriting the narrative of “brain drain.” By claiming the firings were strategic, he is trying to project strength to investors just after the $1.25T SpaceX merger. However, losing half your founding team in a week is risky, no matter how you spin it.

UrviumAI Take: This is the “Tesla management style” applied to AI research. Expect xAI to become less academic and more product-focused. Musk is clearing out the pure researchers to make room for engineers who will ship products (and moon bases) at breakneck speed.


Jigar Chaudhary is the Editor-in-Chief at UrviumAI, where he oversees coverage of artificial intelligence news, tools, and in-depth studies. With over 5 years of experience analyzing AI and robotics, he focuses on maintaining high editorial standards, accurate reporting, and clear explanations to help readers understand how AI is shaping the future.
