OpenAI’s ‘Code Red’ After Google Advances 🚨

We haven’t seen this since 2022! OpenAI CEO Sam Altman just told staff that the company is officially in a “code red” emergency to quickly upgrade ChatGPT. Why the panic? Google’s recent breakthroughs with Gemini 3 and Nano Banana Pro are proving to be a genuine threat.

Here’s what this emergency push means:

- Critical Time: Altman’s memo stated it is “a critical time for ChatGPT” and demanded quick improvements to the consumer experience, including personalization and image generation.

- Fast-Tracked Models: They are fast-tracking a new reasoning model, Shallotpeat (reportedly launching next week and already beating Gemini 3 internally), and a larger model codenamed “Garlic,” targeting an early 2026 release as a potential GPT-5.2 or 5.5.

- The Focus: The “Code Red” means OpenAI is hitting the brakes on lucrative initiatives like advertising and AI agent development to focus all resources on improving the core ChatGPT user experience.

- The Problem: Google issued its own “code red” when ChatGPT launched three years ago. Now the tables have turned. While OpenAI still has a huge user base, its model lead is now seriously threatened by both Google and Anthropic.

Why it matters: This internal emergency shows how competitive the AI race has become. When a market leader has to delay major revenue streams (like agents and ads) to play defense, you know the rival’s product is truly disruptive. The battle for the top consumer AI spot is far from over!

UrviumAI Take: A “Code Red” focused on the consumer experience (personalization, image generation) suggests OpenAI is trying to fend off user migration. Pay close attention to the smaller, fast-tracked Shallotpeat model release next week. If it truly beats Gemini 3 on reasoning, the market’s “vibes” will shift back to OpenAI very quickly.

Amazon Drops AI Agents, Models, Chips at re:Invent 🚀

Amazon just showed up to the AI party with an entire ecosystem! At its annual re:Invent conference, AWS (Amazon Web Services) launched a huge wave of products that cover the entire AI stack—from the chips that train the models to the agents that use them.

Here are the key announcements:

- New Models (Nova 2 Family): They released four new models: Nova 2 Lite (for everyday tasks), Nova 2 Pro (for reasoning), Nova 2 Sonic (for voice), and Nova 2 Omni (multimodal). Amazon claims they offer industry-leading cost-effectiveness.

- Custom Model Building (Nova Forge): This new service lets companies mix their proprietary data with Amazon’s training data to create custom “Novella” models specifically tuned for their business.

- Agent Tools (Nova Act): Nova Act is the new platform for building and managing web-based AI agents. They also released three “frontier agents”—Kiro (a virtual developer), a Security Agent, and a DevOps Agent—that can work autonomously for days.

- Hardware (Trainium 3): Amazon unveiled the Trainium 3 AI chip, which delivers huge leaps in compute and efficiency for faster, cheaper training of generative AI models.

Why it matters: Amazon has often been seen as trailing in core model intelligence, but these re:Invent releases change that narrative. AWS is pushing hard to compete on the full stack, offering the chips, the base models, the custom training tools, and the final autonomous agents all within its trusted enterprise cloud ecosystem.

UrviumAI Take: Amazon’s focus on the full stack is a smart enterprise strategy, especially with the “frontier agents” (Kiro, Security, DevOps). Nova Forge is the release worth researching further. The ability for companies to build “Novella” variants by blending their data with Amazon’s is a game-changer for businesses that need highly specialized, accurate AI but lack massive training budgets.

Mistral’s Open-Source Models Built to Run Anywhere 🇫🇷

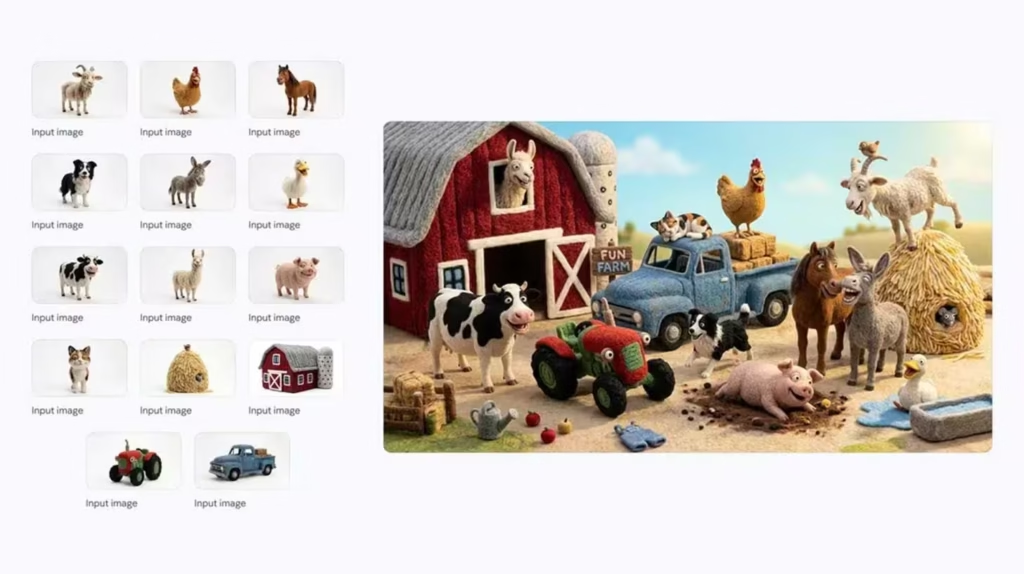

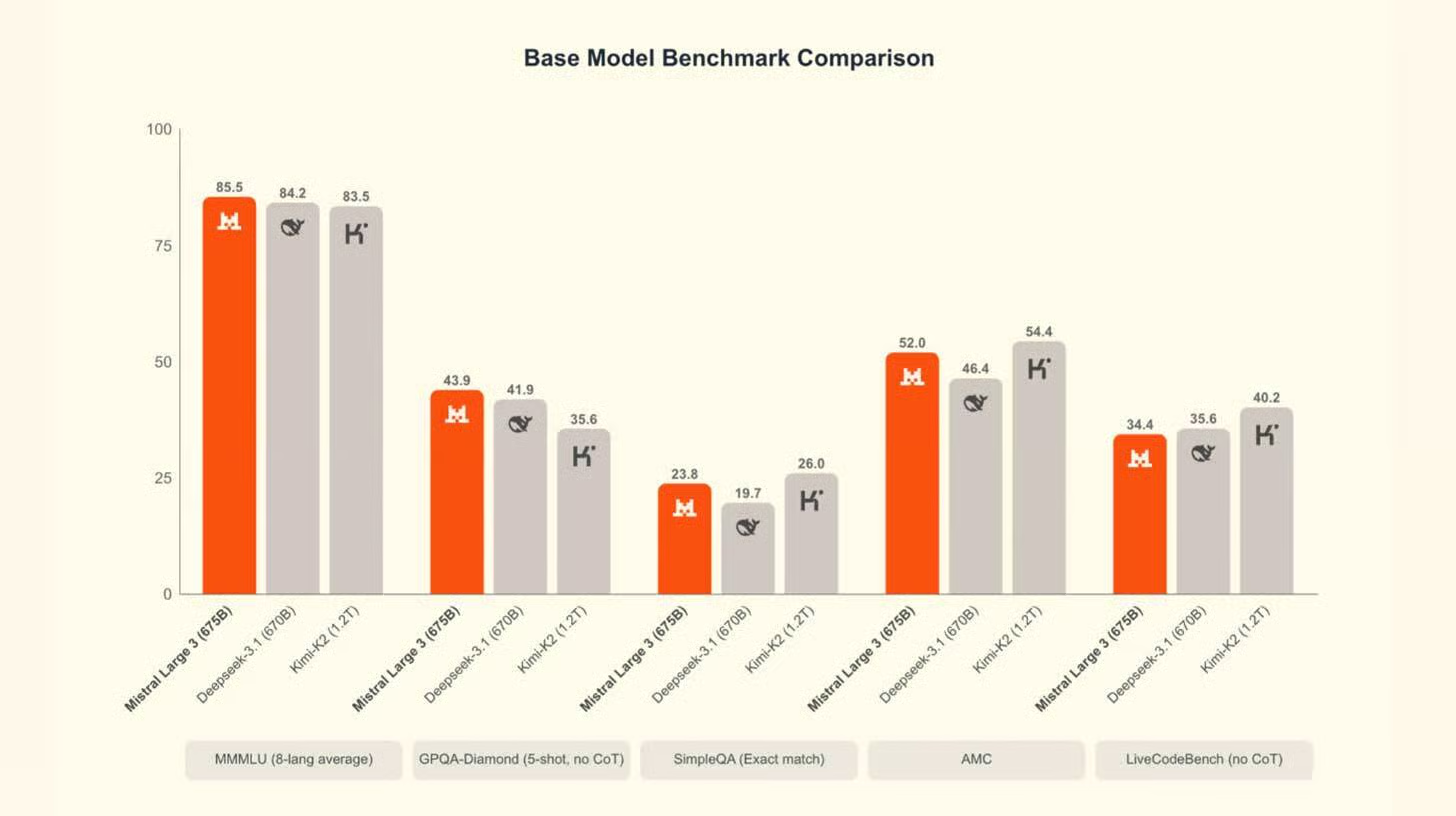

The French AI leader is making a huge push for open access! French startup Mistral just launched its Mistral 3 family of models, including the flagship Large 3 and nine smaller variants. The goal is simple: put powerful, open-source AI into the hands of everyone, no matter what hardware they have.

Here’s the new lineup:

- Flagship Power: Large 3 is Mistral’s top model, competitive with rivals like Qwen3, featuring strong multimodal (text and image) and multilingual capabilities.

- The Ministral Lineup: This includes smaller models (3B, 8B, 14B) in base, instruct, and reasoning variants. These models are designed specifically to run on consumer hardware, like laptops and phones, without needing a constant internet connection.

- Open for Business: All models are released under the generous Apache 2.0 license, which allows for almost any commercial or non-commercial use.

Why it matters: Mistral continues to be the biggest champion for open-source AI outside of the U.S. While the flagship Large 3 is powerful, the real market game-changer is the Ministral series. By making high-quality, multimodal AI models small enough to run on drones, robots, and personal laptops, Mistral is democratizing advanced AI for a massive number of specialized applications.

UrviumAI Take: The focus on running models on “laptops and drones” is exciting. Research the difference in latency (response time) between running a 3B parameter model locally on a consumer laptop and making an API call to a large cloud model. The comparison should show how much of a speed advantage Mistral is offering for real-time edge applications.
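If you want to try that latency comparison yourself, here is a minimal Python sketch of a timing harness. Everything in it is an assumption for illustration: `measure_latency`, `fake_local_model`, and `fake_cloud_api` are hypothetical names, and the two stand-in functions only sleep for a fixed time. They are not real Mistral or cloud calls, so the numbers they produce mean nothing until you swap in an actual local inference call and an actual API request.

```python
import statistics
import time
from typing import Callable, Dict


def measure_latency(call: Callable[[], object], runs: int = 5) -> Dict[str, float]:
    """Time `call` several times and return latency statistics in milliseconds."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        samples.append((time.perf_counter() - start) * 1000.0)
    return {
        "median_ms": statistics.median(samples),
        "min_ms": min(samples),
        "max_ms": max(samples),
    }


# Hypothetical stand-ins: replace these with a real local model call
# and a real cloud API request before drawing any conclusions.
def fake_local_model() -> str:
    time.sleep(0.005)  # placeholder delay, not a real measurement
    return "local reply"


def fake_cloud_api() -> str:
    time.sleep(0.020)  # placeholder delay, not a real measurement
    return "cloud reply"


if __name__ == "__main__":
    for name, fn in [("local", fake_local_model), ("cloud", fake_cloud_api)]:
        stats = measure_latency(fn, runs=5)
        print(f"{name}: median {stats['median_ms']:.1f} ms")
```

Use the same prompt and output length for both calls, and run each several times. Real results will depend heavily on your hardware, network, and model size.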


Jigar Chaudhary is the Editor-in-Chief at UrviumAI, where he oversees coverage of artificial intelligence news, tools, and in-depth studies. With over 5 years of experience analyzing AI and robotics, he focuses on maintaining high editorial standards, accurate reporting, and clear explanations to help readers understand how AI is shaping the future.