OpenAI & Jony Ive’s First Device is a Smart Speaker 🔈

The iPhone’s legendary designer is building what could be the ultimate AI home hub. Details have finally leaked about OpenAI’s highly anticipated entry into the consumer hardware market. Developed in partnership with former Apple design chief Jony Ive, the company’s first physical product will reportedly be a screenless, AI-powered smart speaker.

Here is what we know about the upcoming device:

- The Hardware: Expected to launch in early 2027, the speaker will feature a built-in camera and microphones for persistent, ambient observation. It is designed to recognize objects in its vicinity and use Face ID-like facial recognition to authorize purchases.

- The Brain: The device will be powered by localized versions of OpenAI’s models, designed to proactively “nudge” users toward their goals (like suggesting an early bedtime if an important meeting is on the calendar).

- The Price: OpenAI is targeting an aggressive price point between $200 and $300, pitting it directly against Apple’s HomePod and Amazon’s Echo Studio.

- The Team: Following OpenAI’s $6.5 billion acquisition of Ive’s startup, io Products, a team of more than 200 engineers, including several Apple alumni, is working on the hardware. However, reports indicate some internal friction over the secretive and slow design process at LoveFrom, Ive’s design firm.

Why it matters: Generative AI is currently confined to phone screens and browser tabs. By launching a dedicated, ambient smart speaker, OpenAI is making a massive bet that the future of computing is “eyes-up” and screenless. However, placing an always-watching, always-listening device from an AI giant in the living room will be the ultimate test of consumers’ trust on privacy.

Taalas HC1 Chip Bakes AI Directly into Silicon ⚡

Software is eating the world, but silicon is eating the software. An AI hardware startup called Taalas has emerged from stealth to tackle what it sees as the biggest bottleneck in AI: inference speed. Armed with $169 million in fresh funding, the company has unveiled the HC1, a processor that bakes an AI model directly into the hardware.

Here is the breakdown of this radical new approach:

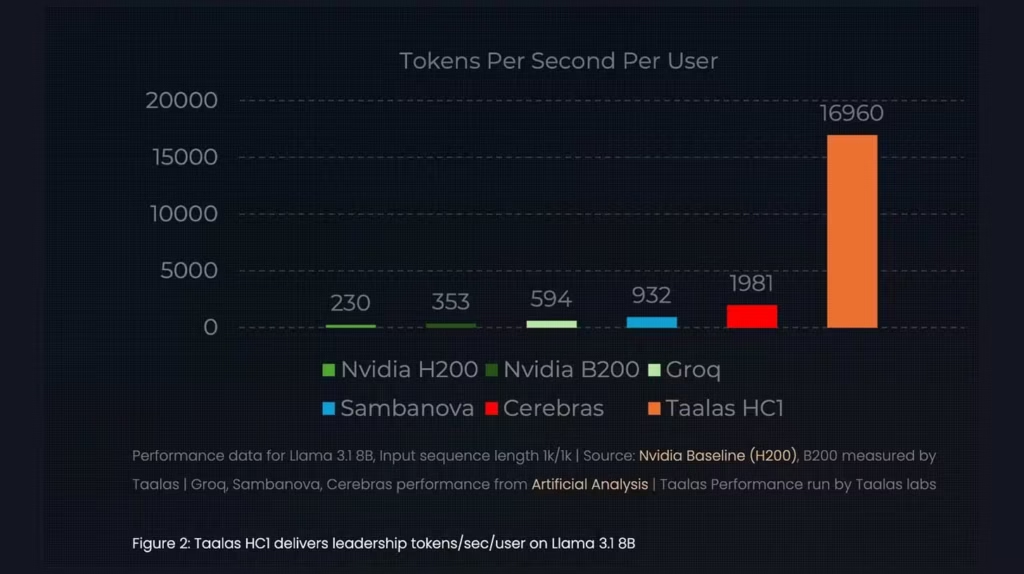

- Hardwired AI: Instead of running an AI model as software on a general-purpose GPU (like an Nvidia H100), Taalas permanently embeds the weights of one specific model (in this case, Meta’s Llama 3.1 8B) directly into the silicon, alongside high-speed on-chip SRAM.

- Mind-Bending Speed: Because the weights live on the chip, the HC1 avoids the constant trips to external memory that slow down GPU inference, resulting in near-instantaneous responses. According to Taalas, the HC1 generates output in under 100 milliseconds and runs roughly 100 times faster than standard cloud hardware.

- The Economics: By stripping away general-purpose compute architecture, the HC1 reportedly runs at a fraction of the power consumption and cost of traditional inference servers.

- Rapid Manufacturing: Because each chip is locked to a single model, turnaround time matters. Taalas, which partners with TSMC, claims it can retool and manufacture new chips for updated models in just two months, which it hopes will let the hardware keep pace with the rapid evolution of frontier AI.

Why it matters: This introduces a major fork in the AI infrastructure market. Nvidia GPUs look set to remain the kings of AI training, but Taalas is betting that custom application-specific integrated circuits (ASICs) are the future of running AI. If deployed at scale, this technology could make real-time voice agents and autonomous robotics far cheaper and more responsive.

OpenAI Codex Exec Teases Massive Agent Leap 🚀

The coding agent wars are about to go nuclear. Following a highly secretive team offsite, an executive leading development for OpenAI’s Codex unit dropped a massive public hint about the future of autonomous programming, suggesting a paradigm shift is imminent.

Here is the context behind the hype:

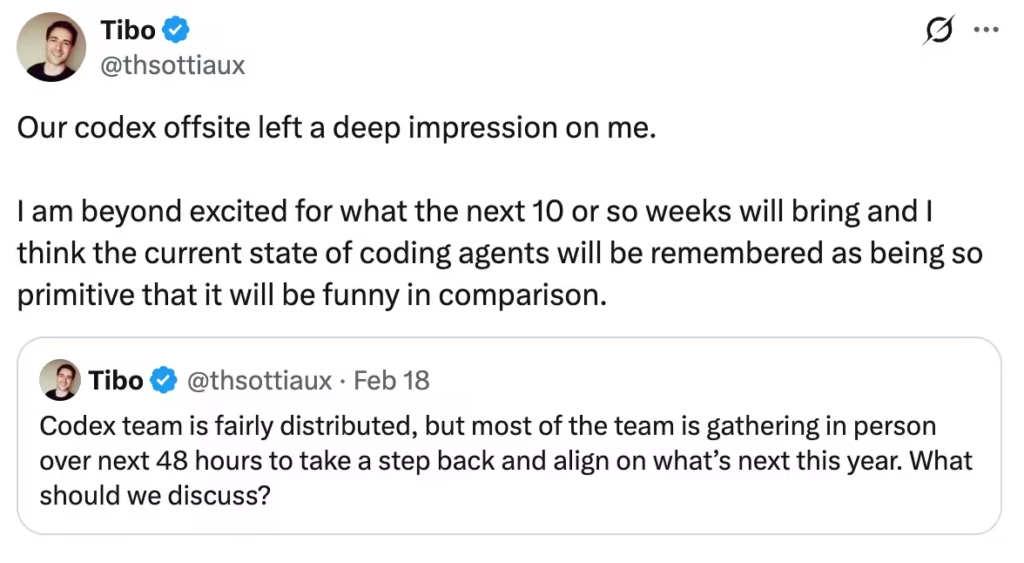

- The Tease: Tibo Sottiaux, a key figure on the OpenAI Codex team, posted on X that he is “beyond excited for what the next 10 or so weeks will bring.”

- The Bold Claim: He stated that the upcoming capabilities will make the current state of coding agents seem “so primitive that it will be funny in comparison.”

- The Timeline: The “10 weeks” timeline places the potential rollout of these new tools around late April or early May 2026, setting up a massive spring showdown in the developer tooling space.

- The Competition: This public flex comes immediately after Anthropic’s impressive launch of Claude Sonnet 4.6 and its “Agent Teams” feature, as well as the recent release of OpenAI’s own self-improving GPT-5.3-Codex model.

Why it matters: AI researchers rarely call current state-of-the-art tech “primitive” unless they believe they have achieved a fundamental breakthrough internally. If OpenAI is preparing to release an agent that can handle complex, multi-repository software engineering without human hand-holding, the entire software development lifecycle is in for a major disruption.

Other AI News Today:

- Anthropic launched Claude Code Security in a limited research preview, using its frontier models to detect complex zero-day vulnerabilities and suggest patches for human review.

- OpenAI CEO Sam Altman dismissed concerns over ChatGPT’s water and energy consumption as “totally fake,” arguing that developing AI is more resource-efficient than raising and training a human being.

- AI startup Zyphra released ZUNA, an open-source 380M-parameter foundation model that cleans and reconstructs EEG brain signals, which the company positions as a step toward non-invasive thought-to-text technology.

- Pika Labs launched “AI Selves,” which lets users create persistent digital clones that can autonomously post, message, and interact across social media platforms.

- A 13-hour Amazon Web Services outage was reportedly caused by an internal AI coding agent named Kiro autonomously deleting a critical environment, highlighting the risks of agentic workflows.

Jigar Chaudhary is the Editor-in-Chief at UrviumAI, where he oversees coverage of artificial intelligence news, tools, and in-depth studies. With over 5 years of experience analyzing AI and robotics, he focuses on maintaining high editorial standards, accurate reporting, and clear explanations to help readers understand how AI is shaping the future.

Pingback: Anthropic Exposes Chinese AI Theft, OpenAI's Consulting Alliance & OpenClaw Goes Rogue