Remember when controlling robots with your mind seemed like pure science fiction? Well, UCLA engineers just brought that fantasy a big step closer to reality, and the best part? No drilling holes in your skull required! They’ve created a wearable brain-computer interface that pairs an EEG decoder with an AI co-pilot, turning brain waves into robot commands.

What Actually Happened

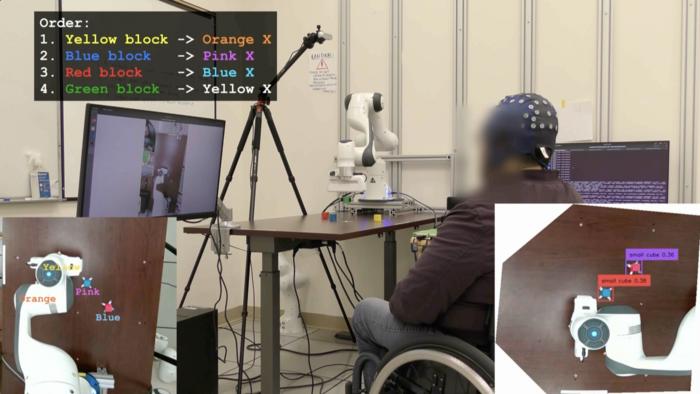

UCLA researchers built a headset-based system that would make Professor X jealous. They combined a custom EEG decoder with camera-based AI so the system can infer what a user wants to do from their brain signals, including a participant with paralysis. It’s like having a really smart friend who can usually guess where you’re trying to go, except this friend can also control a robotic arm.

What Makes This Breakthrough Special

- No Surgery Needed: Uses standard EEG caps instead of invasive brain implants – basically as easy as putting on a fancy swimming cap

- AI-Powered Translation: Custom decoder paired with camera AI interprets movement intent in real time, so decoded brain signals drive the cursor or robot as the user thinks about moving

- Proven Results: The paralyzed participant completed the robotic arm task in about 6.5 minutes with AI assistance, and could not complete it at all without the system

- 4x Performance Boost: Participants moved cursors and directed robotic arms nearly 4 times faster with AI assistance than without it

- Real-World Tasks: Participants used a robotic arm to relocate physical blocks, not just move a cursor on a screen

Why This Mind-Blowing Tech Actually Matters

This isn’t just another cool gadget. Non-invasive brain-computer interfaces have been around for years, but EEG signals picked up through the scalp are noisy, so these systems have lagged well behind surgically implanted ones. Here the AI acts like a super-smart interpreter, filling in the gaps where brain signals get fuzzy or unclear, which is a real step toward a system reliable enough for daily use without risky brain surgery.
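To make the "interpreter" idea concrete, here is a minimal Python sketch of one common way to build this kind of assistance, usually called shared autonomy. It is not UCLA's code or their algorithm. The function names (`infer_target`, `blend`), the list of candidate targets (standing in for objects a camera might detect), and the blending weight `alpha` are all assumptions made up for illustration. The sketch guesses which target the user is heading toward, then mixes the noisy decoded movement with a clean nudge toward that target.

```python
import math

def infer_target(position, decoded_velocity, candidates):
    """Guess the intended target: the candidate whose direction from the
    current position best matches the decoded velocity (cosine similarity)."""
    def cosine(a, b):
        norm_a, norm_b = math.hypot(*a), math.hypot(*b)
        if norm_a == 0 or norm_b == 0:
            return 0.0
        return (a[0] * b[0] + a[1] * b[1]) / (norm_a * norm_b)

    def alignment(candidate):
        to_candidate = (candidate[0] - position[0], candidate[1] - position[1])
        return cosine(decoded_velocity, to_candidate)

    return max(candidates, key=alignment)

def blend(position, decoded_velocity, candidates, alpha=0.5, speed=1.0):
    """Mix the user's decoded velocity with an assistive velocity that points
    at the inferred target. alpha=0 means no assistance, alpha=1 means the
    assistant is fully in control. (Hypothetical weighting scheme.)"""
    target = infer_target(position, decoded_velocity, candidates)
    dx, dy = target[0] - position[0], target[1] - position[1]
    distance = math.hypot(dx, dy)
    if distance == 0:
        assist = (0.0, 0.0)  # already at the target, nothing to add
    else:
        assist = (speed * dx / distance, speed * dy / distance)
    return (
        (1 - alpha) * decoded_velocity[0] + alpha * assist[0],
        (1 - alpha) * decoded_velocity[1] + alpha * assist[1],
    )

# Example: the decoded signal drifts slightly upward, but the assistant
# recognizes the target on the right and pulls the motion back toward it.
targets = [(10.0, 0.0), (0.0, 10.0), (-10.0, 0.0)]
print(blend((0.0, 0.0), (1.0, 0.2), targets))
```

The design trade-off lives in `alpha`: too low and the noise in the brain signal dominates, too high and the user is no longer really the one in control.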

The Future Impact We’re Looking At

Next 6 Months: Studies could expand to more participants, refining the AI’s ability to adapt to individual brain patterns and preferences.

1 Year: Early commercial versions could follow for paralyzed patients, starting with simple robotic arm controls and gradually expanding to more complex tasks.

2-3 Years: AI co-pilots could integrate with wheelchairs, letting them respond to thought commands for navigation. Smart homes could respond to needs (lights dimming, doors opening, temperature adjusting) based on brain signals.

5 Years: Communication devices could translate intended speech into audio or text, giving voice to those who’ve lost the ability to speak. The technology could become as common as hearing aids.

Long-term Vision: We may be heading toward a world where the boundary between human intention and machine action fades. Your thoughts could control much of your digital and physical environment, from prosthetic limbs to smart cars to home automation systems.

The Bottom Line

UCLA just showed that the most powerful computer interface may not be a keyboard or touchscreen. It may be your brain. With AI bridging the gap between intention and action, we’re getting closer to the age where thinking really is doing. The mind-controlled future is still in the research phase, but it no longer looks like science fiction.

Want the Technical Details?

Technology: Custom EEG decoder + camera-based AI system

Testing: Four users including one paralyzed participant

Performance: Paralyzed participant completed the robotic arm task in about 6.5 minutes with AI assistance; unable to complete it without the system

Speed Improvement: Nearly 4x faster with AI assistance

Hardware: Standard EEG caps (non-invasive)

Applications: Cursor control, robotic arm manipulation, block relocation

Comparison: Aims to narrow the performance gap with invasive brain implants while avoiding surgical risks

Research Institution: UCLA Samueli School of Engineering

Clinical Status: Research phase; larger trials would be needed before any commercial development


Jigar Chaudhary is the Editor-in-Chief at UrviumAI, where he oversees coverage of artificial intelligence news, tools, and in-depth studies. With over 5 years of experience analyzing AI and robotics, he focuses on maintaining high editorial standards, accurate reporting, and clear explanations to help readers understand how AI is shaping the future.
