Ilya Sutskever Says AI’s ‘Age of Scaling’ Is Ending 🧠

When Ilya Sutskever talks, the AI world listens – and he just dropped a bombshell! The co-founder of OpenAI and founder of SSI (Safe Superintelligence) appeared on the Dwarkesh Podcast to argue that the “age of scaling” (roughly 2020-2025) is officially over. He believes throwing more compute and data at current AI architectures won’t lead to true breakthroughs anymore.

Here’s the breakdown of his critique:

- The Shift: Sutskever says we’ve reached a ceiling where research and new ideas – not brute-force scaling – will become the only things that drive meaningful progress.

- The ASI Forecast: He forecasts that AI with superhuman learning ability (ASI) could emerge within the next 5 to 20 years, but only if foundational research breakthroughs happen first.

- SSI’s Bet: His secretive startup, SSI, is betting big on this idea, calling itself an “age of research” company taking a “different technical approach” to superintelligence.

- Big Money Moves: He confirmed SSI is raising at a whopping $32 billion valuation and even declined an acquisition offer from Meta – a strong signal of his commitment to a research-first approach.

Why it matters: This is a powerful statement against the hundreds of billions currently being poured into building massive GPU clusters by almost every major tech company. Sutskever’s opinion carries immense weight, and his move suggests a potential split in the industry: labs focused on scaling vs. labs focused on discovery.

UrviumAI Take: Sutskever’s timing is awkward since everyone is buying chips. My suggestion to you: research the difference between “scaling laws” (the old era) and “sample-efficient learning” (the new research goal). Understanding this shift is key to knowing what the next generation of AI will look like.

Anthropic: AI Could Double U.S. Productivity Growth 👨🏻‍💻

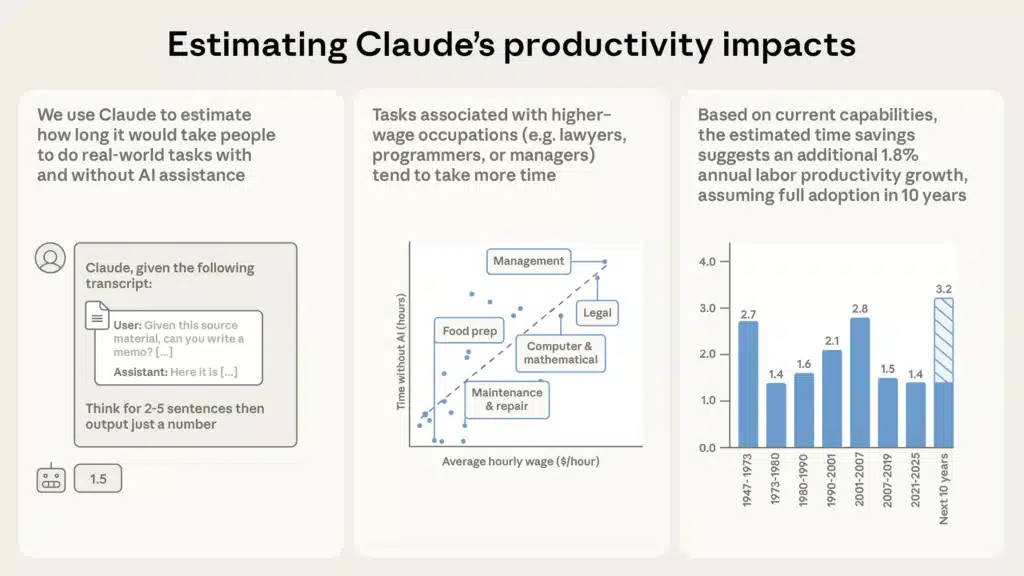

Good news for the economy, or maybe bad news for job security. Anthropic just published a huge piece of research analyzing 100,000 conversations with their Claude model to figure out exactly how much time AI is saving people right now. And their conclusion? AI could double the annual U.S. labor productivity growth rate!

Here’s what the data showed:

- Massive Time Cuts: Researchers found that for the average work task (which usually took about 90 minutes), using Claude cut the task completion time by roughly 80%. That’s a huge efficiency gain.

- Economic Impact: Extrapolating those real-world time savings across the U.S. economy, Anthropic estimates that widespread AI adoption could boost annual labor productivity growth by 1.8%, doubling the growth rate seen since 2019.

- Biggest Winners: Software developers account for the largest share of the estimated gains (19%), followed by operations managers, marketing specialists, and customer service staff.

- Task Examples: Complex tasks saw the biggest time savings: curriculum development (96% time saved), research assistance (91%), and executive admin functions (87%).
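
To put those percentages in more familiar terms, here is a minimal Python sketch that converts a “time saved” share into the time that remains and the implied speedup. The function names are illustrative and not from Anthropic’s study; the inputs are simply the figures quoted above.

```python
def remaining_minutes(baseline_minutes: float, fraction_saved: float) -> float:
    """Time a task takes once the given fraction of it has been saved."""
    return baseline_minutes * (1.0 - fraction_saved)


def speedup_factor(fraction_saved: float) -> float:
    """How many times faster a task gets (e.g. 0.80 saved -> about 5x)."""
    if not 0.0 <= fraction_saved < 1.0:
        raise ValueError("fraction_saved must be in [0, 1)")
    return 1.0 / (1.0 - fraction_saved)


# Figures quoted in the article: a 90-minute task with 80% of the time saved.
print(round(remaining_minutes(90, 0.80), 1))  # 18.0
print(round(speedup_factor(0.80), 1))         # 5.0

# The three task examples from the list above.
for task, saved in [("curriculum development", 0.96),
                    ("research assistance", 0.91),
                    ("executive admin", 0.87)]:
    print(f"{task}: about {speedup_factor(saved):.1f}x faster")
```

In other words, an 80% cut turns a 90-minute task into roughly 18 minutes, and the gap between 87% and 96% is bigger than it looks: about 8x faster versus about 25x faster.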

Why it matters: This research moves the debate from “will AI help?” to “how much, and how fast?” While the study is careful to sidestep the topic of job displacement (which Anthropic’s own CEO warns about), it offers real-world evidence that current-gen AI models are already delivering concrete, measurable efficiency gains.

UrviumAI Take: An 80% average time reduction is incredible, but it puts a spotlight on the ‘job displacement’ question. I think you should choose one of your most repetitive weekly tasks and try to complete or automate it using an AI (like Claude or Gemini) while strictly tracking your time. See if your personal efficiency gain matches Anthropic’s 80% average!
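
If you try this experiment, a few lines of Python are enough to score it. This is only a sketch: the logged times below are made-up placeholder numbers, and `percent_time_saved` is a hypothetical helper, not part of any tool mentioned here.

```python
def percent_time_saved(baseline_minutes: float, assisted_minutes: float) -> float:
    """Percentage of time saved relative to doing the task without AI."""
    if baseline_minutes <= 0:
        raise ValueError("baseline_minutes must be positive")
    return 100.0 * (baseline_minutes - assisted_minutes) / baseline_minutes


# Placeholder log: (minutes without AI, minutes with AI) for each attempt.
trials = [(60, 20), (45, 15), (30, 12)]

total_before = sum(before for before, _ in trials)
total_after = sum(after for _, after in trials)
saved = percent_time_saved(total_before, total_after)

print(f"You saved {saved:.0f}% of your time (the study's average: about 80%)")
```

Comparing totals rather than averaging per-task percentages keeps one short task from skewing the result.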

Tesla Says Its Next AI Chip Is Almost Ready 🤖

Forget Nvidia – Tesla is doubling down on custom silicon! Elon Musk’s company is nearing the final design phase for its next-generation AI chip, the AI5. This custom hardware is the powerful brain that will run all future Tesla products, from robotaxis to the Optimus humanoid robots.

Here’s the plan for AI5:

- Power Leap: The AI5 chip is set to deliver at least 5 times the compute power of Tesla’s current in-car hardware (AI4). This is essential for the huge data load of vision-only autonomous driving and complex robotics.

- Rapid Iteration: Musk says the company aims to launch one new AI chip design for mass production every year, pushing development faster than rivals.

- Dual Manufacturing: To secure massive volume and mitigate supply risk, the AI5 chip will be made by two giants: Samsung at its new Taylor, Texas, facility and TSMC at its Arizona plant.

- Timeline: The final “tape-out” (design sign-off) is imminent, with mass production expected by 2027. Work on the next chip, the AI6, is already underway.

Why it matters: Tesla, like Apple and Google, is investing heavily in vertical integration. By custom-designing chips specifically for its robots and cars, the company is betting it can achieve a performance and efficiency edge that general-purpose chips from rivals like Nvidia simply can’t match.

UrviumAI Take: This dual-foundry strategy is a huge logistical challenge, but smart for risk mitigation. You should research the difference between “general-purpose” chips (like Nvidia’s) and “custom inference chips” (like Tesla’s). Understanding that difference will explain why custom hardware is so essential for the high-volume, real-time tasks of a robotaxi fleet.


Jigar Chaudhary is the Editor-in-Chief at UrviumAI, where he oversees coverage of artificial intelligence news, tools, and in-depth studies. With over 5 years of experience analyzing AI and robotics, he focuses on maintaining high editorial standards, accurate reporting, and clear explanations to help readers understand how AI is shaping the future.
