DeepSeek’s New Models Rivaling GPT-5, Gemini-3 Pro 🐳

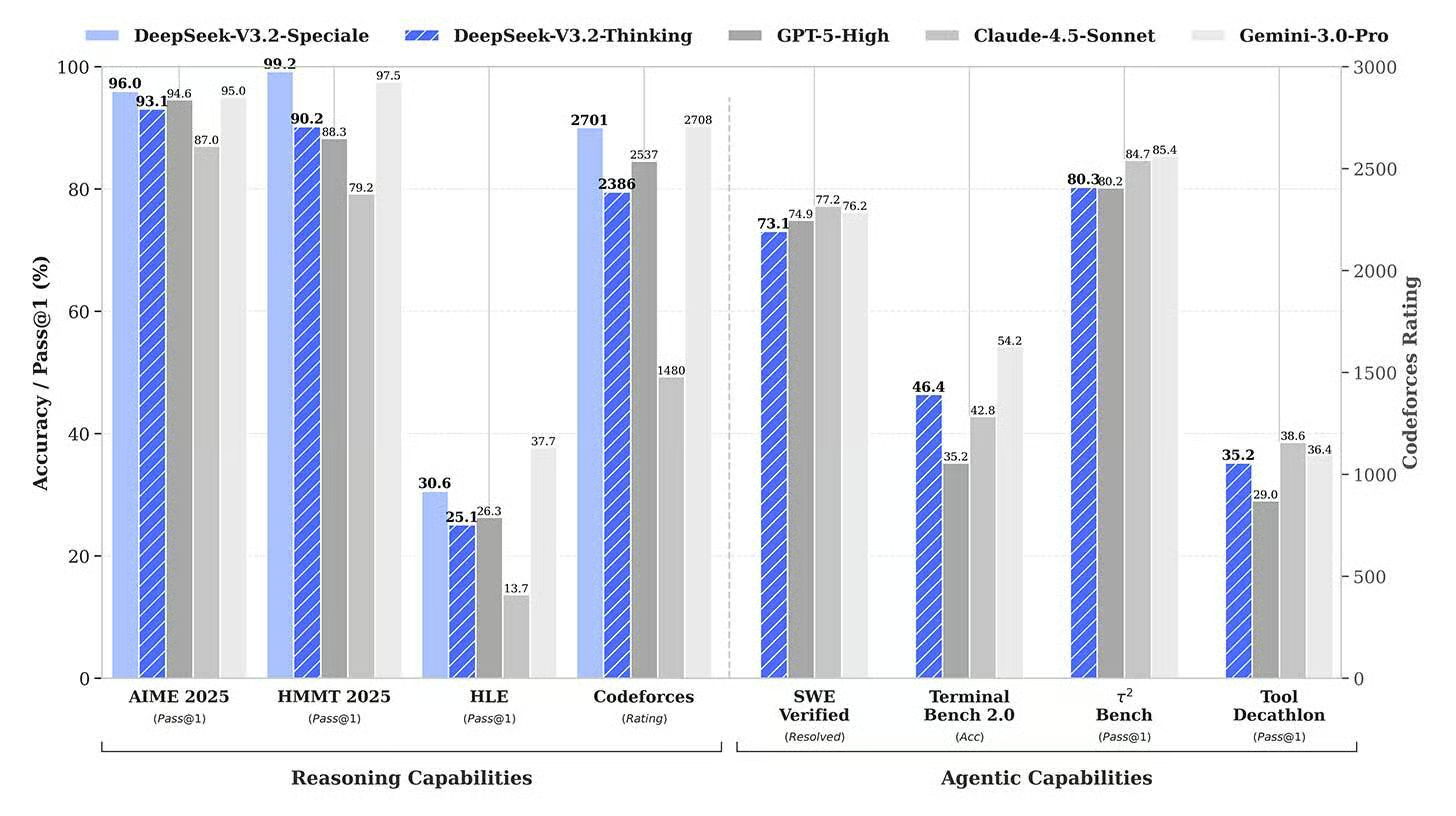

China’s AI labs are not messing around! DeepSeek, the rising star from China, just released two new reasoning models, V3.2 and V3.2-Speciale, that perform on par with the absolute best models out there, such as GPT-5 and Gemini 3 Pro. The best part? They do it at a fraction of the cost, and the model weights are openly released!

Here are the staggering facts:

- Top Tier Performance: The base model, V3.2, matches or comes close to its rivals on math, tool-use, and coding benchmarks. The heavier Speciale variant even surpassed them in several areas, reaching gold-medal-level scores on the 2025 International Mathematical Olympiad (IMO) and International Olympiad in Informatics (IOI).

- Massive Price Cut: DeepSeek is charging a tiny fraction of the cost of its rivals. Their V3.2 price is $0.28 input / $0.42 output per 1M tokens. Compare that to Gemini 3 Pro (around $2 / $12) or GPT-5.1 (around $1.25 / $10)!

- Open Access: Both 685-billion-parameter models are released under an MIT license, meaning anyone can download the weights and use them for free.

Why it matters: This launch confirms DeepSeek is not a one-hit wonder; it is a consistent frontier competitor. For the U.S. labs that charge premium prices for API access, the pressure to justify that price gap just got a lot more intense. Expect to see cost pressure ripple across the entire industry.

UrviumAI Take: The huge price difference is the main story here: it suggests efficiency can compete with brute force. If your company is currently using a premium API (like Gemini 3 Pro or GPT-5.1) for tasks like coding or math, run a small test comparing the cost and accuracy of DeepSeek V3.2 on the same tasks. The savings could be massive!
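To get a rough feel for the cost side of that test, here is a minimal Python sketch that applies the per-1M-token prices quoted above to a sample workload. The workload size (50M input tokens and 10M output tokens per month) and the function names are assumptions made up for illustration, and the Gemini 3 Pro and GPT-5.1 prices are the approximate figures from this article, so check each provider's current pricing before relying on the numbers.

```python
# Prices in USD per 1M tokens as (input, output), taken from the article.
# The Gemini 3 Pro and GPT-5.1 figures are approximate ("around").
PRICES_PER_MILLION = {
    "DeepSeek V3.2": (0.28, 0.42),
    "Gemini 3 Pro": (2.00, 12.00),
    "GPT-5.1": (1.25, 10.00),
}


def workload_cost(input_tokens, output_tokens, price):
    """Return the USD cost of a workload given (input, output) prices per 1M tokens."""
    input_price, output_price = price
    return (input_tokens / 1_000_000) * input_price + (
        output_tokens / 1_000_000
    ) * output_price


def compare_costs(input_tokens, output_tokens):
    """Return {model name: cost in USD, rounded to cents} for the same workload."""
    return {
        name: round(workload_cost(input_tokens, output_tokens, price), 2)
        for name, price in PRICES_PER_MILLION.items()
    }


# Hypothetical monthly workload (an assumption, not a figure from the article).
INPUT_TOKENS = 50_000_000
OUTPUT_TOKENS = 10_000_000

costs = compare_costs(INPUT_TOKENS, OUTPUT_TOKENS)
baseline = costs["DeepSeek V3.2"]

# Print the cheapest model first, with each cost relative to DeepSeek V3.2.
for name, cost in sorted(costs.items(), key=lambda item: item[1]):
    print(f"{name:<15} ${cost:>8.2f}  ({cost / baseline:.1f}x DeepSeek V3.2)")
```

For this assumed workload, the bill comes to about $18.20 on DeepSeek V3.2, $162.50 on GPT-5.1, and $220.00 on Gemini 3 Pro, or roughly 9x and 12x more. This covers only the cost half of the comparison; accuracy still has to be measured by running your own tasks through each model.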

Runway Tops Video Leaderboard with New 4.5 Release 🎥

In the battle for AI video supremacy, a small startup just beat the giants! Runway, one of the pioneers of publicly available AI video generation, just launched Gen-4.5, and it immediately climbed to the top of the text-to-video leaderboard.

Here’s why 4.5 is such a big win:

- The New King: Gen-4.5 (codenamed “Whisper Thunder”) secured the number one spot on the independent Artificial Analysis leaderboard, displacing Google’s Veo and OpenAI’s Sora.

- Cinematic Realism: Runway says the model’s outputs are often “indistinguishable from real-world footage.” It excels in cinematic realism, handling physics, fluid dynamics, and human movement much more naturally than before.

- Consistency: Keeping details like hair, fabric, and objects stable across frames is a key challenge in AI video. Gen-4.5 handles it significantly better, bringing the outputs closer to professional grade.

- David vs. Goliath: Co-founder Cristobal Valenzuela compared their victory over trillion-dollar companies to a “David vs. Goliath” moment, proving that focus can beat scale.

Why it matters: Runway is accelerating the timeline for Hollywood adoption. This new level of realism and natural motion control is the closest we’ve seen to the cinematic quality needed for professional creative workflows. The year-over-year improvement in this space is truly mind-blowing!

UrviumAI Take: Runway’s win here proves that specialized focus can beat huge scale. Pay attention to the model’s ability to handle “fluid dynamics.” Watch the demo videos and see if liquids and flowing objects behave naturally. This is a crucial, high-difficulty test for any “world model” that claims to understand physics.

Kling’s All-in-One Video Model for Generation, Editing 📽️

December is definitely the month of AI video! Following Runway’s launch, Chinese tech company Kuaishou, the maker of Kling AI, just released Kling O1, an all-in-one system designed to handle both video creation and detailed editing using a single model. This is the “Nano Banana” moment for video editing, a nod to the Google image model that made complex image edits possible from a simple text prompt.

Here’s what Kling O1 can do:

- Unified System: O1 accepts up to seven inputs at once (images, videos, subjects, and text), allowing you to generate a clip and immediately edit it without switching tools.

- Edit Anything: Users can easily edit existing footage using simple text commands like “remove bystanders,” “shift to nighttime,” or “swap the character’s outfit” while keeping the original characters consistent.

- Smart Editing: The model handles granular edits without requiring manual masking or frame-by-frame work, making complex VFX-style edits possible with just a text prompt.

- Competition: According to Kling’s own internal tests, O1 beats Google Veo and Runway’s older Aleph on video reference and editing tasks.

Why it matters: Kling O1 solves a major headache for creators: workflow fragmentation. By putting all generation, editing, and restyling capabilities into one unified model, Kuaishou is making high-quality, granular video editing accessible and fast, much like how advanced AI made complex image editing easy earlier this year.

UrviumAI Take: Kling O1’s unified editing capability is highly valuable. Focus on the “remove bystanders” feature. This kind of complex semantic editing (removing an object across multiple frames while maintaining the background consistency) is a fantastic metric for testing how deep the model’s understanding of the “world” truly is.


Jigar Chaudhary is the Editor-in-Chief at UrviumAI, where he oversees coverage of artificial intelligence news, tools, and in-depth studies. With over 5 years of experience analyzing AI and robotics, he focuses on maintaining high editorial standards, accurate reporting, and clear explanations to help readers understand how AI is shaping the future.
