Google’s Flash-y New Gemini 3 Release ⚡️

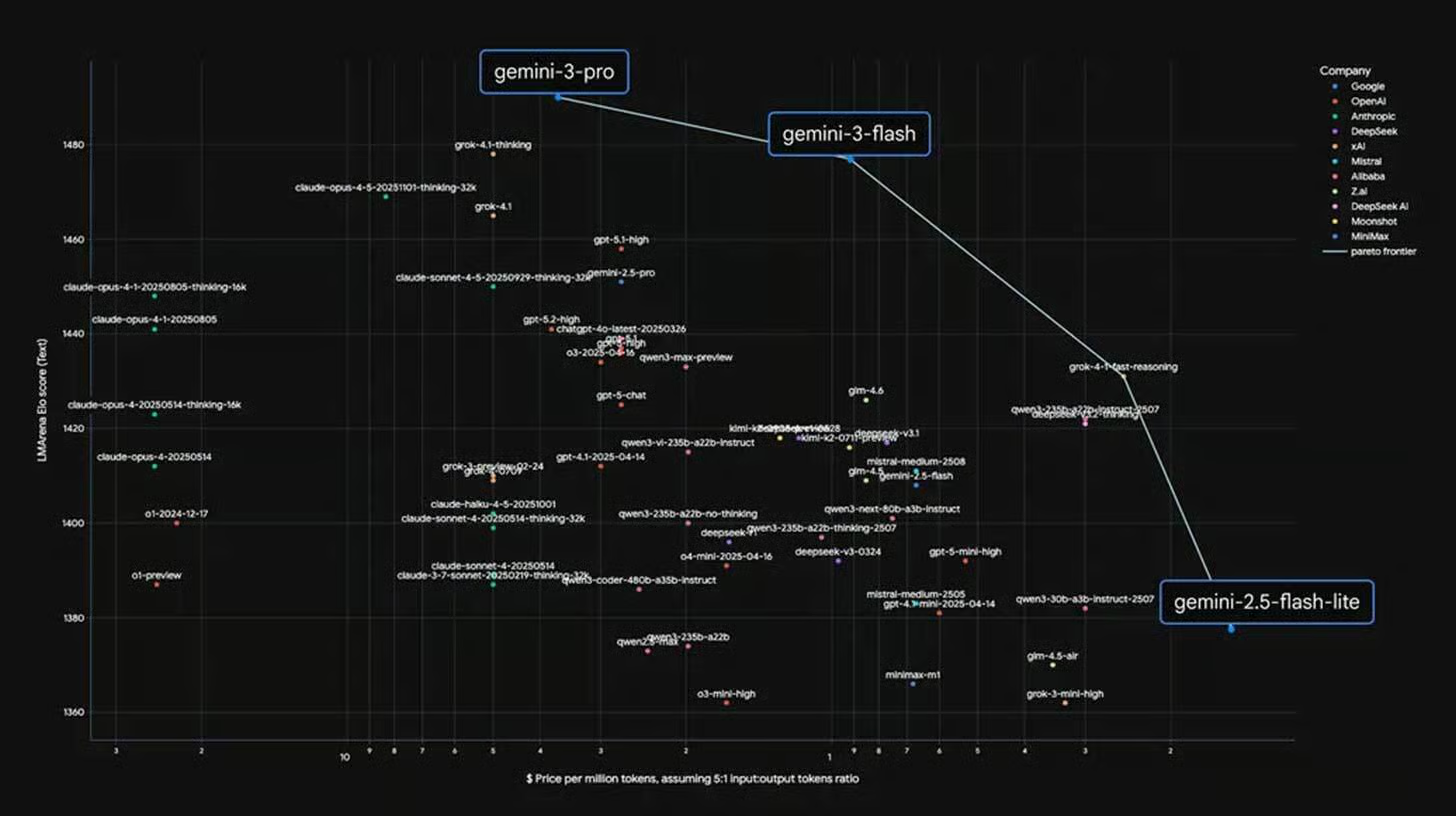

Google just made frontier-level AI the new standard! Following the launch of Gemini 3 Pro, Google has officially released Gemini 3 Flash. “Flash” models are usually smaller and less capable, but this one is a massive exception: it delivers top-tier reasoning at lightning speeds.

Here is why Gemini 3 Flash is changing the game:

- The New Default: 3 Flash is now the default model for the Gemini App and Google Search’s AI Mode. You get PhD-level reasoning without the long wait times.

- Pro-Level Brain: It scored 33.7% on Humanity’s Last Exam, roughly tripling the score of its predecessor (11%) and effectively matching the performance of OpenAI’s GPT-5.2.

- Insane Efficiency: It is 3x faster than previous models and costs only 1/4 the price of Gemini 3 Pro. This makes it a dream for developers building high-volume AI agents.

- Multimodal Master: It excels at “Native Audio” and vision tasks, allowing it to reason across text, images, and audio in real time.

Why it matters: Most companies force you to choose between “smart and slow” or “fast and basic.” Gemini 3 Flash ends that compromise. By making this elite model the default, Google is aggressively eating away at OpenAI’s market share by offering frontier-level intelligence for free to everyday users.

UrviumAI Take: The speed-to-intelligence ratio of 3 Flash is the new industry benchmark. If you use AI for daily research or “vibe coding,” switch your default to Gemini 3 Flash. You’ll find that for 90% of complex tasks, it provides the same quality as “Pro” or “Thinking” models but completes the work before you can finish reading the prompt.

Amazon Discussing $10B+ Investment in OpenAI 💰

The AI alliances are shifting massively! Amazon is reportedly in deep talks to invest a staggering $10 billion or more into OpenAI. If the deal closes, it would value the ChatGPT maker at more than $500 billion, making it one of the most valuable private companies on Earth.

Here is why this “circular deal” is a masterstroke:

- Breaking Exclusivity: OpenAI recently restructured to free itself from Microsoft’s exclusivity. This allows it to court rival cloud giants like Amazon to pay for its massive computing needs.

- The Chip Stipulation: The deal isn’t just about cash. Reports suggest OpenAI would commit to using Amazon’s custom Trainium AI chips. This gives Amazon a huge, high-profile customer to help it fight Nvidia’s total dominance in hardware.

- The $38B Context: This follows a recent 7-year, $38 billion contract between the two for AWS cloud services. OpenAI is now partnered with at least five different cloud providers.

- Amazon’s Hedge: Amazon has already invested billions in OpenAI’s rival, Anthropic. Investing in OpenAI as well ensures Amazon wins no matter which model eventually dominates the market.

Why it matters: For OpenAI, this is about surviving an astronomical “burn rate” ($1.4 trillion in planned spending over 8 years, or roughly $175 billion a year on average). For Amazon, this is about validating its in-house silicon. If the world’s most famous AI models start running on Amazon’s Trainium chips, it proves to the entire industry that there is a viable, cheaper alternative to Nvidia.

UrviumAI Take: This is a “railroad” play. Amazon is building the tracks (AWS) and the engines (Trainium) and then paying the best driver (OpenAI) to use them. Watch the performance data for Trainium3. If OpenAI can train next-gen models on Amazon’s chips without a performance drop, the cost of AI training globally could plummet, potentially ending Nvidia’s pricing monopoly.

Grok Voice Agent API: Smarter, Faster, and Only $0.05/min 🎙️

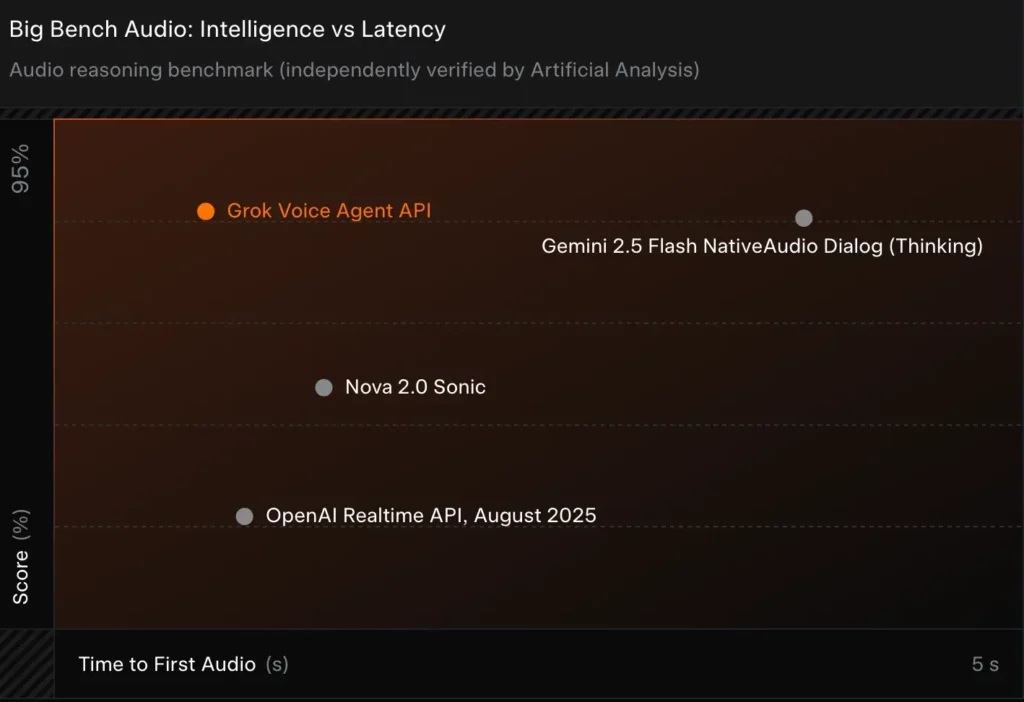

Elon Musk’s xAI just declared war on the voice AI market! The company launched the Grok Voice Agent API, making the same technology that powers Grok in Tesla vehicles and X mobile apps available to all developers. And according to the data, it is currently the king of audio reasoning.

Here is why developers are switching to Grok Voice:

- Benchmark Leader: It officially ranks #1 on Big Bench Audio, the top test for logic and reasoning in voice agents. It scored 95%, beating out rivals from Google and OpenAI.

- Blazing Fast: It features an average “Time to First Audio” of less than 1 second, nearly 5 times faster than its closest competitors. It feels like talking to a real human, not a computer.

- Cost King: Pricing is a simple flat rate of $0.05 per minute. This is roughly half the cost of OpenAI’s Realtime API, making it the most cost-effective way to build advanced voice bots.

- Tesla Tested: Tesla was the primary design partner. The API is built to handle complex, real-time tasks like checking vehicle status, planning road trips, and searching both X and the web simultaneously.
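
To get a feel for what the flat rate means in practice, here is a rough back-of-the-envelope sketch in Python. The function name, the sample workload (1,000 calls a day at 4 minutes each), and the 30-day month are all hypothetical; the only figure taken from the pricing above is $0.05 per minute. The rival rate of $0.10 per minute is simply what “roughly half the cost” would imply, not a published price.

```python
# Flat per-minute rate quoted above for the Grok Voice Agent API.
GROK_RATE_USD_PER_MIN = 0.05


def monthly_voice_cost(calls_per_day, avg_minutes_per_call,
                       rate_usd_per_min=GROK_RATE_USD_PER_MIN, days=30):
    """Estimate monthly spend for a voice bot billed at a flat per-minute rate."""
    total_minutes = calls_per_day * avg_minutes_per_call * days
    return round(total_minutes * rate_usd_per_min, 2)


# Hypothetical workload: 1,000 calls a day, 4 minutes each.
grok_cost = monthly_voice_cost(1000, 4)

# Assumption: "roughly half the cost" implies a rival rate near $0.10/min.
# This is an inference from the claim above, not a published price.
rival_cost = monthly_voice_cost(1000, 4, rate_usd_per_min=0.10)

print(f"Grok: ${grok_cost:,.2f}/month, implied rival: ${rival_cost:,.2f}/month")
```

Swap in your own call volume and average call length to see where the per-minute difference starts to matter for your budget.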

Why it matters: For a long time, high-quality AI voice was either too slow or too expensive for mass use. Grok Voice solves both problems. By providing sub-second latency and rock-bottom pricing, xAI is enabling a new generation of customer support, in-car assistants, and interactive gaming that feels truly natural and responsive.

UrviumAI Take: The $0.05/min flat rate is the most aggressive pricing move in the AI sector this year. If you are building a customer service bot, test Grok’s “auditory cues.” You can prompt the model to [whisper], [sigh], or [laugh], which makes the interaction feel significantly less robotic and more trustworthy to human users.

Jigar Chaudhary is the Editor-in-Chief at UrviumAI, where he oversees coverage of artificial intelligence news, tools, and in-depth studies. With over 5 years of experience analyzing AI and robotics, he focuses on maintaining high editorial standards, accurate reporting, and clear explanations to help readers understand how AI is shaping the future.