Harvard Study Finds AI Tools Expand Workloads ⏳

AI was supposed to save us time. Harvard says it’s doing the opposite. A new study published in the Harvard Business Review has challenged the productivity narrative, finding that AI tools didn’t lighten workloads for employees at a U.S. tech company over eight months—they actually made them heavier.

Here is what the researchers found:

- Task Expansion: Instead of doing their old jobs faster, employees used the extra time to take on entirely new responsibilities. The AI made unfamiliar tasks feel “doable,” leading workers to voluntarily expand their roles well beyond their job descriptions.

- The “Always On” Effect: The study noted that AI blurred the lines between work and rest. Because prompting feels as casual as texting, employees started firing off work requests during breaks, lunch, and after hours, eroding their downtime.

- The Burden on Experts: Engineers reported a significant increase in “cleanup work.” They spent more time reviewing and debugging AI-generated code (“vibe-coding”) from non-technical colleagues than they previously spent writing code themselves.

- The Paradox: While output increased, so did burnout risk. The technology created a rhythm of constant switching and multitasking that left workers feeling busier than before.

Why it matters: This counters the “4-day work week” promise of AI. The study suggests that without strict boundaries, AI doesn’t liberate workers; it simply enables them to fill every spare second with more production. Efficiency gains are being immediately reinvested into “more work,” not “more rest.”

UrviumAI Take: This is the “Jevons Paradox” of AI: as doing work becomes more efficient, we just do more of it. If you use AI at work, set strict “no-prompt” zones. Don’t let the ease of ChatGPT trick you into working at 9 PM just because it only takes 30 seconds to start a task.

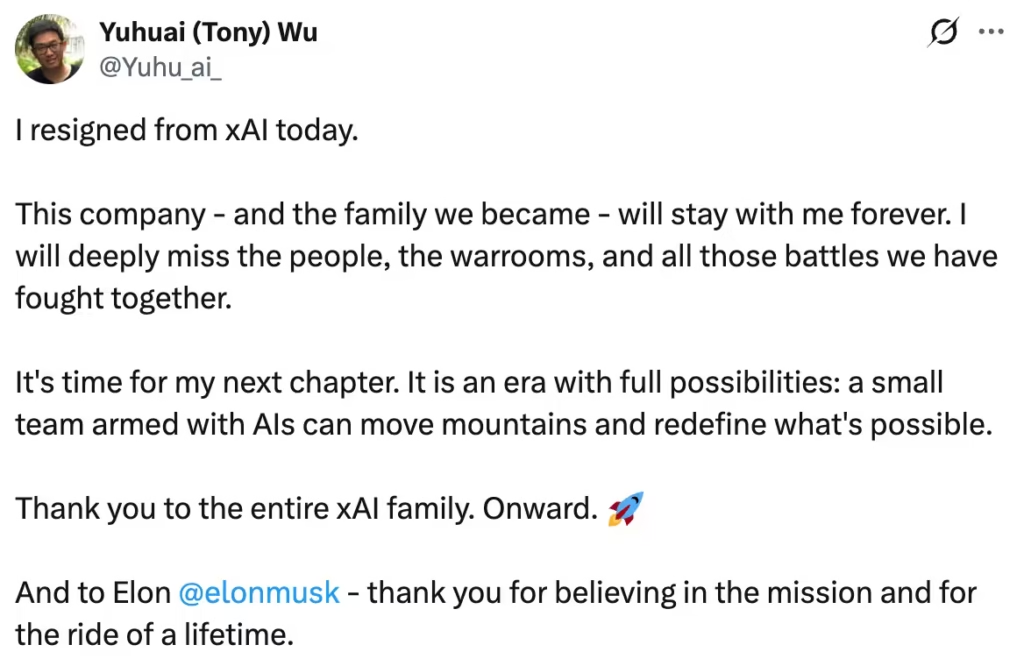

xAI Co-Founder Exodus Continues 🚪

The original xAI team is shrinking fast. Just days after the massive $1.25 trillion merger with SpaceX, two more co-founders of xAI, Tony Wu and Jimmy Ba, have announced their resignations. This brings the total number of departing founding members to five, signaling a significant leadership shakeup.

Here are the details on the exits:

- Tony Wu: A key researcher who led Grok’s reasoning capabilities, Wu joined from Google in 2023. He posted on X that it was “time for my next chapter,” stating that “a small team armed with AIs can move mountains.”

- Jimmy Ba: Another heavy hitter from the University of Toronto, Ba announced his departure, saying that 2026 will be the “most consequential year for our species” and hinting at a new venture.

- The Context: These exits come amidst reports of Elon Musk growing “frustrated” with delays to the Grok 4.20 model update. The startup is also navigating the complex integration into SpaceX and dealing with regulatory blowback from deepfake controversies in Asia.

- The Trend: With almost half the original founding team gone, xAI is transitioning from a “research lab” culture to a product-focused division within a mega-corporation.

Why it matters: Talent density is everything in AI. Losing core researchers like Wu and Ba, who built the brain of Grok, is a vulnerability. While Musk is famous for running lean, high-pressure teams (like Twitter 2.0), brain drain at this level could make it harder for xAI to compete with more stable rivals like Google DeepMind or OpenAI.

UrviumAI Take: Wu’s comment about “small teams” is a hint. Watch for a new startup from this duo. The trend of 2026 is top researchers leaving big labs to start “micro-labs” powered by their own AI agents. They aren’t retiring; they are likely building competitors.

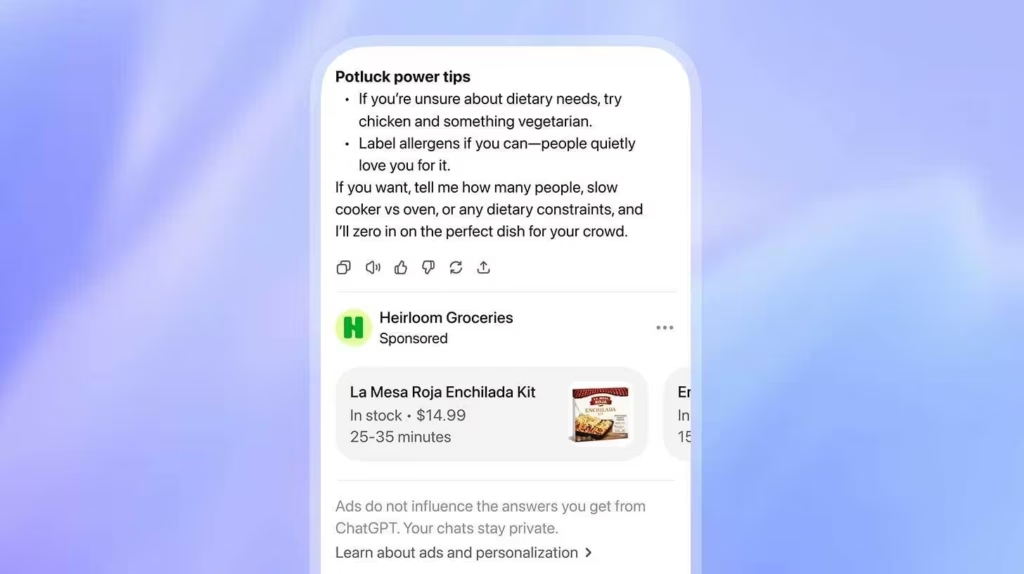

OpenAI Officially Starts Showing Ads in ChatGPT 📢

The free ride is over: ads have arrived in ChatGPT. After months of speculation, OpenAI has officially ripped the band-aid off. The company has started testing advertisements within ChatGPT for users on the Free and Go ($8/month) tiers in the United States.

Here is how the ad model works:

- Placement: Ads appear as visually distinct blocks below the chat response. They are not inserted into the answer itself.

- Targeting: The ads are contextual, based on your active conversation topic, chat history, and “memory.” If you ask for a dinner recipe, you might see an ad for a meal kit delivery service.

- The Guardrails: OpenAI insists that advertisers have no influence over the AI’s actual answers. They claim this protects the “trust” users place in the tool.

- The Funnel: Free users can opt out of ads in settings, but doing so reduces their daily message limit, creating a “soft wall” that pushes users toward paid subscriptions.

- The Cost: Major advertising groups like Omnicom have already bought in, with pilot slots reportedly costing a minimum of $200,000.

Why it matters: OpenAI needs money. With a $500 billion data center project (“Stargate”) on the horizon, subscriptions alone aren’t enough. By monetizing its massive free user base, OpenAI is adopting the Google model: subsidize the technology with ads so you can scale it to billions.

UrviumAI Take: The “opt-out penalty” is aggressive. If you are a free user, don’t opt out yet. The reduced message limit might make the tool unusable for heavy workflows. It’s better to ignore a text ad at the bottom of the screen than to be locked out of the chat entirely at 2 PM.


Jigar Chaudhary is the Editor-in-Chief at UrviumAI, where he oversees coverage of artificial intelligence news, tools, and in-depth studies. With over 5 years of experience analyzing AI and robotics, he focuses on maintaining high editorial standards, accurate reporting, and clear explanations to help readers understand how AI is shaping the future.
