Anthropic Launches Powerful Claude Sonnet 4.6 🧠

Anthropic just made its own flagship look obsolete. The company has rolled out Claude Sonnet 4.6, and despite being the “mid-tier” model, it nearly matches Opus 4.6, the company’s most expensive model, on coding and beats it on several other key benchmarks.

Here are the specs of the new middleweight champion:

- Opus-Level Performance: On SWE-bench Verified (coding), Sonnet 4.6 scored 79.6%, just 1.2 points behind Opus 4.6’s 80.8%. More impressively, it outright beat Opus 4.6 on complex financial analysis and office-task benchmarks.

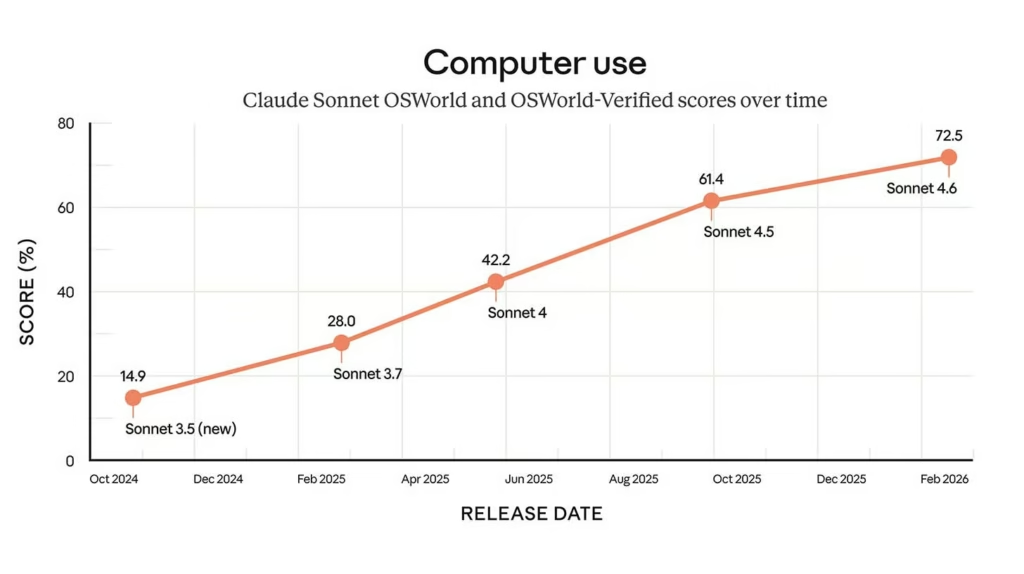

- Computer Use Upgrade: Sonnet’s ability to control a desktop computer skyrocketed. Its score on the OSWorld benchmark jumped from under 15% (late 2024) to a massive 72.5%.

- Massive Context: Sonnet 4.6 now features a 1 million token context window (in beta), allowing it to swallow entire codebases or hundreds of legal documents in a single prompt.

- The Price: It delivers all of this for one-fifth the cost of the Opus tier, keeping its predecessor’s pricing of $3 per million input tokens and $15 per million output tokens.

Why it matters: The AI capability curve is moving so fast that a company’s mid-tier model is overtaking its flagship just weeks after that flagship launched. By packing near-frontier intelligence into a highly affordable, fast-running model, Anthropic is aggressively targeting the high-volume developer market currently being chased by open-weight Chinese models like Qwen-3.5.

UrviumAI Take: This is the ultimate agentic workhorse. If you were paying a premium for Opus to handle heavy coding or data analysis workflows, switch your API calls to Sonnet 4.6 immediately. You will likely see comparable (or better) results while cutting your API bill by roughly 80%.
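To see where the 80% figure comes from, here is a rough back-of-the-envelope sketch. It uses the $3/$15 per-million-token Sonnet pricing quoted above and takes the "one-fifth the cost" ratio at face value to derive an Opus rate; that multiplier is an assumption from this article, not verified list pricing, and the monthly token volumes are purely hypothetical.

```python
# Sonnet 4.6 rates as quoted in this article (USD per million tokens).
SONNET_INPUT_RATE = 3.00
SONNET_OUTPUT_RATE = 15.00

# ASSUMPTION: Opus costs 5x Sonnet, derived from the article's
# "one-fifth the cost" claim. Check current pricing before relying on it.
OPUS_MULTIPLIER = 5


def workload_cost(input_tokens, output_tokens, input_rate, output_rate):
    """Return the USD cost of a workload given per-million-token rates."""
    return (input_tokens / 1_000_000) * input_rate + (
        output_tokens / 1_000_000
    ) * output_rate


def savings_fraction(input_tokens, output_tokens):
    """Fraction of spend saved by moving the same workload from Opus to Sonnet."""
    sonnet = workload_cost(
        input_tokens, output_tokens, SONNET_INPUT_RATE, SONNET_OUTPUT_RATE
    )
    opus = workload_cost(
        input_tokens,
        output_tokens,
        SONNET_INPUT_RATE * OPUS_MULTIPLIER,
        SONNET_OUTPUT_RATE * OPUS_MULTIPLIER,
    )
    return 1 - sonnet / opus


if __name__ == "__main__":
    # Hypothetical monthly volume: 200M input tokens, 40M output tokens.
    monthly_input, monthly_output = 200_000_000, 40_000_000
    sonnet_bill = workload_cost(
        monthly_input, monthly_output, SONNET_INPUT_RATE, SONNET_OUTPUT_RATE
    )
    print(f"Sonnet bill: ${sonnet_bill:,.2f}")
    print(f"Savings vs. Opus: {savings_fraction(monthly_input, monthly_output):.0%}")
```

Because both rates are scaled by the same multiplier, the savings fraction stays the same regardless of the input/output mix. If the real price gap is smaller than 5x, the savings shrink accordingly.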

Figma Turns Claude Code into Editable Designs 🎨

Figma is bridging the gap between prompting and designing. In a direct response to the explosion of AI coding tools, Figma has partnered with Anthropic to launch a new integration called “Code to Canvas.”

Here is how the new workflow operates:

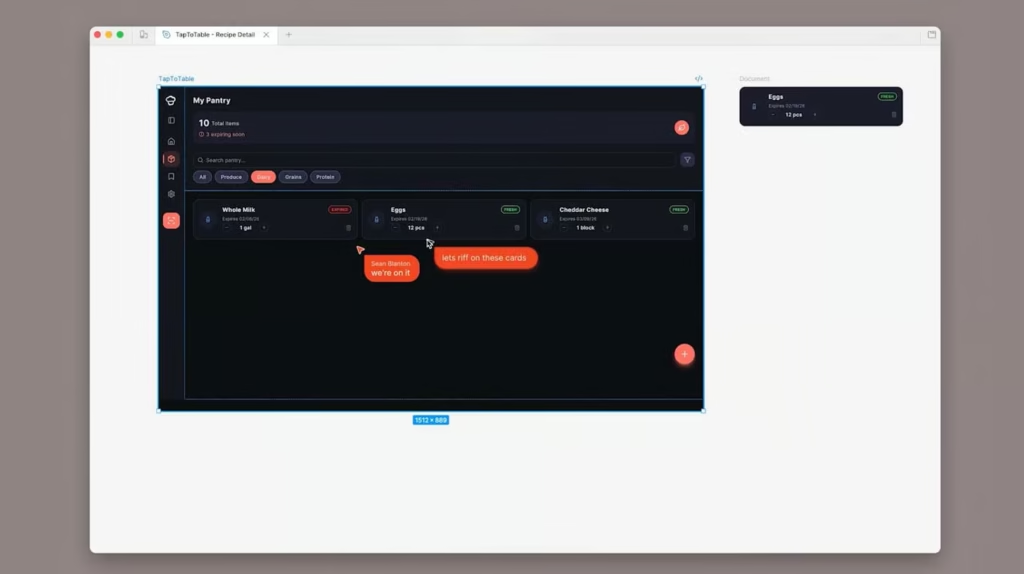

- The Reverse Pipeline: Usually, designers create a UI in Figma, and developers code it. “Code to Canvas” reverses this. Developers can generate a functional UI in Claude Code, and with the command “Send this to Figma,” the rendered browser state is instantly translated into native, editable Figma layers.

- The Tech: This seamless handoff is powered by the Model Context Protocol (MCP), which bridges the AI’s coding environment directly with Figma’s collaborative design canvas.

- User Journeys: Teams can capture entire multi-step flows at once. This allows product teams to take “vibe-coded” prototypes and refine the typography, spacing, and brand guidelines in a visual environment.

- Market Context: This feature launches as Figma’s stock has plummeted roughly 85% amid the “SaaSpocalypse,” as investors panic that AI coding agents will render traditional UI/UX design software obsolete.

Why it matters: Generative UI is fast, but it is rarely perfectly on-brand. Figma is positioning itself as the mandatory “refinement layer” for AI output. If AI is going to generate the first draft of every software interface, Figma wants to be the canvas where humans polish it for production.

UrviumAI Take: This is a defensive masterclass by Figma. If you are a designer worried about AI replacing your job, this is your new workflow. Don’t fight the AI prototypes—import them via “Code to Canvas” and use your human expertise in aesthetics and user psychology to turn them into polished, shippable products.

Apple Fast-Tracks Camera-Equipped AI Wearables 👓

Apple is finally giving Siri eyes. According to new reports from Bloomberg, Apple is accelerating the development of three distinct AI wearable devices designed to provide real-time visual awareness to the iPhone.

Here is the hardware roadmap currently in development:

- Smart Glasses (N50): The most ambitious project is a pair of camera-equipped smart glasses slated for production late this year (targeting a 2027 launch). Designed without an AR display, they will use cameras and mics to let Siri “see” what you see and provide contextual audio assistance.

- The AI Pendant: Apple is also developing a clip-on camera pendant. Internally dubbed the iPhone’s “eyes and ears,” it functions as a discreet, always-on sensor for hands-free AI interactions.

- Camera AirPods: Apple plans to integrate low-resolution infrared sensors into AirPods, with the product expected as early as this year. The sensors would read the surrounding environment, enabling gesture controls and spatial awareness.

- The Brain: All three wearables will act as sensory inputs for a vastly upgraded, chatbot-style Siri expected in iOS 27, which will reportedly be powered by underlying models co-developed with Google’s Gemini.

Why it matters: The smartphone form factor limits AI to a screen. By dispersing cameras onto our faces, chests, and ears, Apple is attempting to build an “ambient computing” ecosystem. If they succeed, the AI won’t just answer questions; it will proactively assist you based on your physical surroundings.

UrviumAI Take: The “No Display” approach for the glasses is the right move. Apple watched Meta succeed with Ray-Ban smart glasses and realized that people want audio AI assistance, not bulky AR holograms. Keep an eye on the Camera AirPods—that is the sleeper hit that seamlessly blends AI into a product millions already wear daily.


Other AI News Today:

- Meta and Nvidia struck a sweeping multiyear partnership to deploy millions of Blackwell and Rubin GPUs, plus Grace CPUs, powering Meta’s $135B AI infrastructure buildout.

- India-based AI startup Emergent reached $100 million in ARR just eight months after launch, raising $70 million from SoftBank and Khosla Ventures to expand its “vibe coding” platform.

- Cohere Labs released Tiny Aya, an open-weight, 3.35B parameter multilingual AI model designed to run locally and support 70+ languages, including underrepresented dialects.

- French AI startup Mistral AI made its first acquisition, buying serverless platform Koyeb to enhance its Mistral Compute infrastructure and build a sovereign European AI cloud.

- WordPress.com rolled out a built-in AI Assistant powered by Nano Banana, allowing users to edit layouts, translate text, and generate images natively inside the editor.

Jigar Chaudhary is the Editor-in-Chief at UrviumAI, where he oversees coverage of artificial intelligence news, tools, and in-depth studies. With over 5 years of experience analyzing AI and robotics, he focuses on maintaining high editorial standards, accurate reporting, and clear explanations to help readers understand how AI is shaping the future.
