Reddit Outlines Plan to Crack Down on AI Bots 🤖

The “Dead Internet Theory” is forcing social media platforms to rethink how they manage users. Reddit CEO Steve Huffman has officially outlined a new plan to separate genuine human users from the rapidly growing flood of automated AI accounts.

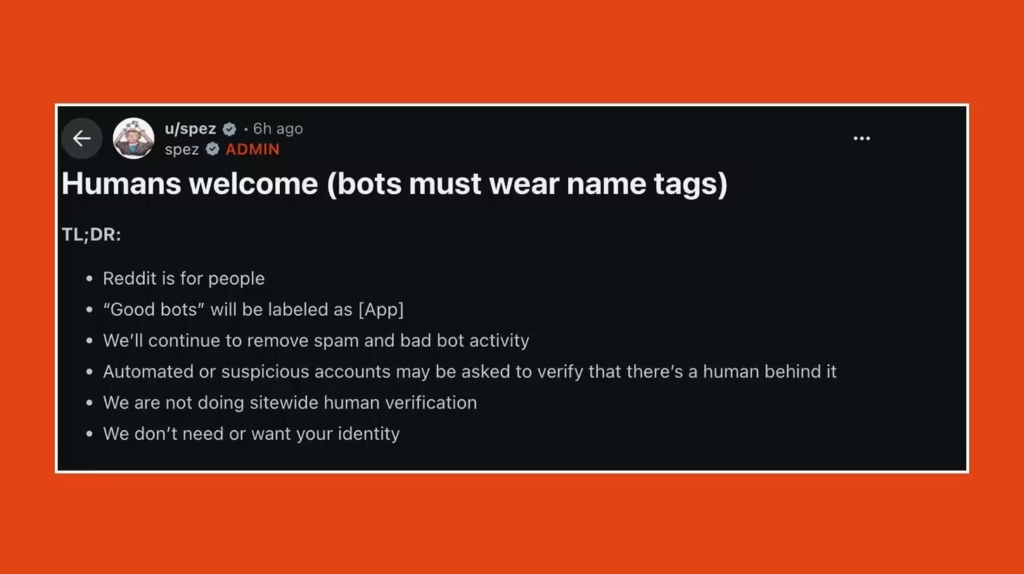

Here is how Reddit plans to manage the bot influx:

- The [App] Label: Accounts running automation in approved ways will now carry a mandatory “[App]” label, clearly identifying them as non-human to the community.

- Optional Verification: Instead of forcing every user to upload a government ID, Reddit will rely on passkeys or Sam Altman’s World ID scanner to confirm proof of humanity when an account exhibits suspicious behavior. Government IDs will remain a last resort.

- Community Policing: Huffman stated that AI-written content is not being outright banned. Instead, individual subreddits will be allowed to set and enforce their own rules regarding AI-generated posts.

- The Threat: The move comes shortly after rival platform Digg folded under a flood of bots, and as Cloudflare data projects that automated internet traffic will surpass human traffic by 2027.

Why it matters: Reddit is attempting a delicate balancing act. It needs to stop malicious bots from destroying the platform’s authenticity, but it knows that forcing millions of anonymous users to upload a driver’s license would kill the site’s core culture. This decentralized, label-heavy approach is a critical test case for how the internet will survive the coming wave of autonomous AI agents.

UrviumAI Take: Anonymity is becoming a luxury of the past. If you run a digital community or a forum, start implementing “Proof of Human” integrations (like World ID or secure passkeys) now. Do not wait until your platform is overrun by AI spam. The platforms that survive the next five years will be the ones that guarantee users they are talking to real people, not a swarm of LLMs talking to each other.

ARC-AGI-3 Benchmark Stumps Every Frontier AI Model 📉

The true test of artificial general intelligence just got significantly harder. François Chollet’s ARC Prize Foundation has officially released ARC-AGI-3, the newest version of its highly respected interactive reasoning benchmark, and the results for current AI models are dismal.

Here is why the new benchmark is turning heads across the industry:

- The Reset: While top models had brute-forced their way to nearly 50% accuracy on the older ARC-AGI-2 test, the new V3 challenge completely stumped them, with not a single model scoring even 1%.

- The Leaderboard: Google’s Gemini Pro scored the highest among all frontier models at just 0.37%, followed by GPT 5.4 High (0.26%), Opus 4.6 (0.25%), and Grok-4.20 (0%).

- The Human Contrast: The tasks involve game-like scenarios with zero instructions. The benchmark tests the ability to discover rules, form goals, and plan strategies from scratch. Humans can solve these tasks with 100% accuracy on the very first try.

- The Prize: The challenge is backed by a $1 million prize, and frontier labs are reportedly treating V3 with intense focus as they attempt to prove their models possess genuine reasoning rather than just pattern memorization.

Why it matters: The ARC challenge is the ultimate reality check for the AI industry. While models can write perfect code and pass the bar exam, ARC-AGI-3 proves they still lack the fundamental, intuitive reasoning capabilities of a human child. The speed at which labs recover from this <1% reset will reveal whether they are actually building reasoning engines, or just spending billions to brute-force the test.

UrviumAI Take: Memorization is not reasoning. Don’t get blinded by the hype when an AI passes a standardized test. Current LLMs are incredibly good at regurgitating patterns they have seen in their training data, but they fail spectacularly when presented with a novel, logic-based problem they haven’t seen before. Understand this limitation before trusting an AI to make autonomous, high-stakes decisions in your business.

Google’s TurboQuant Algorithm Shrinks AI Memory 🧠

A mathematical breakthrough at Google just sent shockwaves through the hardware sector. Google Research has introduced “TurboQuant,” a revolutionary new algorithm that drastically compresses the memory required to run large AI models without losing any accuracy.

Here is the technical breakdown of the memory breakthrough:

- The Bottleneck: As AI conversations get longer, the model’s “working memory” (the key-value, or KV, cache) grows with every token of context, consuming massive amounts of memory, slowing down responses, and driving up costs.

- The Compression: TurboQuant uses a novel two-stage geometric process to shrink this cache storage by over 6x. Crucially, this compression requires no retraining of the model.

- Zero Accuracy Loss: In “needle in a haystack” tests, which bury key details in massive amounts of text, the compressed models scored a perfect 100%.

- The Speed Boost: On top-tier Nvidia H100 chips, the algorithm actually sped up response processing by up to 8x compared to standard methods.

- The Market Reaction: Because this software breakthrough could drastically reduce the amount of physical RAM hardware needed to run AI, shares of top memory chip companies dropped 3-5% following the announcement.
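
To get a feel for the bottleneck described above, here is a rough back-of-the-envelope sketch of how KV cache size scales with conversation length. It uses the standard sizing formula for transformer attention caches. The model shape (32 layers, 8 key-value heads, 128-dimensional heads, a 128,000-token context) is a hypothetical example, not a figure from Google, and the "over 6x" reduction is treated as exactly 6x for simplicity. This is only sizing arithmetic; it does not implement TurboQuant itself.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len, bytes_per_value=2):
    """Approximate KV cache size in bytes for a single sequence.

    bytes_per_value=2 assumes 16-bit storage, a common uncompressed baseline.
    """
    # Factor of 2: one key vector and one value vector per token, per head, per layer.
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * bytes_per_value


GIB = 1024 ** 3

# Hypothetical model shape, chosen only for illustration.
baseline = kv_cache_bytes(num_layers=32, num_kv_heads=8, head_dim=128, seq_len=128_000)

# The reported "over 6x" reduction, assumed here to be exactly 6x.
compressed = baseline / 6

print(f"16-bit cache: {baseline / GIB:.2f} GiB")
print(f"after ~6x compression: {compressed / GIB:.2f} GiB")
```

Under these assumptions, a single long conversation needs roughly 15.6 GiB of cache at 16-bit precision and about 2.6 GiB after a 6x reduction, which is why the cache, rather than the model weights, often limits how many long conversations one chip can serve at once.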

Why it matters: For the last two years, the AI industry’s solution to everything has been “buy more hardware.” TurboQuant proves that algorithmic efficiency is the new frontier. If Google can deploy this across production systems, the cost of running massive AI models will plummet, threatening the premium hardware sales of memory manufacturers while making complex AI wildly cheaper for end users.

UrviumAI Take: Software optimization is about to crush hardware monopolies. Do not lock your enterprise into massive, long-term hardware leasing contracts right now. Breakthroughs like TurboQuant prove that the computing requirements to run frontier AI models are going to drop drastically over the next 12 months. The models are getting smarter, but the math running them is getting lighter.

Other AI News Today:

- Amazon acquired two robotics startups, Rivr and Fauna Robotics, signaling a massive push to expand automation from warehouses into last-mile delivery and the home.

- AI note-taking startup Granola is raising $125 million at a $1.5 billion valuation, planning to add agentic features that take action on user notes.

- Google upgraded its music AI model with the release of Lyria 3 Pro, allowing users to generate full 3-minute songs with structured intros, verses, and choruses.

- Bret Taylor’s startup Sierra launched Ghostwriter, an AI agent that builds other conversational AI agents across voice and chat without manual coding.

- Legal tech startup Harvey raised $200 million in a new funding round co-led by Sequoia, lifting its valuation to $11 billion.

Jigar Chaudhary is the Editor-in-Chief at UrviumAI, where he oversees coverage of artificial intelligence news, tools, and in-depth studies. With over 5 years of experience analyzing AI and robotics, he focuses on maintaining high editorial standards, accurate reporting, and clear explanations to help readers understand how AI is shaping the future.
