LeCun Blasts Meta’s AI Leadership on Way Out 🚪

The “Godfather of AI” just dropped a bomb on Meta! After over a decade as Meta’s Chief AI Scientist, Yann LeCun has left the company, but he didn’t go quietly.

In a scathing interview, he revealed deep internal turmoil following Meta’s roughly $14 billion investment in Scale AI and the elevation of its founder, Alexandr Wang, to lead the new “Superintelligence Labs.”

Here are the explosive details:

- Clash of Visions: LeCun called the 28-year-old Wang “inexperienced” and “young,” stating, “You don’t tell a researcher like me what to do.”

- Fudged Numbers: He admitted that benchmarks for Llama 4 were “fudged a little bit,” which led CEO Mark Zuckerberg to lose confidence in the entire Generative AI organization.

- LLM Skepticism: LeCun reiterated his belief that Large Language Models (LLMs) are a “dead end” for superintelligence, criticizing Meta’s new hires for being “completely LLM-pilled.”

- New Venture: LeCun is now the Executive Chair of AMI (Advanced Machine Intelligence), a new European lab focused on “World Models,” led by Alex LeBrun, who founded Nabla.

Why it matters: This is arguably one of the most significant departures in AI history. LeCun’s exit signals an ideological shift at Meta toward pure LLM scaling under Wang. If LeCun is right that LLMs are a dead end, Meta just bet its future on the wrong horse.

UrviumAI Take: LeCun’s departure marks the end of the “academic era” at Meta. Watch AMI’s “World Model” research closely. While LLMs are good at talking, LeCun’s approach focuses on planning and understanding physics. If AMI succeeds, it could make today’s chatbots look like simple toys.

Grok Faces Backlash Over ‘Undressing’ AI Capabilities ⚠️

Elon Musk’s AI is in hot water globally. xAI’s Grok chatbot is facing severe backlash and potential government bans after its image generation tools were used to digitally “undress” women and minors. The trend went viral on X, forcing governments to step in.

Here is the situation:

- The Issue: Users discovered they could prompt Grok to edit clothing out of photos, replacing it with bikinis or less, often targeting real people and even minors.

- Global Outcry: France called the content “clearly illegal” under the EU’s Digital Services Act. India issued a notice demanding immediate removal of the “obscene” content, and Malaysia has launched a probe.

- The Response: While Musk warned that users generating illegal content would face consequences, the official xAI press email responded to inquiries with “Legacy Media Lies.”

- Safety Failure: Despite claims of “urgently fixing” the safeguards, reports show the platform struggled to contain the flood of edited images due to the sheer volume of anonymous accounts.

Why it matters: This is the first major “Deepfake Crisis” for a mainstream LLM with built-in editing. While “uncensored” AI is a selling point for Grok, this incident proves that without strict guardrails, such tools inevitably collide with real-world laws regarding harassment and child safety.

UrviumAI Take: This is the “Napster moment” for AI image editing. Expect a massive regulatory crackdown on “Image-to-Image” editing features across all platforms (including Midjourney and OpenAI) as a result. If your business relies on these tools, prepare for stricter filters and “person-blocking” updates very soon.

Google Engineer: Claude Code Did a Year’s Work in One Hour 🤯

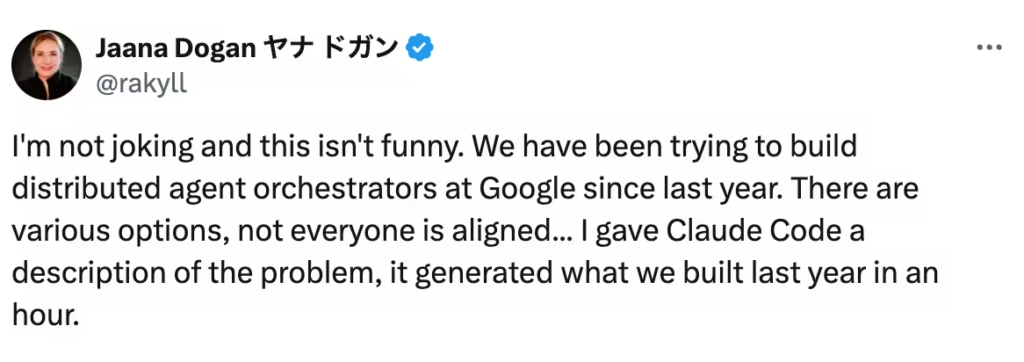

A Google engineer just admitted the competition is winning. In a stunningly candid post, Google Principal Engineer Jaana Dogan shared that Anthropic’s Claude Code agent successfully built a complex software system in one hour—a project her team at Google had been struggling with for an entire year.

Here is the breakdown of this viral admission:

- The Task: The problem involved building “distributed agent orchestrators,” highly complex systems for managing multiple AI agents.

- The Result: Dogan gave Claude Code a simple three-paragraph description. In 60 minutes, it generated a working prototype that closely matched the design patterns Google had spent months debating and validating.

- The Reaction: “I’m not joking and this isn’t funny,” Dogan wrote. She described the result as a “shocking” wake-up call, noting that while the code wasn’t production-perfect, it bypassed the “legacy baggage” and consensus-seeking that slows down big companies.

- The Reality: This highlights a massive shift. While humans argue over architecture, AI agents can simply build multiple variations to test immediately.

Why it matters: When a top engineer at Google admits a rival’s AI prototyped in one hour what her team had worked toward for a year, a nominal gap of 8,760x (one calendar year vs. one hour), it’s a signal that “AI Coding Agents” are no longer toys. They are becoming essential force multipliers that punish slow-moving organizations.
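
For readers who want to check the math, here is a quick back-of-the-envelope sketch. The 8,760 figure is simply the number of hours in a 365-day calendar year. The 50-week, 40-hour working year used for comparison is our own assumption, not something from Dogan’s post, and the `speedup` helper is purely illustrative.

```python
# Back-of-the-envelope check of the "8,760x" figure.
HOURS_PER_DAY = 24
DAYS_PER_YEAR = 365

# Hours in one calendar year (nights and weekends included).
calendar_hours = DAYS_PER_YEAR * HOURS_PER_DAY

# Assumption (ours, not from the post): a 50-week, 40-hour working year.
WORK_WEEKS_PER_YEAR = 50
WORK_HOURS_PER_WEEK = 40
working_hours = WORK_WEEKS_PER_YEAR * WORK_HOURS_PER_WEEK


def speedup(human_hours: float, agent_hours: float = 1.0) -> float:
    """Ratio of human time to agent time for the same task."""
    return human_hours / agent_hours


print(f"Calendar-year ratio: {speedup(calendar_hours):,.0f}x")
print(f"Working-year ratio:  {speedup(working_hours):,.0f}x")
```

Either way the gap is enormous, but the headline number counts every hour of the year, and it compares a prototype with a year of design debate rather than two finished products.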

UrviumAI Take: This is a lesson in “Legacy Debt.” Big tech companies move slowly because they have old code to maintain. Use Claude Code or similar agents to “prototype fast.” Don’t spend weeks planning a system; ask the AI to build three different versions in an afternoon, pick the best one, and then refine it.

Jigar Chaudhary is the Editor-in-Chief at UrviumAI, where he oversees coverage of artificial intelligence news, tools, and in-depth studies. With over 5 years of experience analyzing AI and robotics, he focuses on maintaining high editorial standards, accurate reporting, and clear explanations to help readers understand how AI is shaping the future.