Brin Mobilizes DeepMind to Chase Anthropic on Code 💻

The race for AI dominance has triggered an all-hands-on-deck response at the highest levels of Google. Co-founder Sergey Brin is personally leading a new “strike team” within DeepMind, explicitly tasked with closing the coding capability gap between Gemini and Anthropic’s Claude models.

Here are the details behind Google’s internal programming push:

- The Leadership: Brin is rallying the group alongside CTO Koray Kavukcuoglu and research engineer Sebastian Borgeaud, who previously led DeepMind’s pretraining efforts.

- The Motivation: Internal evaluations at DeepMind reportedly rate Claude’s code-writing abilities significantly higher than Gemini’s. Brin pitched the new initiative to staff by emphasizing that elite coding capability is the shortest and most critical route to achieving self-improving AI systems.

- Internal Mandates: Gemini engineers are now required to utilize Google’s internal agent tools for complex tasks, with their usage actively tracked on a company-wide leaderboard known as Jetski.

- The Goal: The primary objective isn’t just a consumer product response; it is to automate Google’s internal software engineering workflows and close the operational gap with the deeply embedded AI systems utilized by OpenAI and Anthropic.

Why it matters: Coding is no longer just a feature; it is the central battleground in the race toward Artificial General Intelligence (AGI). When a co-founder who stepped back from day-to-day leadership in 2019 returns to personally lead an engineering strike team, it signals that Google views its current programming deficit as an existential threat. Google is betting that the first company to build an AI that can reliably write code and help train the next generation of AI will win the entire race.

UrviumAI Take: Coding is the bridge to autonomous self-improvement. Stop thinking of AI coding assistants as just tools to help human developers type faster. The frontier labs are racing to build models that can rewrite their own underlying architecture. If your enterprise software strategy doesn’t heavily involve using AI agents to autonomously manage, refactor, and deploy your codebases today, you will be structurally unable to compete when these self-improving systems reach the public market.

Adobe’s New Agentic AI Platform for Enterprises 🎨

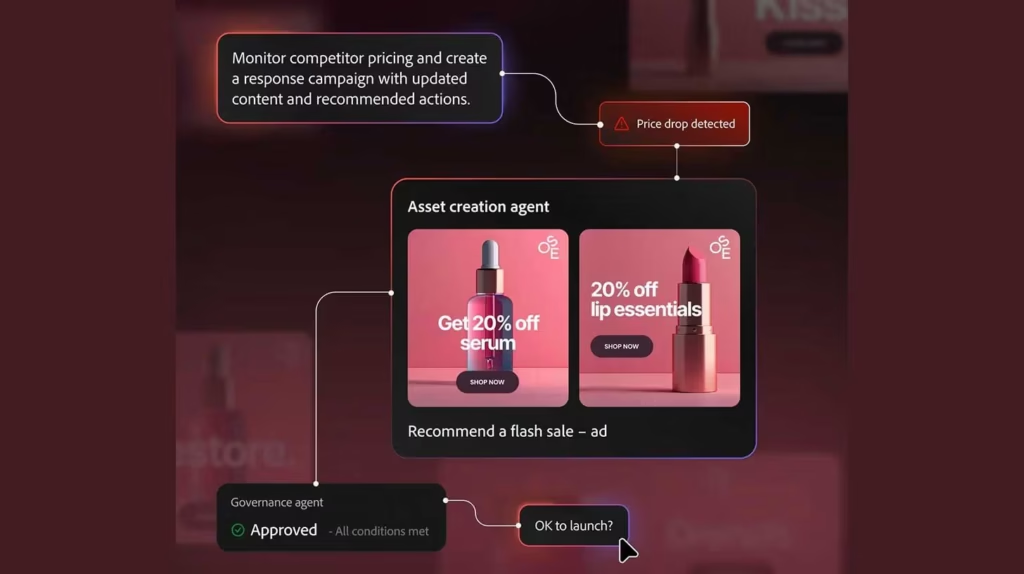

The orchestration layer of the digital marketing industry is undergoing a massive automated upgrade. Adobe has officially introduced “CX Enterprise” at its Adobe Summit, launching a comprehensive agentic AI platform built specifically for enterprise coordination.

Here is how Adobe is weaving AI agents into the modern enterprise workflow:

- The Orchestration Layer: CX Enterprise unites three critical business pillars under a single agentic umbrella: brand visibility, content supply chain, and customer engagement.

- Agentic Execution: The new CX Enterprise Coworker acts as a central manager, assessing a specific user goal, assembling the correct specialized agents, creating a tactical plan, and executing multi-step actions autonomously.

- Model Interoperability: Adobe’s Marketing Agent is model-agnostic, plugging directly into third-party AI assistants such as ChatGPT, Claude, Gemini, and Copilot to coordinate tasks between external agents and internal Adobe applications.

- Custom Workflows: Adobe also launched a dedicated agent skills catalog, empowering enterprise teams to build, customize, and reuse their own automated workflows within the platform.

Why it matters: Legacy software platforms are facing an existential threat from native foundation models. If Claude or ChatGPT can autonomously design a marketing campaign and push it live, users may no longer need traditional software. Adobe is aggressively defending its turf by positioning itself as the ultimate “middleman.” By building a platform that orchestrates all the different AI models and grounds them in a company’s specific brand data, Adobe ensures it remains the indispensable command center for enterprise creative teams.

UrviumAI Take: Interoperability is the ultimate defense against model lock-in. Do not force your creative and marketing teams to commit exclusively to one frontier model. The strength of Adobe’s new CX Enterprise lies in its ability to route tasks between ChatGPT, Claude, and Gemini depending on the goal. Build your marketing architecture around an agnostic orchestration layer so you can seamlessly swap in the best-performing AI agents without disrupting your company’s core content supply chain.

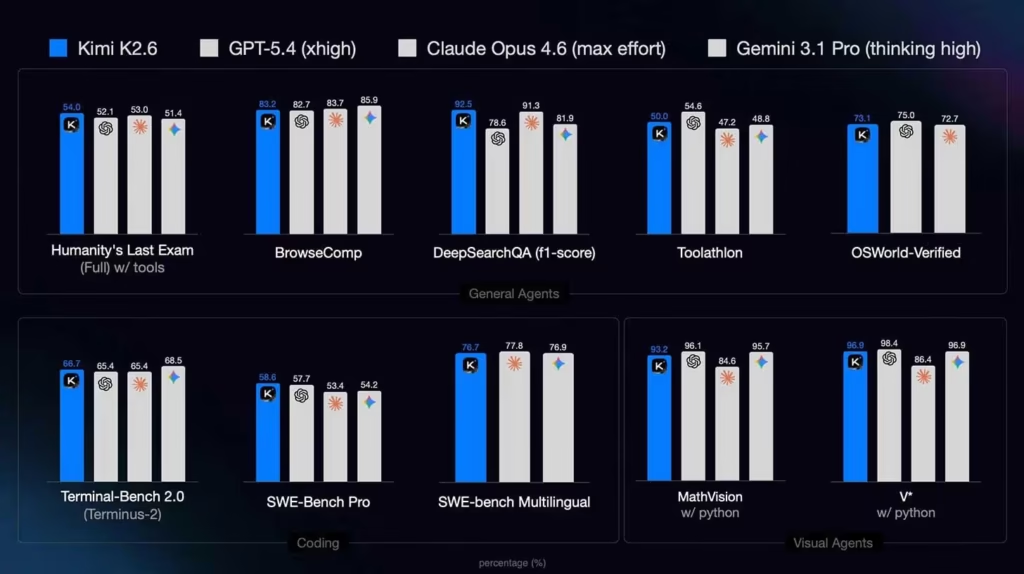

Moonshot AI’s Kimi K2.6 Closes Open-Source Gap 🚀

The open-source AI community has largely caught up to the proprietary frontier labs. Moonshot AI has open-sourced Kimi K2.6, a powerful agentic coding model that matches, and on some benchmarks outperforms, the industry’s most expensive closed systems.

Here are the headline claims behind the new open-weight champion:

- Benchmark Performance: K2.6 delivers state-of-the-art performance at a fraction of the cost, beating top-tier models like GPT-5.4, Claude Opus 4.6, and Gemini 3.1 Pro on select demanding benchmarks such as Humanity’s Last Exam (reasoning) and SWE-Bench Pro (coding).

- Long-Horizon Stability: The model excels at sustained, complex work. Demos show K2.6 operating for 12+ hours straight across 4,000+ tool calls, successfully refactoring massive, 8-year-old legacy codebases without losing context.

- Agent Swarms: Setting a new standard for horizontal scaling, K2.6 can spin up and coordinate 300 sub-agents in parallel to execute large automated workflows.

- Ecosystem Ready: Always-on autonomous agents such as OpenClaw and Hermes already run natively on K2.6, and early tests show the model operating for five days straight without human intervention.

Why it matters: The narrative that open-source models are hopelessly trailing Silicon Valley giants by 12 months has just been shattered. Kimi K2.6 gives enterprise developers a capable, cost-effective alternative for deploying autonomous agents. As the major proprietary labs increasingly throttle user access and raise API pricing for heavy coding sessions, open-weight models like K2.6 offer a highly capable escape hatch for large engineering workflows.

UrviumAI Take: Open-source inference is the ultimate equalizer. Stop burning your entire IT budget on premium API credits for long-horizon agentic tasks. Models like Kimi K2.6 show that you can now run massive, multi-day coding and refactoring workflows on open-weight models with little or no loss in performance. Move your high-volume, repetitive agentic swarms to locally hosted or cloud-deployed open models now to drastically cut your operational costs.


Other AI News Today:

- Apple officially announced that CEO Tim Cook will step down on September 1, 2026, with Senior Vice President of Hardware Engineering John Ternus named as his successor.

- Anthropic has deepened its partnership with Amazon, securing up to 5 gigawatts of compute capacity in exchange for a $100 billion AWS commitment.

- OpenAI launched Chronicle, a Codex preview feature for Pro users on Mac that runs background agents to capture screen context and build persistent coding memories.

- Ex-Meta chief AI scientist Yann LeCun stated the public should ignore AI lab executives like Sam Altman and Dario Amodei regarding AI’s impact on the labor market.

- Tinder and Zoom partnered with Sam Altman’s “World” to allow users to verify their identities via iris scans to combat the massive rise of AI bots and deepfakes.

Jigar Chaudhary is the Editor-in-Chief at UrviumAI, where he oversees coverage of artificial intelligence news, tools, and in-depth studies. With over 5 years of experience analyzing AI and robotics, he focuses on maintaining high editorial standards, accurate reporting, and clear explanations to help readers understand how AI is shaping the future.
