U.S. Officials Summon Wall Street Over Mythos AI 🏦

The offensive capabilities of frontier AI have triggered a high-level national security response. U.S. Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell summoned Wall Street CEOs to an urgent meeting on the cybersecurity risks posed by Anthropic’s new Mythos model.

Here is the breakdown of the emergency meeting:

- The Threat: Regulators are deeply concerned that the Mythos AI system is uniquely capable of identifying and aggressively exploiting zero-day vulnerabilities across major software and financial infrastructure.

- The Summons: Bessent and Powell warned the chief executives of the nation’s largest banks that if malicious actors obtained the model’s findings, they could turn them into a devastating playbook for attacks on the financial system.

- Restricted Access: Citing these risks, Anthropic is strictly limiting access to the model, sharing it only with a select group of defensive security firms (Project Glasswing) while rigorous safety assessments continue.

- Global Alarm: The capabilities of Mythos have also prompted active risk reviews by authorities in the UK and Canada, highlighting escalating global concern over dual-use AI models.

Why it matters: When the Federal Reserve and the Treasury Department call an emergency meeting to warn banks about a single AI model, the era of treating artificial intelligence as a harmless software tool is over. Government regulators now view advanced reasoning models not just as economic disruptors but as potential systemic, nation-state-level threats to the global financial system.

UrviumAI Take: AI regulation is shifting from consumer protection to national defense. If your enterprise operates in a highly regulated sector like finance, healthcare, or critical infrastructure, you should audit your network against AI-driven cyber threats now. The U.S. government is openly warning that models like Mythos can spot vulnerabilities human engineers miss. Traditional security patching alone is no longer enough; you need AI-native, autonomous defensive systems to counter these emerging automated threats.

AI Spots GLP-1 Side Effects Missed by Trials 💊

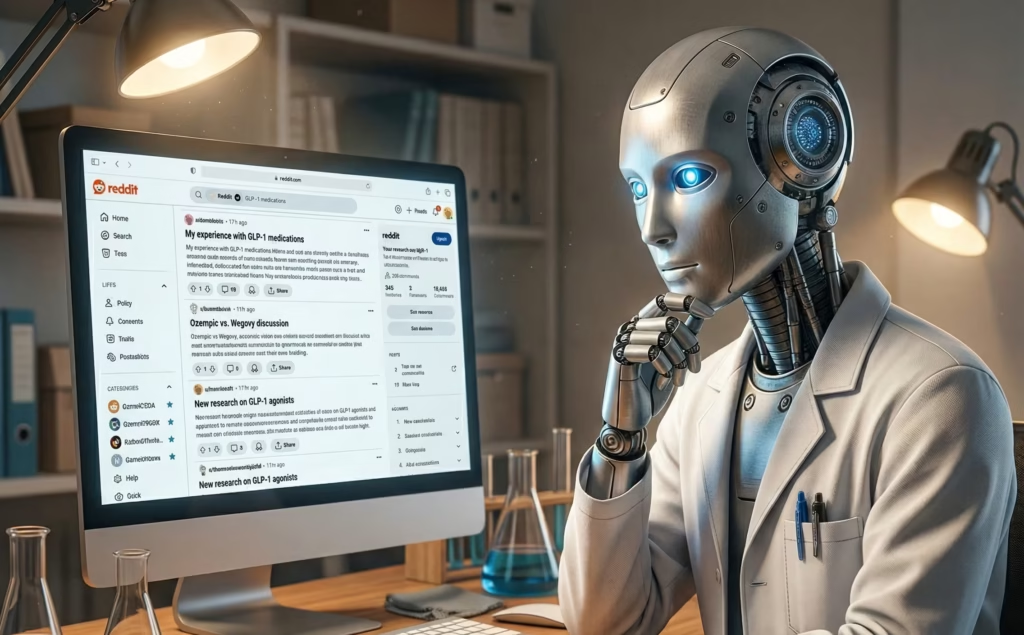

The collective voice of the internet is proving to be a valuable source of drug-safety signals. Researchers at the University of Pennsylvania have published a study that used AI “computational social listening” to uncover drug side effects that traditional clinical trials missed.

Here is how AI is transforming pharmacovigilance:

- The Dataset: The research team fed over 400,000 Reddit posts, covering more than five years of real-world discussions about GLP-1 drugs (Ozempic and Mounjaro), into AI models such as GPT and Gemini.

- The Analysis: The AI mapped the symptoms described in casual posts from 67,000 users to standardized medical terminology, turning conversational text into structured data.

- The Discoveries: Nearly half the sample reported at least one side effect. The AI flagged menstrual irregularities, chills, and hot flashes, symptoms that are absent from the drugs’ official warning labels.

- The Blind Spot: Fatigue ranked as the second most common complaint among real-world users, even though it barely registered against the side-effect reporting thresholds of the original clinical trials.
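The two steps above (normalizing casual wording to standard terms, then counting how many users report each one) can be illustrated with a simplified sketch. The Penn team used LLMs such as GPT and Gemini for the mapping; the sketch below swaps in a tiny hand-written lexicon so the logic is easy to follow. Every phrase, term, and post in it is a hypothetical example, not data or code from the study, and the term names are only loosely modeled on a controlled vocabulary such as MedDRA.

```python
from collections import Counter

# Hypothetical lexicon: colloquial phrases -> standardized symptom terms.
# Illustrative only; the study used LLMs, not a fixed phrase list.
LEXICON = {
    "wiped out": "Fatigue",
    "exhausted": "Fatigue",
    "so tired": "Fatigue",
    "chills": "Chills",
    "shivering": "Chills",
    "hot flashes": "Hot flush",
    "irregular period": "Menstrual disorder",
    "period is late": "Menstrual disorder",
    "nauseous": "Nausea",
    "queasy": "Nausea",
}


def normalize(post: str) -> set[str]:
    """Return the set of standardized terms mentioned in one post (substring match)."""
    text = post.lower()
    return {term for phrase, term in LEXICON.items() if phrase in text}


def tally(posts_by_user: dict[str, list[str]]) -> tuple[Counter, int]:
    """Count users (not posts) reporting each term, plus users reporting any term."""
    per_term = Counter()
    users_with_any = 0
    for posts in posts_by_user.values():
        terms: set[str] = set()
        for post in posts:
            terms |= normalize(post)
        per_term.update(terms)  # a user counts once per term, however often they mention it
        if terms:
            users_with_any += 1
    return per_term, users_with_any


if __name__ == "__main__":
    # Hypothetical posts grouped by (anonymized) user.
    sample = {
        "u1": ["Week 3 on the shot and I'm wiped out by 2pm", "Also really queasy after dinner"],
        "u2": ["Down 12 lbs, no complaints so far"],
        "u3": ["Anyone else get chills at night?", "So tired lately"],
    }
    counts, n_any = tally(sample)
    print(counts.most_common())
    print(f"{n_any}/{len(sample)} users reported at least one side effect")
```

Counting per user rather than per post keeps one prolific poster from inflating a symptom’s frequency, which is how a figure like “nearly half the sample reported at least one side effect” is meant to be read.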

Why it matters: Clinical trials are strictly controlled, short-term environments that often miss the messy, long-term realities of taking a drug. By turning massive social media platforms like Reddit into a searchable, structured medical database, LLMs have unlocked a revolutionary new method for monitoring public health. It allows researchers to bypass the doctor’s office and listen directly to patients at an unprecedented, global scale.

UrviumAI Take: Social media is the world’s largest unstructured database. If your business relies on consumer feedback, market research, or product safety, you should implement “computational social listening.” Do not rely solely on formal surveys or customer service tickets. People complain openly on platforms like Reddit and X. By feeding those massive, unfiltered text streams into an LLM, you can extract standardized insights about your product’s performance, potentially before they show up in official reports.

Anti-AI Suspect Arrested for Attacking Sam Altman’s Home 🚨

The societal anxiety surrounding artificial intelligence has violently spilled over into the physical world. A 20-year-old suspect, Daniel Moreno-Gama, was arrested by San Francisco police after throwing a Molotov cocktail at the home of OpenAI CEO Sam Altman.

Here are the details surrounding the targeted attack:

- The Incident: The incendiary device struck a gate at Altman’s residence in the early hours of the morning. Fortunately, no injuries were reported.

- The Arrest: Roughly an hour later, police apprehended Moreno-Gama near OpenAI’s headquarters, where he was allegedly issuing further threats to burn down the building.

- The Motive: The suspect allegedly posted under the handle “Butlerian Jihadist” on the Discord server of PauseAI (an advocacy group that lobbies to halt AI development). He had previously published essays warning that AI would bring about the end of humanity.

- Altman’s Response: Following the attack, Altman published a personal essay calling public anxiety over AI “justified.” He admitted to past industry mistakes and called for de-escalation, likening the corporate power struggle over AGI to a “ring of power.”

Why it matters: This attack is a dark milestone for the tech industry. It shows that the “doomer” narrative (the belief that AI is an imminent, existential threat to human survival) is no longer confined to online debates on Reddit and X. As AI models become increasingly autonomous and disruptive to the global economy, tech leaders like Sam Altman are becoming physical lightning rods for the anger and fear felt by a public that believes it has no control over the future.

UrviumAI Take: Technological disruption always triggers cultural backlash. Do not dismiss anti-AI sentiment as a fringe internet movement. When 4 in 5 Americans report being worried about the societal impacts of AI, enterprise leaders must tread carefully. If your company is aggressively deploying AI to automate jobs or replace human interactions, prioritize transparent communication and ethical transition plans, because public resentment against aggressive automation is rapidly going mainstream.

Other AI News Today:

- A new analysis by AI startup Oumi reveals that Google’s AI Overviews are accurate 85-91% of the time, which, across trillions of searches, translates into millions of errors every day.

- Meta has reportedly hired three key executives from OpenAI’s Stargate infrastructure project to help build out the new Meta Compute group.

- Anthropic hosted Christian leaders at its headquarters for a summit to discuss Claude’s moral development, ethical boundaries, and responses to human grief.

- Anthropic is reportedly exploring the development of its own custom AI chips as its revenue nears a $30 billion run rate and its compute demand skyrockets.

- OpenAI expects to generate $2.5 billion in ad revenue in 2026 and has shared an aggressive roadmap with investors to reach $100 billion in ad revenue by 2030.

Jigar Chaudhary is the Editor-in-Chief at UrviumAI, where he oversees coverage of artificial intelligence news, tools, and in-depth studies. With over 5 years of experience analyzing AI and robotics, he focuses on maintaining high editorial standards, accurate reporting, and clear explanations to help readers understand how AI is shaping the future.