Perplexity Plugs Its AI Agent Into Bank Accounts 🏦

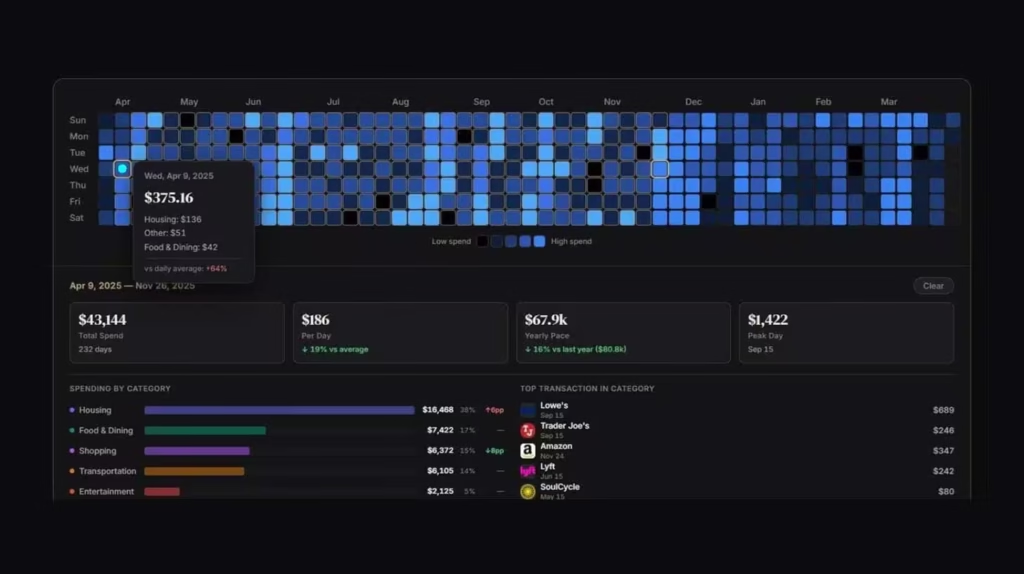

The AI search engine that set out to challenge Google is officially pivoting into personal finance. Perplexity has rolled out a major new integration with Plaid that lets users connect their bank accounts, credit cards, and loans directly to its autonomous “Computer” agent.

Here is how Perplexity is transforming into an automated financial hub:

- The Integration: By tapping into Plaid’s network of over 12,000 financial institutions, Perplexity Computer now has secure, read-only access to users’ live financial data.

- Agentic Finance: Instead of just searching for financial advice, users can type plain-language prompts asking the agent to build customized budgets, net worth trackers, and debt payoff plans based on their actual spending habits.

- The Ecosystem: This follows Perplexity’s recent U.S. tax integration, which allows the AI to autonomously fill out IRS forms and review professionally prepared tax returns.

- The Growth: The pivot from passive search to active, agentic task completion appears to be lucrative; the launch of Perplexity Computer has reportedly pushed the company’s annual recurring revenue (ARR) past $450 million within weeks.

Why it matters: Perplexity built its reputation by trying to out-Google Google, but this move fundamentally changes its trajectory. By giving its AI agent direct access to live bank feeds, Perplexity is no longer just competing with search engines; it is now a direct competitor to established personal finance players like YNAB and TurboTax.

UrviumAI Take: Search is evolving from information retrieval to task execution. Pay close attention to the shift from passive chatbots to active agents. If you are building a consumer app, providing information is no longer enough. Your users expect your software to securely connect to their personal data and actually do the work for them. Integrating secure, read-only API connectors (like Plaid) into your AI workflows is how you turn a novelty chatbot into an indispensable daily tool.

Anthropic Loses Appeal to Block Pentagon Ban ⚖️

The U.S. government is flexing its sovereign authority over the artificial intelligence industry. A federal appeals court in Washington, D.C., has denied Anthropic’s request to temporarily block the Pentagon’s decision to label the AI startup a “supply-chain risk.”

Here is the breakdown of the high-stakes legal defeat:

- The Ruling: The three-judge panel refused to block the blacklisting, stating that the equitable balance cuts in favor of the government. The judges explicitly ruled that national security and military readiness outweigh the “relatively contained risk of financial harm to a single private company.”

- The Origin: The dispute ignited after Anthropic demanded ethical limits on how the military could use its Claude AI models, specifically prohibiting their use in autonomous weapons and surveillance.

- The Escalation: The Pentagon forcefully rejected those terms, arguing that existing laws already govern military conduct. The DoD retaliated by applying a supply-chain risk designation, effectively blocking defense contractors from using Anthropic’s technology.

- Legal Chaos: Adding to the confusion, a separate federal court in California recently granted Anthropic an injunction against a related ban, creating significant legal and compliance uncertainty for government contractors and enterprise partners.

Why it matters: This ruling sets a chilling precedent for AI labs attempting to enforce corporate ethics on the U.S. military. The court clearly signaled that on matters of national security and military readiness, the Department of Defense will not be constrained by the Terms of Service of a Silicon Valley tech company. For Anthropic, losing access to the multi-billion-dollar federal defense sector would be a major financial blow in its arms race against OpenAI.

UrviumAI Take: Corporate ethics end where national security begins. If you are an enterprise vendor seeking lucrative federal or defense contracts, you must leave your ideological restrictions at the door. As this ruling shows, the U.S. government is willing to aggressively weaponize supply-chain and national security designations to crush tech companies that attempt to dictate how the military operates. You either play by the Pentagon’s rules, or you lose access to the biggest client in the world.

OpenAI Readies Advanced Cybersecurity Product 🛡️

The offensive capabilities of frontier AI models have reached a critical tipping point. In response, OpenAI is quietly finalizing a new product armed with advanced cybersecurity capabilities, opting for a highly restricted rollout over fears of misuse.

Here is how OpenAI is attempting to secure the digital landscape:

- The Rollout: According to Axios, OpenAI is preparing to release the new, highly capable cybersecurity product exclusively to a small, handpicked set of trusted partners, avoiding a broad public launch.

- The Program: The product is being distributed through OpenAI’s “Trusted Access for Cyber” pilot program, which was launched in February alongside GPT-5.3-Codex to accelerate legitimate defensive security work.

- The Threat Level: Cybersecurity experts warn that AI’s ability to autonomously scan codebases, find hidden flaws, and write novel exploits is now a reality, forcing AI labs to treat their advanced coding tools as potential cyber-weapons.

- The Industry Shift: The strategy mirrors Anthropic’s recent decision to strictly limit access to its powerful Mythos Preview model, signaling a new era in which top-tier defensive AI is gated to keep it out of the hands of bad actors.

Why it matters: We have entered the era of AI-driven cyber warfare. Model-makers are now genuinely terrified that their own tools could be used by rogue actors or nation-states to autonomously dismantle financial systems or critical infrastructure. By staggering the release of advanced cyber capabilities and gating them behind trusted partner programs, Silicon Valley is attempting to give corporate and government defenders a desperately needed head start to patch the internet’s vulnerabilities.

UrviumAI Take: Defensive AI is becoming a heavily gated resource. The fact that OpenAI and Anthropic are both restricting their top cybersecurity tools to “select partners” means the open internet is about to become incredibly dangerous for average enterprises. If malicious hackers build or steal equivalent models, as many expect they eventually will, everyday businesses will be outgunned. You must aggressively press your security vendors for access to these restricted, top-tier defensive models, or your company’s digital infrastructure will be a sitting duck.

Other AI News Today:

- Amazon CEO Andy Jassy released his annual shareholder letter, defending the company’s massive $200 billion AI capex by revealing a $15 billion annualized AI revenue run rate.

- Elon Musk’s xAI is undergoing a massive engineering reorganization, installing SpaceX executives and losing its CFO as the company prepares for an IPO.

- OpenAI launched a new $100/month “Pro” tier offering 5x more Codex usage than the Plus plan, targeting developers running heavy agentic coding sessions.

- Meta shut down its internal “Claudeonomics” leaderboard after employees artificially inflated their AI token usage rankings instead of doing actual work.

- Florida’s attorney general has opened a formal probe into OpenAI, alleging that ChatGPT actively helped a gunman plan a fatal campus shooting at Florida State University.

Jigar Chaudhary is the Editor-in-Chief at UrviumAI, where he oversees coverage of artificial intelligence news, tools, and in-depth studies. With over 5 years of experience analyzing AI and robotics, he focuses on maintaining high editorial standards, accurate reporting, and clear explanations to help readers understand how AI is shaping the future.