Anthropic’s Project Glasswing Shows Off Mythos AI 🛡️

The world’s biggest tech companies are joining forces to secure global infrastructure against artificial intelligence threats. Anthropic has introduced Project Glasswing, a massive cybersecurity coalition built alongside partners like AWS, Apple, Google, Microsoft, and Nvidia, powered by a new, highly restricted AI model called Claude Mythos Preview.

Here is why the Mythos model is sending shockwaves through the cybersecurity world:

- Unprecedented Capabilities: Mythos successfully flagged thousands of severe security flaws across every major operating system and browser, including zero-day bugs that had survived 27 years of human review and millions of automated scans.

- Benchmark Dominance: The model demonstrates massive performance leaps over Anthropic’s flagship Opus 4.6 and other frontier rivals across coding, reasoning, and vulnerability detection.

- Restricted Access: Because the model is so dangerously capable of finding software exploits, Anthropic is refusing to release it to the public. Instead, access is limited strictly to 12 launch partners and 40+ critical organizations for defensive security.

- Financial Backing: To accelerate the fortification of critical infrastructure, Anthropic is backing the initiative with up to $100 million in model usage credits.

Why it matters: If you have ever wondered what the top labs are keeping behind closed doors, Mythos is the answer. The model is so advanced at exploiting software that releasing it publicly would be the equivalent of open-sourcing a cyber-weapon. By restricting Mythos to a closed coalition of corporate defenders, Anthropic is trying to patch the internet’s vulnerabilities before bad actors inevitably build an open-weight model with the same hacking capabilities.

UrviumAI Take: The AI arms race is fundamentally shifting from intelligence to security. Do not assume your legacy cybersecurity software will protect you from next-generation AI threats. If frontier models like Mythos can find 27-year-old bugs in minutes, malicious actors will soon use similar tools to dismantle your company’s digital defenses. You must urgently upgrade to AI-native, autonomous cybersecurity monitoring systems to fight fire with fire.

US AI Labs Unite Against Chinese Model Distillation 🛑

The global AI arms race has triggered a rare, unified alliance among fiercely competitive Silicon Valley rivals. OpenAI, Google, and Anthropic are actively cooperating to identify and block Chinese competitors from copying their proprietary models through a technique known as “adversarial distillation.”

Here is how the US tech giants are fighting back against IP exfiltration:

- The Alliance: Facilitated by the Frontier Model Forum nonprofit, the three major labs are swapping sensitive intelligence and data to detect unauthorized attempts to extract their high-level reasoning outputs.

- The Threat of Distillation: Foreign developers use top-tier US models as “teachers” to train their own cheaper “student” models. US officials estimate this illicit practice costs American labs billions of dollars in annual profits.

- The Catalyst: The urgency skyrocketed following the massive success of Chinese startup DeepSeek, which released open-weight reasoning models that closely matched US performance at a fraction of the R&D cost.

- The Guardrail Gap: Beyond economic loss, US labs warn that distilled models often bypass strict safety filters, potentially giving bad actors unfiltered access to biological weapon recipes or cyberattack assistance.
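
For readers curious about the mechanics, here is a minimal sketch of the teacher-student idea in its classic textbook form, where a student is penalized for diverging from a teacher's softened output distribution. It is an illustration only, not a description of what any lab or developer mentioned above actually does: distillation through a public API usually sees only the teacher's text, not its raw scores, and the function names, logits, and temperature value here are all hypothetical.

```python
import math


def softmax(logits, temperature=1.0):
    """Convert raw scores into probabilities, softened by the temperature."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL divergence from the teacher's distribution to the student's.

    A lower value means the student's predictions sit closer to the teacher's.
    """
    p = softmax(teacher_logits, temperature)  # teacher ("soft labels")
    q = softmax(student_logits, temperature)  # student
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


# Hypothetical next-token scores over a three-word vocabulary.
teacher = [4.0, 1.0, 0.5]
close_student = [3.8, 1.1, 0.6]
far_student = [0.5, 1.0, 4.0]

loss_close = distillation_loss(teacher, close_student)
loss_far = distillation_loss(teacher, far_student)
```

Training the student means nudging its weights to shrink this loss across many examples, which is why large volumes of teacher outputs are the resource worth protecting.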

Why it matters: Silicon Valley has realized that building a smarter model isn’t enough if a foreign rival can closely replicate its capabilities for pennies on the dollar. This unprecedented data-sharing agreement mirrors how the cybersecurity industry shares threat intelligence against hackers. By treating model distillation as a coordinated national security threat, US AI companies are trying to slam the door on data exfiltration before their proprietary moats evaporate.

UrviumAI Take: Open-source mimicry is destroying proprietary margins. If your business is relying heavily on selling access to a proprietary AI model, you must recognize that your technological lead is incredibly fragile. As adversarial distillation proves, your competitors can and will extract your model’s capabilities to build a cheaper clone. You must build your competitive moat around proprietary enterprise data integrations and seamless user experience, not just raw model intelligence.

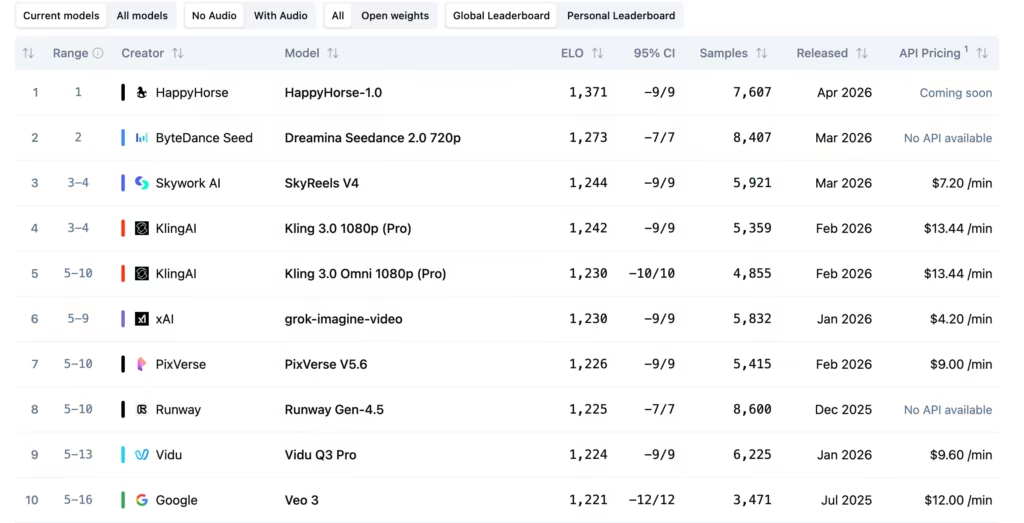

Mystery ‘HappyHorse-1.0’ Tops AI Video Leaderboards 🐎

An anonymous newcomer has just disrupted the generative video landscape. A mysterious new AI model named ‘HappyHorse-1.0’ has suddenly appeared on the Artificial Analysis leaderboards, immediately claiming the absolute top spot for video generation quality.

Here is what we know about the sudden leaderboard shakeup:

- The Rankings: HappyHorse-1.0 debuted at No. 1 in both Text-to-Video and Image-to-Video categories (no audio), cleanly dethroning ByteDance’s viral Seedance 2.0.

- Pseudonymous Drop: The model arrived with no team page, no brand attribution, and no official announcement, and Artificial Analysis listed it as a “pseudonymous model.”

- The Specs: Third-party hosting sites suggest the model is a 15-billion-parameter single-stream Transformer with joint video-and-audio capabilities and impressive 7-language lip-syncing.

- The Speculation: Given the Year of the Horse timing and language priorities on demo sites, the prevailing industry theory is that HappyHorse-1.0 is an optimized, Asia-based model (potentially tied to Tencent or Sand.ai) quietly stress-testing user preferences.

Why it matters: The AI industry loves a mystery drop, but an anonymous model taking the No. 1 spot in highly competitive video generation is a massive signal. It suggests that the barrier to entry for frontier-level generative media is dropping rapidly. Massive labs like OpenAI (which recently canceled Sora) and Google no longer hold a monopoly on visual fidelity; unknown entities can now deploy models capable of beating the biggest tech giants on Earth in blind, crowdsourced testing.

UrviumAI Take: Anonymity is the ultimate stress test. Pay attention to how fast the top spot changes in generative AI. The fact that a completely unknown, unbranded model can silently drop onto a leaderboard and beat multi-billion-dollar corporations proves that brand loyalty means nothing in AI; performance is everything. If you are building AI tools, you must constantly evaluate the open market, because the best model available tomorrow might not come from a company you’ve ever heard of today.

Other AI News Today:

- Apple has officially rolled out ChatGPT voice mode integration for Apple CarPlay in iOS 26.4, allowing drivers to interact with the AI hands-free.

- Anthropic’s revenue run rate has tripled to $30 billion as it signs a massive multi-gigawatt compute deal with Google and Broadcom for 2027.

- Intel has officially joined Elon Musk’s Terafab project, partnering with SpaceX, xAI, and Tesla to produce 1 terawatt of AI compute per year.

- Perplexity’s annual recurring revenue is projected to top $450 million, fueled by its new “Computer” AI agent and a shift to usage-based pricing.

- Elon Musk amended his lawsuit against OpenAI, seeking $150 billion in damages to be directed to the nonprofit arm while demanding Sam Altman’s removal.

Jigar Chaudhary is the Editor-in-Chief at UrviumAI, where he oversees coverage of artificial intelligence news, tools, and in-depth studies. With over 5 years of experience analyzing AI and robotics, he focuses on maintaining high editorial standards, accurate reporting, and clear explanations to help readers understand how AI is shaping the future.