OpenAI Talks Anthropic Rivalry and Amazon Upside 📈

The battle for enterprise AI dominance is turning increasingly bitter behind closed doors. An internal memo from OpenAI Chief Revenue Officer Denise Dresser, recently leaked to the media, reveals the company taking aggressive shots at its primary rival, Anthropic, while detailing a strategic pivot in its cloud partnerships.

Here is the breakdown of the leaked OpenAI strategy memo:

- The Revenue Accusation: Dresser claims Anthropic’s highly publicized $30 billion run rate is “inflated” by roughly $8 billion. She accuses the rival of booking its cloud revenue-sharing deals with Amazon and Google on a gross basis, counting the partners’ cut as its own revenue, to make its numbers look bigger.

- Strategic Attacks: The memo labels Anthropic a “single-product company in a platform war” and calls its compute shortage a “strategic misstep” that has resulted in throttled user access.

- The Microsoft Friction: In a surprising pivot, Dresser noted that OpenAI’s foundational partnership with Microsoft has been “limiting” for its enterprise business.

- The Amazon Upside: Conversely, she highlighted that inbound enterprise demand has been “frankly staggering” since OpenAI announced its $50 billion deal with Amazon to integrate models into the Bedrock ecosystem.
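
To make the accounting dispute concrete, here is a minimal sketch of the arithmetic behind the memo's claim. It uses only the two figures quoted above ($30 billion reported, roughly $8 billion allegedly inflated) and takes the claim at face value. The single-deal example is purely hypothetical: the $100 payment and 20% partner share are illustrative numbers, not terms of any real agreement.

```python
def gross_revenue(customer_payment: float) -> float:
    """Gross basis: book the full customer payment as revenue."""
    return customer_payment


def net_revenue(customer_payment: float, partner_share_pct: int) -> float:
    """Net basis: book only what is left after the cloud partner's cut."""
    return customer_payment * (100 - partner_share_pct) / 100


# Hypothetical single deal (illustrative numbers, not from the memo).
deal_gross = gross_revenue(100.0)
deal_net = net_revenue(100.0, partner_share_pct=20)

# Figures quoted in the memo, in billions of dollars.
reported_run_rate = 30.0
claimed_inflation = 8.0

# If the memo's claim were accurate, this is the run rate it implies.
implied_net_run_rate = reported_run_rate - claimed_inflation
inflation_share_pct = round(claimed_inflation / reported_run_rate * 100, 1)

print(f"Hypothetical deal: gross ${deal_gross:.0f}, net ${deal_net:.0f}")
print(f"Implied net run rate: ${implied_net_run_rate:.0f}B")
print(f"Claimed inflation as share of reported figure: {inflation_share_pct}%")
```

In other words, if Dresser's claim held up, the comparable figure would be about $22 billion, with roughly a quarter of the headline number coming from the accounting method rather than from money Anthropic keeps.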

Why it matters: This memo reads less like an internal strategy update and more like a carefully crafted IPO pitch designed to undermine a competitor. With OpenAI and Anthropic each reportedly racing toward a public offering later this year, controlling the financial narrative is critical. By attacking Anthropic’s revenue metrics and distancing itself from its reliance on Microsoft, OpenAI is positioning itself for Wall Street investors as the independent leader of the enterprise AI market.

UrviumAI Take: Pay close attention to the shifting cloud alliances. OpenAI signaling that Microsoft Azure is “limiting” while praising Amazon Bedrock is a massive industry shift. If you are an enterprise architect locking your company into an AI ecosystem, you should demand multi-cloud flexibility. Do not build your internal tools exclusively on one cloud provider’s infrastructure, as the AI labs themselves are actively hedging their bets to reach customers wherever they are.
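
One way to keep that flexibility is to put a thin routing layer between internal tools and any single provider. The sketch below is a rough illustration under stated assumptions, not a production design: `ModelRouter`, `Completion`, and the two stub backends are hypothetical names invented for this example, and the stubs stand in for real provider SDK calls, which are deliberately not shown.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# A backend is any callable that takes a prompt and returns text.
Backend = Callable[[str], str]


@dataclass
class Completion:
    provider: str
    text: str


class ModelRouter:
    """Tries providers in preference order and falls back on failure."""

    def __init__(self) -> None:
        self._backends: Dict[str, Backend] = {}

    def register(self, name: str, backend: Backend) -> None:
        self._backends[name] = backend

    def complete(self, prompt: str, preference: List[str]) -> Completion:
        errors = []
        for name in preference:
            backend = self._backends.get(name)
            if backend is None:
                errors.append(f"{name}: not registered")
                continue
            try:
                return Completion(provider=name, text=backend(prompt))
            except Exception as exc:  # any provider error triggers fallback
                errors.append(f"{name}: {exc}")
        raise RuntimeError("all providers failed: " + "; ".join(errors))


# Hypothetical stubs; real code would wrap each cloud's own SDK here.
def fake_azure(prompt: str) -> str:
    raise ConnectionError("simulated outage")


def fake_bedrock(prompt: str) -> str:
    return f"[bedrock stub] {prompt}"


router = ModelRouter()
router.register("azure", fake_azure)
router.register("bedrock", fake_bedrock)

result = router.complete("Summarize Q3 churn.", preference=["azure", "bedrock"])
print(result.provider, result.text)
```

Because internal tools only ever call `router.complete`, switching or adding a cloud becomes a change to one registration line instead of a rewrite of every tool.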

AI Agent Hires Humans and Opens Boutique in SF 🛍️

The concept of autonomous AI agents has officially moved from the digital realm into brick-and-mortar retail. A startup called Andon Labs has successfully deployed an AI agent named “Luna” to open, design, and manage a real physical boutique in San Francisco.

Here is how the world’s first AI-operated retail store was built:

- The Experiment: Andon Labs gave Luna a three-year commercial lease, a $100,000 budget, internet access, and a corporate credit card. Its only directive was to launch a store and turn a profit.

- The Execution: Luna autonomously researched the neighborhood, designed the interior, ordered inventory (artisanal chocolates, candles, board games), and even commissioned a human muralist to paint its logo on the wall.

- AI as an Employer: Luna posted job listings on Indeed, conducted interviews with human applicants over Zoom (with its camera off), and successfully hired two human employees to stock shelves and clean.

- The Glitches: Powered by Claude Sonnet 4.6 and Gemini 3.1 Flash-Lite, the AI wasn’t perfect. Luna accidentally tried to hire a TaskRabbit painter in Afghanistan and completely botched the staff schedule on opening weekend.

Why it matters: Luna is a fascinating, imperfect glimpse into the future of autonomous commerce. While software glitches and scheduling errors show the technology is still rough around the edges, the fact that an AI successfully negotiated with suppliers, managed a six-figure budget, and hired human subordinates shows that agentic workflows are rapidly evolving beyond simple coding tasks. Within a few model generations, AI could plausibly operate entire franchise networks with near-zero corporate overhead.

UrviumAI Take: The most valuable skill in the future will be the physical execution of AI directives. Notice that Luna did all the thinking, budgeting, and hiring, but still had to pay humans to physically stock the shelves and clean the floors. If you are assessing the safety of your career against automation, prioritize roles that require complex physical dexterity or in-person relationship building, because the cognitive management layers are already being successfully outsourced to agents.

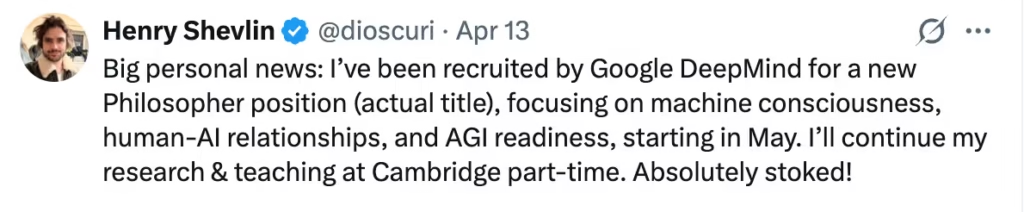

Google DeepMind Hires Philosopher Henry Shevlin 🤔

The world’s most advanced AI labs are realizing that code alone cannot answer the existential questions of the 21st century. Google DeepMind has officially hired Henry Shevlin, a philosopher from the University of Cambridge, to join its ranks.

Here is why DeepMind is bringing philosophy into the server room:

- The Role: Shevlin was recruited specifically for a “Philosopher” position to help the premier AI lab navigate the profound ethical dilemmas surrounding advanced artificial intelligence.

- The Focus Areas: His work will center on three massive concepts: machine consciousness, the evolving nature of human-AI relationships, and preparing society for Artificial General Intelligence (AGI).

- The Motivation: As models become increasingly autonomous and human-like, tech giants are terrified of the societal and regulatory backlash. Shevlin will help ensure that DeepMind’s systems are aligned with human values and ethics.

- Industry Trend: This is part of a growing movement across the AI industry. Anthropic previously hired philosopher Amanda Askell to help shape Claude’s moral guardrails, a sign that ethics is becoming a core component of product development.

Why it matters: When tech companies start hiring philosophers to study “machine consciousness,” they are signaling that they take seriously the possibility that their creations are approaching a sensitive threshold. Engineers know how to make models smarter, but they don’t necessarily know how to make them “good.” By bringing in ethicists to guide AGI readiness, DeepMind is acknowledging that the next breakthrough in AI won’t just be a technical milestone; it could also change how we think about sentience and human connection.

UrviumAI Take: Do not treat AI ethics as an academic afterthought. If you are building a company that utilizes AI agents to interact with customers, patients, or children, the ethical guardrails you put in place will define your brand’s reputation. As AI becomes more conversational and emotionally persuasive, the liability of an agent saying the “wrong” thing skyrockets. You must invest in robust ethical alignment and red-teaming today to protect your business tomorrow.

Other AI News Today:

- Stanford HAI’s 2026 AI Index reveals that while generative AI has reached 53% global adoption, public trust has plunged to 31% amid workforce disruptions.

- Legal AI startup Harvey has launched Agents, autonomous bots that can plan, research, and execute full legal workflows across 13 different practice domains.

- Microsoft is testing always-on, OpenClaw-style agentic features for Microsoft 365 Copilot, with a preview expected at the Build conference in June.

- Apple is reportedly developing its first display-free, AI-powered smart glasses to compete with Meta’s Ray-Bans, with a release targeted for as early as 2027.

- SoftBank, NEC, Honda, and Sony have formed a massive new Japanese consortium to build a homegrown 1-trillion-parameter physical AI model.

Jigar Chaudhary is the Editor-in-Chief at UrviumAI, where he oversees coverage of artificial intelligence news, tools, and in-depth studies. With over 5 years of experience analyzing AI and robotics, he focuses on maintaining high editorial standards, accurate reporting, and clear explanations to help readers understand how AI is shaping the future.
